Notes for Number Theory

© 2019 Brian Heinold

Here are the notes I wrote up for a number theory course I taught. The notes cover elementary number theory but don't get into anything too advanced. My approach to things is fairly informal. I like to explain the ideas behind everything without getting too formal and also without getting too wordy.

If you see anything wrong (including typos), please send me a note at heinold@msmary.edu.

One of the most basic concepts in number theory is that of divisibility. The concept is familiar: 14 is divisible by 7, even numbers are divisible by 2, prime numbers are only divisible by themselves and 1, etc. Here is the formal definition:

For example, 20 is divisible by 4 because we can write 20 = 4 · 5; that is n = dk, with n = 20, d = 4, and k = 5. The equation n = dk is the key part of the definition. It gives us a formula that we can associate with the concept of divisibility.

This formula is handy when it comes to proving things involving divisibility. If we are given that n is divisible by d, then we write that in equation form as n = dk for some integer k. If we need to show that n is divisible by d, then we need to find some integer k such that n = dk.

Here are a few example proofs:

Proof. Even numbers are divisible by 2, so we can write n = 2k for some integer k. Then n² = (2k)² = 4k², which we can write as n² = 2(2k²). We have written n² as 2 times some integer, so we see that n² is divisible by 2 (and hence even). ◻

Proof. Since a ∣ b and b ∣ c we can write b = aj and c = bk for some integers j and k. (Note that we must use different integers here, since the integer j that works for a ∣ b does not necessarily equal the integer k that works for b ∣ c.) Plug the first equation into the second to get c = (aj)k, which we can rewrite as c = a(jk). So we see that a ∣ c, since we have written c as a multiple of a. ◻

Proof. Since a ∣ b, we can write b = ak for some integer k. Multiply both sides of this equation by c to get (ac)k = bc. This equation tells us that ac ∣ bc, which is what we want.◻

Here are a couple of other divisibility examples:

Solution. All we need is a single counterexample. Setting a = 5, b = 3, and c = 7 does the trick.

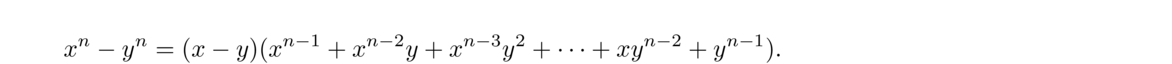

Solution. The only answers are 593 and 607. It would be tedious to find them by checking divisors starting with 2, 3, etc. A better way is to use the algebraic fact x² – y² = (x–y)(x+y). This fact is very useful in number theory.

With a little cleverness, we might notice that 359951 is 360000 – 49, which is 600² – 7². We can factor this into (600–7)(600+7), or 593 × 607.
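The difference-of-squares trick can be turned into a small search, often called Fermat's factorization method: try x = ⌈√n⌉, ⌈√n⌉+1, … until x² – n is a perfect square y². Here is a minimal sketch of that idea. The function name `fermat_factor` is an illustrative choice, and the sketch assumes n is odd and positive.

```python
from math import isqrt

def fermat_factor(n):
    """Return (x - y, x + y) with n = x^2 - y^2, assuming n is odd and positive."""
    x = isqrt(n)
    if x * x < n:
        x += 1              # start at the ceiling of sqrt(n)
    while True:
        y2 = x * x - n      # candidate for y^2
        y = isqrt(y2)
        if y * y == y2:     # x^2 - n is a perfect square, so we are done
            return x - y, x + y
        x += 1

print(fermat_factor(359951))  # (593, 607)
```

The search is quick here because the two factors are close together. When the factors are far apart, it can take many steps.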

The division algorithm, despite its name, is not really an algorithm. It states that when you divide two numbers, there is a unique quotient and remainder. Specifically, it says the following:

The integers q and r are called the quotient and remainder. For example, if a = 27 and b = 7, then q = 3 and r = 6. That is, 27 ÷7 is 3 with a remainder of 6, or in equation form: 27 = 7 · 3+6. The proof of the theorem is not difficult and can be found in number theory textbooks.

One of the keys here is that the remainder is less than b. Here are some consequences of the theorem:

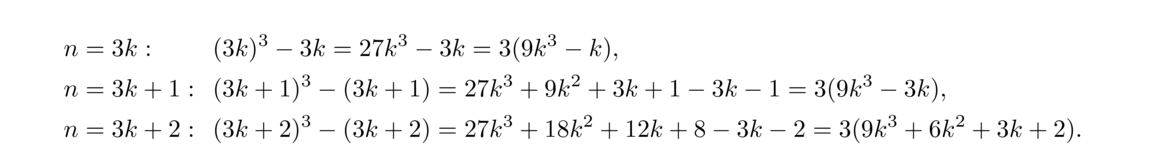

We see that in each case, n³ – n is divisible by 3. By the division algorithm, these are the only cases we need to check, since every integer must be of one of those three forms.

Proof. Every integer n is of the form 2k or 2k+1.

If n = 2k, then we have n² = 4k², which is of the form 4k. (We are being a bit informal here with the notation. When we say the number is of the form 4k, the k is different from the k used in n = 2k. What we're really saying here is that the number is of the form 4 times some integer. A more rigorous way to approach this might be to let n = 2j, compute n² = 4j² and say n² = 4k, with k = j².)

If n = 2k+1, then we have n² = 4k²+4k+1 = 4(k²+k) + 1, which is of the form 4k+1.

◻

Proof. Every integer is of the form 6k, 6k+1, 6k+2, 6k+3, 6k+4, or 6k+5. An integer of the form 6k is divisible by 6. An integer of the form 6k+2 is divisible by 2 as it can be written as 2(3k+1). Similarly, an integer of the form 6k+3 is divisible by 3 and an integer of the form 6k+4 is divisible by 2. None of these forms can be prime (except for the integers 2 and 3, which we exclude), so the only forms left that could be prime are 6k+1 and 6k+5. ◻

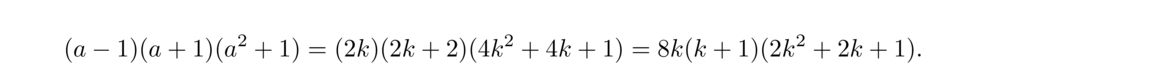

Proof. We will start by writing a⁴+b⁴–2 as (a⁴–1) + (b⁴–1). Let's take a look at the a⁴–1 term. We can factor that into (a²–1)(a²+1) and further into (a–1)(a+1)(a²+1). Since we know a is odd, we can write a = 2k+1 and we have

a⁴–1 = (2k)(2k+2)(4k²+4k+2) = 8k(k+1)(2k²+2k+1).

One of k and k+1 is even, so k(k+1) is divisible by 2. So we have that a⁴–1 is divisible by 16. A similar argument tells us that b⁴–1 is divisible by 16, and from there we get that a⁴+b⁴–2 is divisible by 16, since it is the sum of two multiples of 16.

◻

Proof. First, note that the square of an even is even and the square of an odd is odd since (2k)² = 2(2k²) is even and (2k+1)² = 2(2k²+2k)+1 is odd. In particular, if an integer a² is even, then a is even as well.

Suppose √2 = p/q, with p and q positive integers. By clearing common factors, we can assume the fraction is in lowest terms. Multiply both sides by q and square both sides to get 2q² = p². This tells us that 2 ∣ p². Thus 2 ∣ p by the statement above, and we can write p = 2k for some integer k. So we have 2q² = (2k)², which simplifies to q² = 2k². This tells us that 2 ∣ q² and hence 2 ∣ q. But this is a problem because p and q both have a factor of 2 and p/q is supposed to already be in lowest terms. So we have a contradiction, which shows that it must not be possible to write √2 as a ratio of integers.

It is not too hard to extend this result to show that the nth root of m, ⁿ√m, is irrational unless m is a perfect nth power.

The second example above is sometimes useful when working with perfect squares. We record it as a theorem:

For example, 3999 is not a perfect square since it is 4000–1, which is of the form 4k–1 (same as a 4k+3 form). On the other hand, just because something is of the form 4k+1 does not mean it is a perfect square. For instance, 41 is of the form 4k+1, but isn't a perfect square.

As another example, 3n²–1 is not a perfect square for any integer n. To see this, break the problem into two cases: n = 2k and n = 2k+1. If n = 2k, then 3n²–1 = 3(4k²)–1 = 4(3k²)–1. If n = 2k+1, then 3n²–1 = 3(4k²+4k+1)–1 = 4(3k²+3k)+2. Neither of these is of the form 4k or 4k+1, so they are not perfect squares.

Similar results to the theorem above can be proved for other integers. For instance, every perfect square is of the form 5k, 5k+1, or 5k+4.
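A quick way to guess results of this kind is to compute which remainders actually occur when squares are divided by a given integer. The helper below, `square_remainders`, is a hypothetical name used only for illustration. It is a numerical check over a finite range and not a proof.

```python
def square_remainders(m, limit=1000):
    """Collect the remainders of n^2 divided by m for n = 0, 1, ..., limit - 1."""
    return sorted({(n * n) % m for n in range(limit)})

print(square_remainders(4))  # [0, 1]
print(square_remainders(5))  # [0, 1, 4]
```

The remainders repeat with period m, so checking n = 0, 1, …, m–1 would already be enough to see every possible value.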

Note that we can also take the remainders to be in other ranges besides from 0 to b–1. The most useful range is from –b/2 to b/2. For instance, with b = 3, we can also write every integer as being of the form 3k–1, 3k, or 3k+1. An integer of the form 3k–1 is also of the form 3k+2. As another example, we can write every integer in one of the forms 6k–2, 6k–1, 6k, 6k+1, 6k+2, or 6k+3.

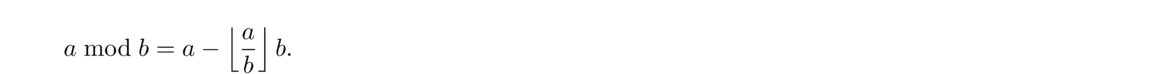

In grammar school, the remainder always seemed to me to be an afterthought, but in higher math, it is quite useful and important. It is built into most programming languages, usually with the symbol mod or %.

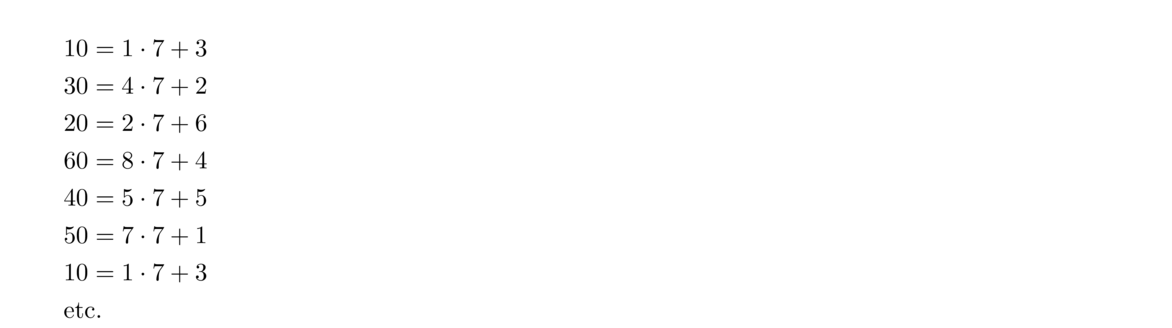

For example, suppose we want to find 68 mod 7. The definition above tells us to find the closest multiple of 7 less than or equal to 68 and subtract. The closest such multiple is 63, and 68–63 = 5. So 68 mod 7 = 5.

This procedure applies to negatives as well. For instance, to compute –31 mod 5, the closest multiple of 5 less than or equal to -31 is -35, which is 4 away from -31, so –31 mod 5 = 4, or –31 ≡ 4 (mod 5).

As one further example, suppose we want 179 mod 18. 179 is one less than 180, a multiple of 18, so it leaves a remainder of 18-1 = 17. So 179 mod 18 = 17.
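The three examples above can be checked with Python's `%` operator. For a positive modulus, Python always returns a result between 0 and b–1, even for negative numbers, which matches the convention used here. Some other languages (C and Java, for instance) can return a negative result when the first operand is negative, so it is worth checking before relying on it.

```python
print(68 % 7)     # 5
print(-31 % 5)    # 4
print(179 % 18)   # 17

# divmod returns the quotient and remainder of the division algorithm together
q, r = divmod(27, 7)
print(q, r)       # 3 6
```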

A nice way to compute mods mentally or by hand is to use a streamlined version of the grade school long division algorithm. For example, suppose we want to compute 34529 mod 7. Here is the procedure:

The end result is 34529 mod 7 = 5.
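The streamlined long division idea can be sketched in a few lines: work through the digits from left to right, and at each step keep only the remainder of what has been read so far. The function name `mod_by_digits` is an illustrative assumption, and the sketch only handles nonnegative n.

```python
def mod_by_digits(n, m):
    """Compute n mod m for n >= 0 by processing decimal digits left to right."""
    r = 0
    for digit in str(n):
        # bring down the next digit, then keep only the remainder
        r = (10 * r + int(digit)) % m
    return r

print(mod_by_digits(34529, 7))  # 5
```

For 34529 and 7, the running remainders are 3, 6, 2, 1, 5, which are the same small numbers that show up when doing the computation by hand.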

For example, the gcd of 24 and 60 is 12, since 12 is the largest integer that divides both 24 and 60. As another example, the gcd of 18 and 25 is 1. Those numbers have no divisors in common besides 1.

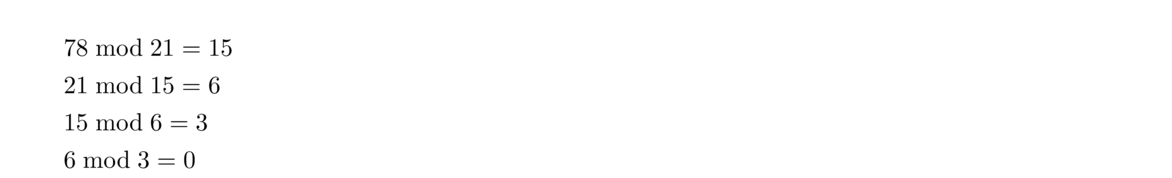

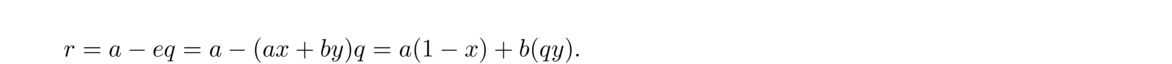

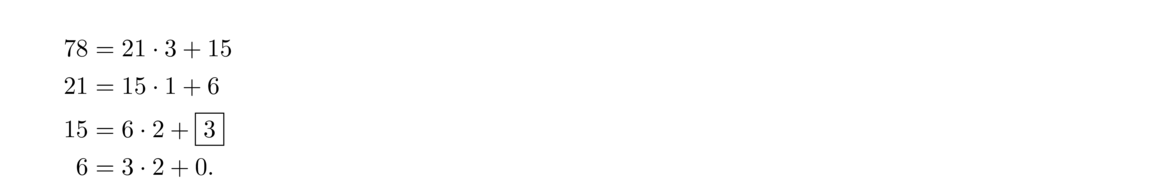

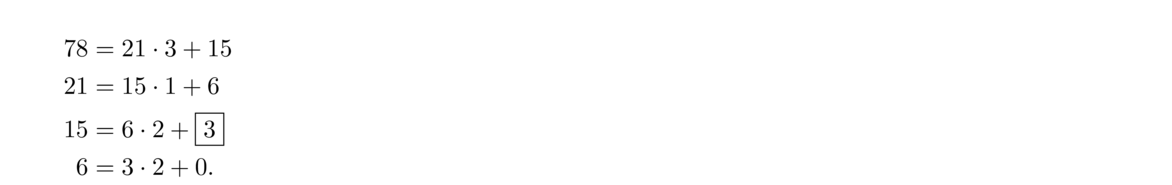

To find the gcd of two integers, the Euclidean algorithm is used. We'll start with an example, finding gcd(21, 78):

In general, to find gcd(a, b), assume a ≤ b, and compute

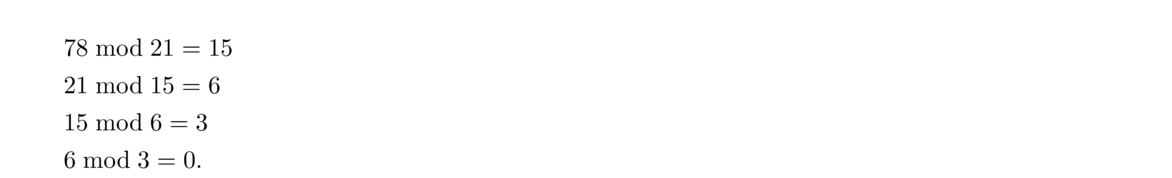

The reason it works is that the common divisors of a and b are exactly the same as the common divisors of a and b mod a, so their gcds must be the same. Because of this, when we apply the Euclidean algorithm, the gcd of the two numbers on the left side stays constant all the way through the algorithm. For example, when we compute gcd(21, 78), we get the following:

It is worth showing why the common divisors of a and b are the same as the common divisors of a and b mod a. First, when we apply the division algorithm to a and b, we get b = aq + r, where r = b mod a. If a and b are both divisible by some common divisor d, then r = b–aq will be as well, since we can factor d out of the right side. On the other hand, if a and r are both divisible by some common divisor d, then b = aq+r will be as well, since we can factor d out of the right side.
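The Euclidean algorithm translates almost directly into code. The following is a minimal sketch that repeatedly replaces the pair (a, b) with (b mod a, a) until the first number becomes 0. It assumes a and b are nonnegative and not both zero.

```python
def gcd(a, b):
    """Euclidean algorithm for nonnegative integers a and b, not both zero."""
    while a != 0:
        # replace (a, b) with (b mod a, a); the gcd of the pair is unchanged
        a, b = b % a, a
    return b

print(gcd(21, 78))  # 3
```

For gcd(21, 78), the pairs visited are (21, 78), (15, 21), (6, 15), (3, 6), and (0, 3). Python's standard library also provides `math.gcd`.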

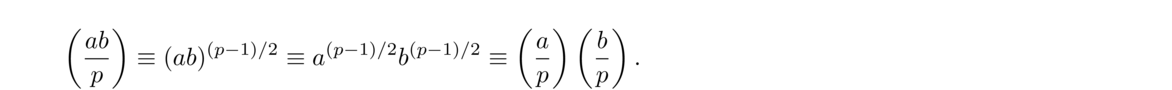

In number theory, a linear combination of the integers a and b is an expression of the form ax+by for some integers x and y. For example, a linear combination of a = 6 and b = 15 is an expression of the form 6x+15y, like 6(1)+15(2) = 36 or 6(4)+15(–1) = 9. Linear combinations are important in a variety of contexts.

Linear combinations have a close connection with the gcd. Suppose we want to know if it is possible to write 4 as a linear combination of 6 and 15. That is, can we find integers x and y such that 6x+15y = 4? The answer is no, since the left side is a multiple of 3 (namely 3(2x+5y)), but the right side is not a multiple of 3. By the same reasoning, in general, if c is not a multiple of gcd(a, b), then it is impossible to write c as a linear combination of a and b.

What about multiples of the gcd? Is it always possible to write ax+by = c if c is a multiple of the gcd? The answer is yes. We just have to show how to write the gcd as a linear combination of a and b. Once we have this (see the proof below for how), we can then multiply through to get c. For instance, suppose we want to find x and y such that 6x+15y = 21. We have gcd(6, 15) = 3 and it is not hard to find that 6(3)+15(–1) = 3. If we multiply through by 7, we get 6(21)+15(–7) = 21. So it just comes down to writing the gcd as a linear combination.

Here is a formal statement of the above along with a proof:

We now show that it is possible to write d as a linear combination of a and b. Start by letting e be the smallest positive linear combination of a and b. We need to show that e = d.

Finally, if c is a multiple of d (say c = dk for some integer k), and we have integers x and y such that ax+by = d, then we can multiply through by k to get a(kx)+b(ky) = c.

In particular, we have the following important special case:

This fact is useful when working with gcds because it gives us an equation to work with. On the other hand, be careful. Just because we can write ax+by = c, that does not mean c = gcd(a, b). All we are guaranteed is that c is a multiple of gcd(a, b).

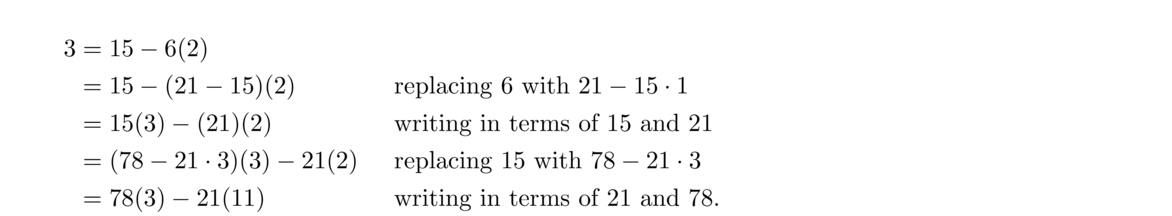

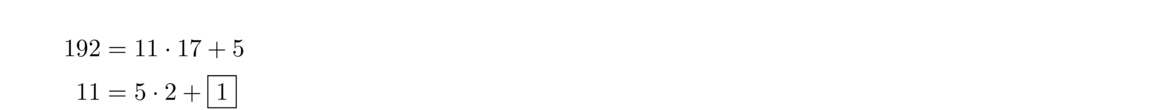

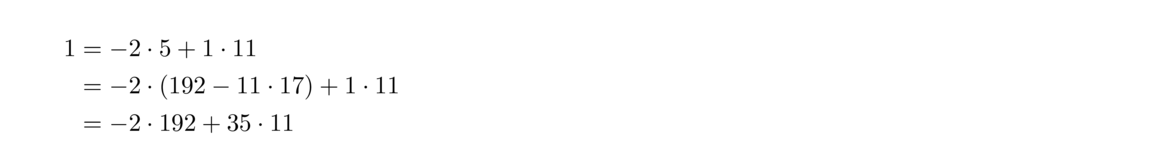

Theorem 3 tells us that the gcd is a linear combination, but it doesn't tell us how to find that linear combination. Being able to find that linear combination is important in a number of contexts. The trick is to use the Euclidean algorithm in a particular way.

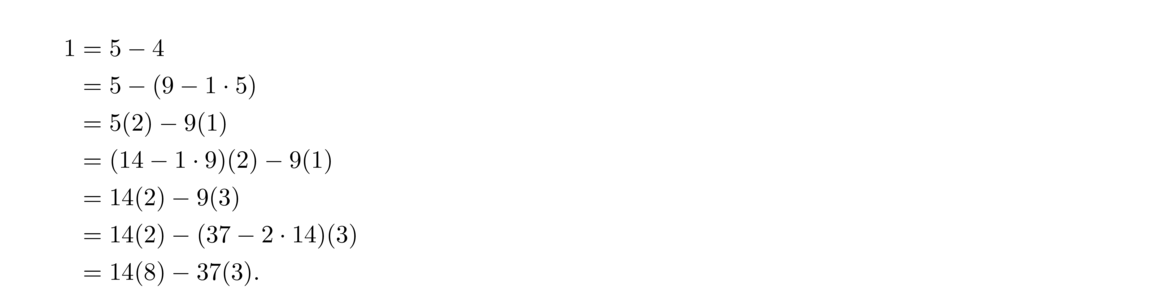

In the earlier example when we found gcd(21, 78) using the Euclidean algorithm, we used the modulo operation. Written out fully using the division algorithm, the Euclidean algorithm on this example is as follows:

We can use this sequence of steps to find the linear combination by working backwards. Start with the second-to-last equation from the Euclidean algorithm and work back up in the following way:

Note that at each step we can check our work by making sure the expression equals the gcd. For instance, in the third line above, 15(3)–21(2) = 45–42 = 3.

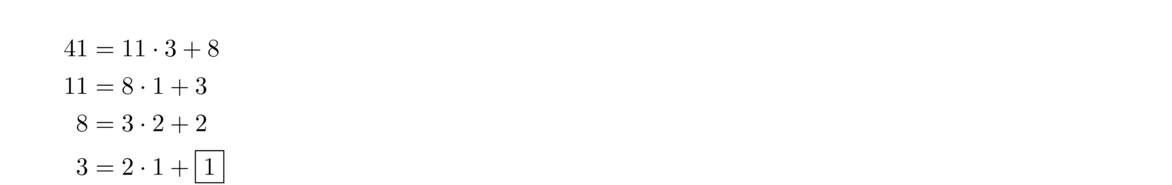

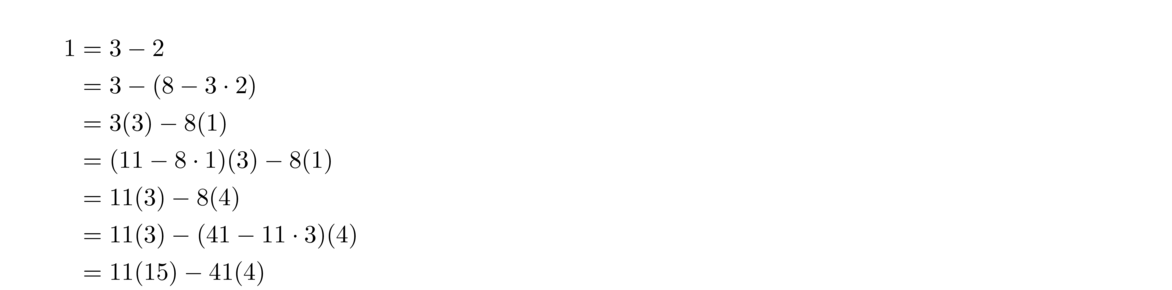

Here is another example. Suppose we want to find integers such that 11x+41y = 1. Start with the Euclidean algorithm:

Thus we have x = 15 and y = –4 that give us 11x+41y = 1.

This algorithm is called the extended Euclidean algorithm. It turns out to have a number of important uses, as we will see.

Here is a short Python program implementing a version of it. This is a streamlined version of the algebra above, based on the algorithm given at http://en.wikipedia.org/wiki/Extended_Euclidean_algorithm.
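A minimal sketch of such a program, following the iterative scheme described on that Wikipedia page, might look like the following. The function name `extended_gcd` and the variable names are illustrative assumptions, and the sketch assumes a and b are nonnegative and not both zero.

```python
def extended_gcd(a, b):
    """Return (g, x, y) with a*x + b*y == g == gcd(a, b)."""
    old_r, r = a, b      # remainders from the Euclidean algorithm
    old_x, x = 1, 0      # coefficients of a
    old_y, y = 0, 1      # coefficients of b
    while r != 0:
        q = old_r // r
        # each remainder stays a linear combination of a and b
        old_r, r = r, old_r - q * r
        old_x, x = x, old_x - q * x
        old_y, y = y, old_y - q * y
    return old_r, old_x, old_y

print(extended_gcd(11, 41))  # (1, 15, -4)
```

The invariant is that old_r = a·old_x + b·old_y at every step, so when the remainder reaches 0, old_r is the gcd and old_x and old_y are the coefficients.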

The keys to many proofs involving gcds are as follows:

Here are a few example proofs involving gcds:

Proof. The basic idea here is intuitively clear: If we divide through by the gcd, then what's left should not have factors in common, since all the common factors should be in the gcd.

Here is a more algebraic approach: Start by writing ax+by = d for some integers x and y. We can then divide through by d to get (a/d)x + (b/d)y = 1. Theorem 3 tells us that gcd(a/d, b/d) divides any linear combination of a/d and b/d. So gcd(a/d, b/d) is a divisor of 1, meaning it must equal 1. ◻

Proof. Write c = aj, c = bk, and d = ax+by for some integers j, k, x, and y. Multiply the last equation through by c to get cd = acx+bcy. Then plug in c = bk into the acx term and c = aj into the bcy term to get cd = abkx + bajy. So we have cd = ab(kx+jy), showing that ab ∣ cd.◻

Proof. Let d = gcd(a, b) and d′ = gcd(ka, kb). We can write d = ax+by for some integers x and y. Multiplying through by k gives kd = kax+kby. This is a linear combination of ka and kb, so we know that d′ ∣ kd. On the other hand, we can write d′ = kax′+kby′ for some integers x′ and y′. Divide through by k to get d′/k = ax′+by′. This is a linear combination of a and b, so d ∣ d′/k, or kd ∣ d′. Since d′ ∣ kd and kd ∣ d′, and k > 0, we must have d′ = kd.◻

Proof. Let d₁ = gcd(a, c) and d₂ = gcd(b, c). From these, we can write ax₁+cy₁ = d₁ and bx₂+cy₂ = d₂ for some integers x₁, x₂, y₁, and y₂. Also, since c ∣ (a–b) we can write a–b = ck for some integer k, which we can solve to get a = ck+b and b = a–ck. Plugging the former into the equation for d₁ gives (ck+b)x₁+cy₁ = d₁, which we can write as c(kx₁+y₁)+bx₁ = d₁. The left hand side is a linear combination of b and c, so it is a multiple of d₂ = gcd(b, c). Thus d₂ ∣ d₁. Plugging b = a–ck into the equation for d₂ and doing a similar computation gives d₁ ∣ d₂. Thus d₁ = d₂.

◻

A relative of the gcd is the least common multiple.

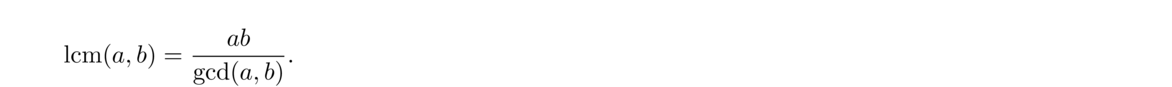

The following gives an easy way to find the lcm:

Here is an example: If a = 14 and b = 16, then gcd(a, b) = 2, ab = 224 and lcm(a, b) = 224/2 = 112. A simple way to think of this theorem is that ab is a multiple of both a and b, but it has some redundant factors in it. Dividing out by gcd(a, b) removes all of the redundancies, leaving the smallest possible common multiple. Here is a formal proof of the theorem:

First, since d is a divisor of a and b, we can write a = dk and b = dj. Then ab/d = aj and ab/d = bk, which shows that ab/d is a multiple of both a and b.

Next, let m be any positive common multiple of a and b. So we have m = as and m = bt for some integers s and t, and we can write 1/a = s/m and 1/b = t/m.

We can also write d as a linear combination d = ax+by for some integers x and y. Dividing both sides of this equation by ab, we get d/(ab) = x/b + y/a. Plugging in our earlier equations for 1/a and 1/b gives d/(ab) = xt/m + ys/m, which we can rewrite as m = (xt+ys)(ab/d). This tells us that ab/d is a divisor of m.

Since m is an arbitrary positive common multiple of a and b, and ab/d divides it, that means that ab/d is the smallest possible positive common multiple, which is what we wanted to prove.
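In code, the theorem gives a one-line way to compute the lcm once a gcd function is available. This sketch uses `math.gcd` from Python's standard library and assumes a and b are positive.

```python
from math import gcd

def lcm(a, b):
    """Least common multiple of positive integers via lcm(a, b) = ab / gcd(a, b)."""
    return a * b // gcd(a, b)

print(lcm(14, 16))  # 112
print(lcm(4, 6))    # 12
```

Integer division (`//`) is safe here because gcd(a, b) always divides ab exactly.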

There are other ways to find lcm(a, b). One way would be to list all the multiples of a and all the multiples of b and find the first one they have in common. This, however, is slow unless a and b are small. Another way uses the prime factorization. See Section 2.1 for an example.

Here is a simple example where the lcm is useful. Suppose one thing happens every 28 days and another happens every 30 days, and that both things happened today. When will they both happen again? The answer is lcm(28, 30) = 420 days from now. (A more general approach to handling these kinds of cyclical problems is covered in Section 3.10.)

As another example, some people theorize that the timing of periodic cicadas has to do with the lcm. Some species of cicadas only emerge every 13 years, while others emerge every 17 years. People have noticed that these values are both prime. This means that the lcm of these numbers with other numbers is relatively large. The things that eat cicadas are often on a boom-bust cycle, and it would be bad for cicadas to emerge in a year when there are a lot of predators. Suppose a certain predator is on a 4-year cycle. How often would a boom year coincide with a 13-year cicada emergence? The answer is every lcm(4, 13) = 52 years. On the other hand, if cicadas were on say a 14-year cycle, then it would happen every 28 years. It would also be bad for both the 13-year and the 17-year cicadas to emerge at once, since they would be competing for resources. But this will only happen every lcm(13, 17) = 221 years.

In other words, a and b are relatively prime provided they have no divisors in common besides 1 (or maybe -1 if they are negative). There are a number of facts in number theory that are only true for relatively prime integers. Here is one useful fact that follows quickly from Theorem 3:

One of the most useful tools in number theory is the following result:

For example, if c ∣ (5 × 12), and c has no factors in common with 5, then in order for it to divide 5 × 12 = 60, it must divide 12. On the other hand, if a and c do have factors in common besides 1, then the result might not hold. For instance, if c = 10, then 10 ∣ (5 × 12), but 10 ∤ 12.

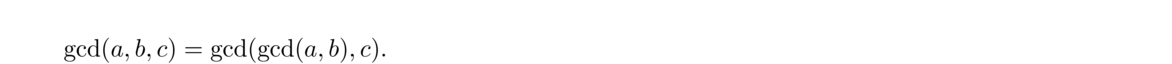

The concept of gcd can be applied to more than two integers. Namely, gcd(a₁, a₂, …, aₙ) is the largest integer that divides each of the aᵢ. For instance, gcd(24, 36, 60) = 12. The gcd can be computed from the Euclidean algorithm and the following fact:

It is also possible to compute the gcd by extending the ideas of the Euclidean algorithm. We perform several modulos at each step, always modding by the smallest value. Here is some (slightly tricky) Python code implementing these ideas:
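One way this idea might be coded is sketched below. At each step it finds the smallest value, reduces every value modulo it, and throws away the zeros. The function name `gcd_many` is an illustrative assumption, and the sketch assumes the inputs are nonnegative and not all zero.

```python
def gcd_many(*nums):
    """gcd of several nonnegative integers, not all zero."""
    vals = [n for n in nums if n != 0]
    while len(vals) > 1:
        m = min(vals)
        # mod everything by the smallest value; keep m and the nonzero remainders
        vals = [m] + [r for r in (v % m for v in vals) if r != 0]
    return vals[0]

print(gcd_many(24, 36, 60))  # 12
print(gcd_many(2, 4, 5))     # 1
```

Every remainder is smaller than m, so the smallest value strictly decreases until only one number, the gcd, is left.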

One thing to be careful of is that it is possible to have gcd(a, b, c) = 1, but not have gcd(a, b) and gcd(b, c) both equal to 1. For instance, gcd(2, 4, 5) = 1 since 1 is the largest integer dividing 2, 4, and 5, but gcd(2, 4)≠ 1.

For many theorems that require a bunch of integers a₁, a₂, …, aₙ to not have any factors in common, instead of requiring gcd(a₁, a₂, …, aₙ) = 1, often the following notion is used:

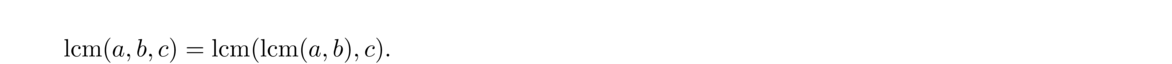

The lcm of integers a₁, a₂, …, aₙ is the smallest positive integer that is a multiple of each of the aᵢ. Similarly to the gcd, the lcm can be computed by using the rule below to break things down.

Here is a list of facts that might come in handy from time to time. It's not worth memorizing this list, but it may be useful to refer back to if you need a certain fact for something you are working on.

Most important facts

Divisibility

Gcds

Prime numbers are one of the main focuses of number theory.

Notice that 1 is not considered to be prime. The reason is that primes are thought of as fundamental building blocks of numbers. As we will soon see, every number is a product of primes, each prime helping to build up the number. However, 1 doesn't do any building, as multiplying by 1 doesn't accomplish anything. There are other ways in which 1 behaves differently from prime numbers, and for these reasons 1 is not considered prime.

The first few primes are 2, 3, 5, 7, 11, 13, 17, 19. Much of number theory is concerned with the structure of the primes—how frequent they are, gaps between them, whether there is any sort of pattern to them, etc.

Recall Euclid's lemma from Section 1.9. It states that if c ∣ ab and gcd(c, a) = 1, then c ∣ b. If c is a prime p that does not divide a, then gcd(p, a) = 1 automatically, since the only positive divisors of p are 1 and p. So whenever a prime p divides ab, it must divide a or b. Here is Euclid's lemma restated for primes:

Euclid's lemma could be used as an alternate definition for prime numbers as it is not too hard to show that no other number besides 1 has this property. In fact, Euclid's lemma is used to define analogs of prime numbers (like prime ideals) in abstract algebra.

Using induction, Euclid's lemma can be extended as follows:

A direct consequence of this is the following:

Euclid's lemma is one of the most important tools in elementary number theory and we will see it appear again and again.

In math there are a number of “fundamental theorems.” There is the fundamental theorem of algebra which states that every nonconstant polynomial has a root, the fundamental theorem of calculus that relates integration to differentiation, and in number theory, there is the fundamental theorem of arithmetic, which states that every integer greater than 1 can be factored uniquely into primes. For instance, we can factor 60 into 2 × 2 × 3 × 5 and there is no other product of primes equal to 60, other than changing the order of 2 × 2 × 3 × 5. Here is the formal statement of the theorem:

Here is intuitively why we can write n as a product of primes: Either n itself is prime (in which case we are done) or else n can be factored into a product ab. These integers are either prime or they themselves can be factored. These new factors are in turn either prime or they can be factored. We can continue this process, but eventually it must stop since the factors of a number are smaller than the number itself, and things can't keep getting smaller forever. This can be made formal using induction.

To show the representation is unique, suppose n = p₁p₂…pₖ and n = q₁q₂…qₘ are two different representations. From the first representation, we have p₁ ∣ n, and so p₁ ∣ q₁q₂…qₘ. By Corollary 10, we must have p₁ = qᵢ for some i. By rearranging terms, we can assume i = 1, so p₁ = q₁. By the same argument, we can similarly conclude that p₂ = q₂, p₃ = q₃, etc. Thus the two representations are the same.

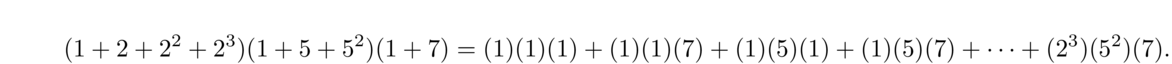

In the factorization, some of the primes may be the same, like in 720 = 2 × 2 × 2 × 2 × 3 × 3 × 5. We can gather those factors up and write the factorization as 2⁴ · 3² · 5. In general, we can always write an integer n > 1 as a unique product of the form p₁^e₁ p₂^e₂ … pₖ^eₖ.

To get the gcd, we go prime-by-prime through the two representations, always taking the lesser of the two amounts. For instance, both 168 and 180 have a factor of 2: 168 has 2³ and 180 has 2², and so we use the lesser factor, 2², in the gcd. Moving on to the factor 3, 168 has 3¹ and 180 has 3², so we take 3¹. The next factors are 5 and 7, but they are not common to both 168 and 180, so we ignore them. We end up with gcd(168, 180) = 2² · 3 = 12.

The lcm is done similarly, except that we always take the larger amount. We get lcm(168, 180) = 2³ · 3² · 5 · 7 = 2520.
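The prime-by-prime procedure can be sketched with Python's `collections.Counter`, whose `&` and `|` operators take the minimum and maximum of matching counts. The helper names `factorize` and `product` are illustrative assumptions, and the factoring is done by plain trial division, which is only practical for small numbers.

```python
from collections import Counter

def factorize(n):
    """Prime factorization of n > 1 as a Counter {prime: exponent}."""
    factors = Counter()
    d = 2
    while d * d <= n:
        while n % d == 0:
            factors[d] += 1
            n //= d
        d += 1
    if n > 1:               # whatever is left over is itself prime
        factors[n] += 1
    return factors

def product(factors):
    """Multiply the prime powers back together."""
    result = 1
    for p, e in factors.items():
        result *= p ** e
    return result

fa, fb = factorize(168), factorize(180)
print(product(fa & fb))  # gcd, lesser exponents: 12
print(product(fa | fb))  # lcm, larger exponents: 2520
```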

Using the prime factorization to find the gcd and lcm is fast if the factorization is available. However, finding the prime factorization is a slow process for large numbers. The Euclidean algorithm is orders of magnitude faster. (A little more formally, the number of steps in the Euclidean algorithm grows linearly with the number of digits in the numbers, whereas the running times of the fastest known factoring algorithms grow faster than any polynomial in the number of digits.)

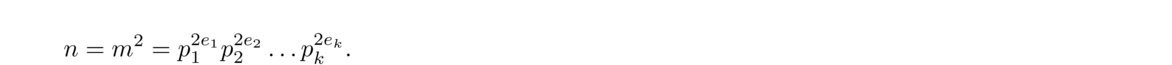

We can write n = m² for some m and assume m has the prime factorization m = p₁^e₁ p₂^e₂ … pₖ^eₖ. Then

Since a ∣ c, every term, pᵢ^eᵢ, in the factorization of a occurs in the factorization of c. Similarly, since b ∣ c, every term in the factorization of b occurs in the factorization of c. Since gcd(a, b) = 1, the primes in the factorization of a must be different from the primes in the factorization of b. Thus every term in the factorization of ab occurs in the factorization of c. So ab ∣ c.

An alternate way to do the above proof would be to assume that there were only finitely many primes and to use the process above to derive a contradiction. A quick web search will turn up dozens of other interesting proofs of the infinitude of primes.

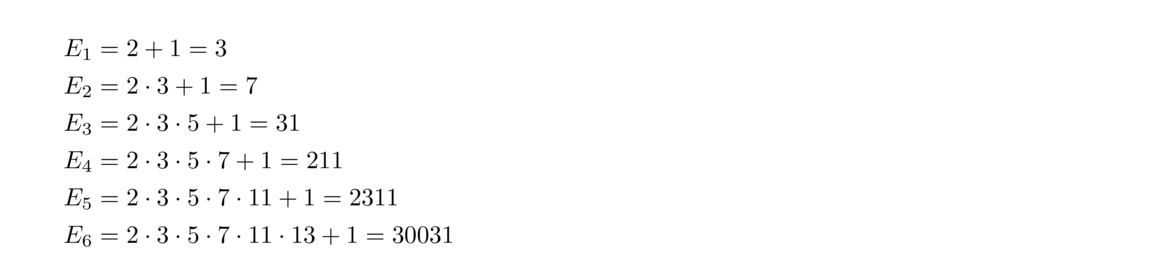

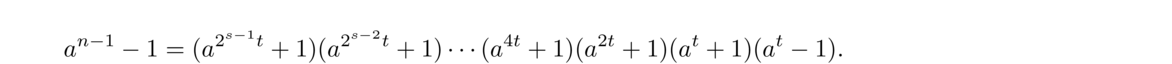

It is worth noting that the integer P in the proof above need not be prime itself. It only needs to be divisible by a new prime. Numbers of the form given in the proof above are sometimes called Euclid numbers (each is one more than a primorial, a product of the first several primes). The first few are

One of the simplest ways to check if a number is prime is trial division. Just check to see if it is divisible by any of the integers 2, 3, 4, etc.

When considering divisors of n, they come in pairs, one less than or equal to √n and the other greater than or equal to √n. For instance, if n = 30, we have √30 ≈ 5.48 and we can write 30 as 2 × 15, 3 × 10, and 5 × 6, with 2, 3, and 5 less than √30 and 6, 10, 15 greater than √30. So, in general, we can stop checking for divisors at √n.

This process can be made more efficient by just checking for divisibility by 2 and by odd numbers, or better yet, checking for divisibility only by primes (provided the number is small or we have a list of primes). For example, to check if 617 is prime, we have √617 ≈ 24.84 and we check to see if it is divisible by 2, 3, 5, 7, 11, 13, 17, 19, and 23. It is not divisible by any of those, so it is prime.
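Here is a minimal sketch of trial division using the "2 and then odd numbers" shortcut and stopping at √n. The function name `is_prime` is an illustrative choice.

```python
from math import isqrt

def is_prime(n):
    """Trial division: test 2 and then odd candidates up to sqrt(n)."""
    if n < 2:
        return False
    if n % 2 == 0:
        return n == 2
    for d in range(3, isqrt(n) + 1, 2):
        if n % d == 0:
            return False
    return True

print(is_prime(617))  # True
print(is_prime(621))  # False, since 621 = 23 * 27
```

Using `isqrt` keeps everything in integer arithmetic, which avoids rounding problems with floating-point square roots for large n.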

This approach is reasonably fast for small numbers, but for checking the primality of larger numbers, like ones with several hundred digits, there are faster techniques, which we will see later.

To find all the primes less than an integer n, we can use a technique called the sieve of Eratosthenes. Start by listing the integers from 2 to n and cross out all the multiples of 2 after 2. Then cross out all the multiples of 3 after 3. Then cross out all the multiples of 5 after 5. Note that we don't have to cross out the multiples of 4 since they have all already been crossed out as they are multiples of 2. We keep going, crossing out multiples of 7, 11, 13, etc., until we get to √n. At the end, only the primes will be left. Here is what we would get for n = 100:

Here is how we might code it in Python. We create a list of zeroes and ones, with a zero at index i meaning i is not prime and a one meaning i is prime. The list starts off initially with all ones and we gradually cross off all the composites.
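A minimal sketch matching that description is given below. It is not necessarily the program the timing quoted below refers to, and the function name `sieve` is an illustrative choice.

```python
def sieve(n):
    """Return a list of all primes less than or equal to n."""
    if n < 2:
        return []
    flags = [1] * (n + 1)        # flags[i] == 1 means i has not been crossed out
    flags[0] = flags[1] = 0
    p = 2
    while p * p <= n:            # stop once p passes sqrt(n)
        if flags[p]:
            # cross out the multiples of p after p
            for multiple in range(2 * p, n + 1, p):
                flags[multiple] = 0
        p += 1
    return [i for i in range(n + 1) if flags[i]]

print(sieve(30))        # [2, 3, 5, 7, 11, 13, 17, 19, 23, 29]
print(len(sieve(100)))  # 25
```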

The sieve works relatively well for finding small primes. The code above, inefficient though it may be, takes 55 seconds to find all the primes less than 10⁸ on my laptop.

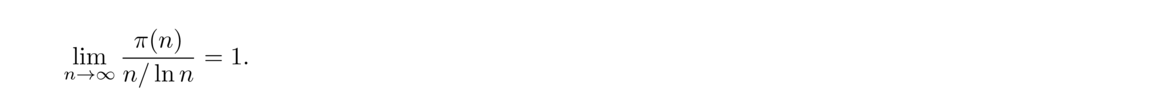

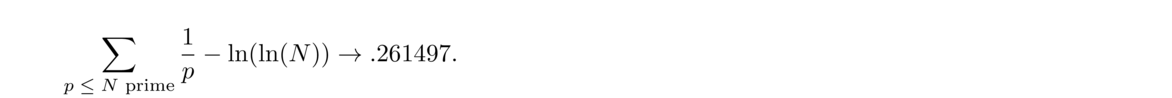

A natural question to ask is how common prime numbers are. An answer to this question and some other questions is given by the prime number theorem, which states that the number of primes less than n is roughly n/ln n. Formally, we can state it as follows:

The theorem tells us that roughly 100/ln n percent of the numbers less than n are prime. For n = 1,000,000, the theorem tells us that roughly 7-8% of the numbers less than 1,000,000 are prime, and that around n = 1,000,000 the average gap between primes is roughly ln(1000000) ≈ 14.
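To get a feel for how good the estimate is, we can count primes directly and compare with n/ln n. The following sketch uses a compact sieve, and the function name `prime_count` is an illustrative assumption.

```python
from math import isqrt, log

def prime_count(n):
    """Count the primes less than or equal to n (n >= 2) with a simple sieve."""
    flags = bytearray([1]) * (n + 1)
    flags[0] = flags[1] = 0
    for p in range(2, isqrt(n) + 1):
        if flags[p]:
            # zero out p*p, p*p + p, ... in one slice assignment
            flags[p * p :: p] = bytearray(len(range(p * p, n + 1, p)))
    return sum(flags)

# columns: n, actual count, estimate n / ln n, percentage of primes up to n
for n in (10**3, 10**4, 10**5, 10**6):
    count = prime_count(n)
    print(n, count, round(n / log(n)), f"{100 * count / n:.1f}%")
```

For n = 1,000,000 the actual count is 78,498, or about 7.8% of the numbers up to n. The estimate n/ln n comes out somewhat lower than the true count at these sizes, though the ratio of the two slowly approaches 1.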

The prime number theorem is not easy to prove. The first proofs used sophisticated techniques from complex analysis. There is a proof using only elementary methods, but it is fairly complicated.

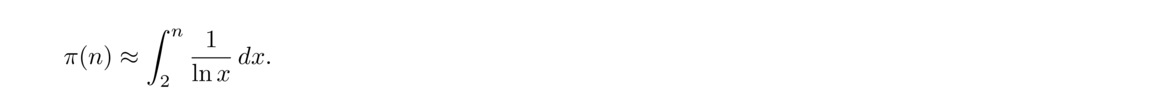

The prime number theorem tells us that the probability an integer near x is prime is roughly 1/ln x. Summing up all these probabilities for all real x from 2 through n gives us another estimate for the number of primes less than n. Such a sum is a continuous sum (an integral), and we get the following estimate for π(n):

π(n) ≈ Li(n) = ∫₂ⁿ dx/ln x.

An interesting note about this is that for n up through at least 10¹⁴ it has been shown that π(n) < Li(n). But it turns out not to be true for all n. In fact, it was proved in 1914 that Li(n) – π(n) changes sign infinitely often. This illustrates an important lesson in number theory: just because something is true for the first trillion (or more) integers, does not mean it is true in general.

A 1933 follow-up to that proof is interesting in that it included one of the largest numbers ever to be used in a proof, namely that π(n) > Li(n) for some n less than e^(e^(e^79)). More recent research has brought the bound down to e^728.

Twin primes are pairs (p, p+2) with both p and p+2 prime. Examples include (5, 7), (11, 13) and (41, 43).

One of the most famous open problems in math is the twin primes conjecture, which asks if there are infinitely many twin primes. Most mathematicians think the conjecture is true, though it is considered to be a very difficult problem.

Referring back to the prime number theorem, we know that the probability an integer near x is prime is roughly 1/ln x. Assuming independence, the probability that both x and x+2 would be prime is then 1/(ln x)², and summing up these probabilities gives ∫₂ⁿ dx/(ln x)² as an estimate for the number of twin prime pairs less than n. Independence is not quite a valid assumption here, but it is not too far off. It is currently conjectured that the number of twin prime pairs less than n is approximately 2C₂ ∫₂ⁿ dx/(ln x)², where C₂ ≈ 0.66016 is something called the twin prime constant. (See Section 1.2 of Prime Numbers: A Computational Perspective, 2nd edition by Crandall and Pomerance for more on this approach.)

So it seems reasonable that there are infinitely many twin primes, but it has turned out to be very difficult to prove. The best result so far is that there are infinitely many pairs (p, p+2) where p is prime and p+2 is either prime or the product of two primes, proved by Chen Jingrun in 1973.

There are a number of analogous conjectures. For instance, it is conjectured that there are infinitely many pairs of primes of the form (p, p+4) or infinitely many triples of the form (p, p+2, p+6). Recent work has shown that there are infinitely many primes p such that one of p+2, p+4, … p+246 is also prime.

It is interesting to look at the gaps between consecutive primes. Here are the gaps between the first 100 primes, from 2 to 541:

1, 2, 2, 4, 2, 4, 2, 4, 6, 2, 6, 4, 2, 4, 6, 6, 2, 6, 4, 2, 6, 4, 6, 8, 4, 2, 4, 2, 4, 14, 4, 6, 2, 10, 2, 6, 6, 4, 6, 6, 2, 10, 2, 4, 2, 12, 12, 4, 2, 4, 6, 2, 10, 6, 6, 6, 2, 6, 4, 2, 10, 14, 4, 2, 4, 14, 6, 10, 2, 4, 6, 8, 6, 6, 4, 6, 8, 4, 8, 10, 2, 10, 2, 6, 4, 6, 8, 4, 2, 4, 12, 8, 4, 8, 4, 6, 12, 2, 18

We see gaps of 2 quite often. These correspond to twin primes. There are a few larger gaps, like a gap of 14 from 113 to 127 and a gap of 18 from 523 to 541. It is not too hard to find gaps that are arbitrarily large. Just find an integer n divisible by 2, 3, 4, 5, …, k and then n+2, n+3, … n+k will all be composite.
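The construction of large gaps can be tried out directly. One convenient choice (an assumption here, since any n divisible by 2 through k works) is n = k!. The sketch below builds the run n+2, …, n+k and finds a nontrivial divisor of each entry. The function names are illustrative.

```python
from math import factorial

def composite_run(k):
    """Return the k - 1 consecutive integers n + 2, ..., n + k with n = k!."""
    n = factorial(k)   # divisible by every integer from 2 to k
    return [n + j for j in range(2, k + 1)]

def smallest_factor(m):
    """Smallest divisor of m that is greater than 1."""
    d = 2
    while m % d != 0:
        d += 1
    return d

for m in composite_run(6):
    print(m, "is divisible by", smallest_factor(m))
```

Here n + j is divisible by j for each j from 2 to k, since j divides both n and j. The numbers produced this way are usually much larger than the first place where a gap of that size actually occurs.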

The prime number theorem tells us that the average gap between a prime p and the next prime is approximately ln p. Thus for p near 1,000,000, we would expect an average gap of about 14, and for p near 10²⁰⁰, we would expect an average gap of around 460.

One important result relating to prime gaps is Bertrand's postulate.

For example, for n = 1000, we are guaranteed that there is a prime between 1000 and 2000. This is not news, but it is useful in some cases to have an interval on which you are guaranteed to have a prime, even if that interval is rather large.

The proof of Bertrand's postulate is actually not too difficult, but we won't cover it here. There are a number of improvements on Bertrand's postulate. For instance, in 1952 it was proved that for n ≥ 25 there exists a prime between n and $(1+\frac{1}{5})n$. The range can be narrowed further for larger n.**In general, it has been proved that for any ε > 0 there is an integer N such that for n ≥ N, there is always a prime between n and (1+ε)n. In fact, as n → ∞, the number of primes in that range approaches ∞ as well. In math, there are many results concerning how things behave as n approaches ∞. Results of this sort are called asymptotic results.

A related, but unsolved, question is whether there is always a prime between consecutive perfect squares, $n^2$ and $(n+1)^2$.

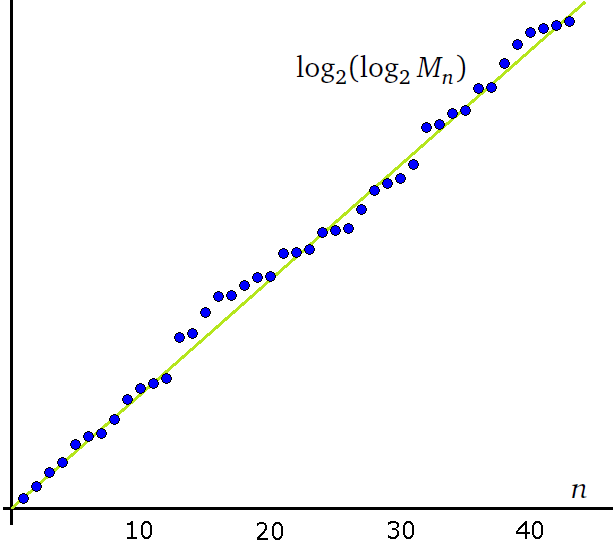

A popular sport among math enthusiasts is finding large prime numbers. The largest primes known are all Mersenne primes, which are primes of the form $2^n-1$. There is a relatively fast algorithm for checking if a number of the form $2^n-1$ is prime, and that is why people searching for large primes use these numbers. As of early 2016, the largest known prime is $2^{74207281}-1$, a number over 22 million digits long.

Most of the largest primes found recently were found by the Great Internet Mersenne Prime Search (or GIMPS), where volunteers from around the world donate their spare CPU cycles towards checking for primes. Finding large primes usually involves either a combination of sophisticated algorithms and finely-tuned hardware or a distributed computer search like GIMPS.

People also look for large primes of special forms. For instance, as of early 2016, the largest known twin primes are $3756801695685 \cdot 2^{666669} \pm 1$, about 200,000 digits long. See http://primes.utm.edu/largest.html for a nice list of large primes.

One of the most remarkable polynomials in all of math is $p(n) = n^2+n+41$. It has the property that for each n = 0, 1, …, 39, p(n) is prime. However, p(40) and p(41) are not prime, as $p(40) = 41^2$ and $p(41) = 41^2+41+41$ is clearly divisible by 41. Still, the polynomial keeps on generating primes at a pretty high rate, as p(n) is prime for 34 of the next 39 values of n. In total, 156 of the first 200 values of p(n) are prime, and 581 of the first 1000.

There are a number of other polynomials that are good at generating primes, like $n^2+n+11$ and $n^2+n+17$, though neither of these is quite as good as $n^2+n+41$. The formula $n^2-79n+1601$ generates primes for each integer from n = 0 through n = 79. It is actually a modification of $n^2+n+41$: namely, $n^2-79n+1601 = (n-40)^2+(n-40)+41$. The 80 primes are the same as the 40 primes from $n^2+n+41$, each appearing twice.
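These claims are easy to test by machine. The following is a minimal sketch; the function names `p` and `q` and the trial-division helper are choices made for this example.

```python
def is_prime(n):
    """Trial division; adequate for the small numbers used here."""
    if n < 2:
        return False
    d = 2
    while d * d <= n:
        if n % d == 0:
            return False
        d += 1
    return True


def p(n):
    """Euler's polynomial n^2 + n + 41."""
    return n * n + n + 41


def q(n):
    """The shifted polynomial n^2 - 79n + 1601."""
    return n * n - 79 * n + 1601


# p(n) is prime for n = 0, ..., 39, and p(40) is the square of 41.
print(all(is_prime(p(n)) for n in range(40)))
print(p(40) == 41 ** 2, p(41) % 41 == 0)

# q(n) is p(n - 40), so it is prime for n = 0, ..., 79.
print(all(q(n) == p(n - 40) for n in range(80)))
print(all(is_prime(q(n)) for n in range(80)))

# How many of the first 200 and first 1000 values of p(n) are prime.
print(sum(is_prime(p(n)) for n in range(200)))
print(sum(is_prime(p(n)) for n in range(1000)))
```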

The site http://mathworld.wolfram.com/Prime-GeneratingPolynomial.html has a nice list of some other prime-generating polynomials.

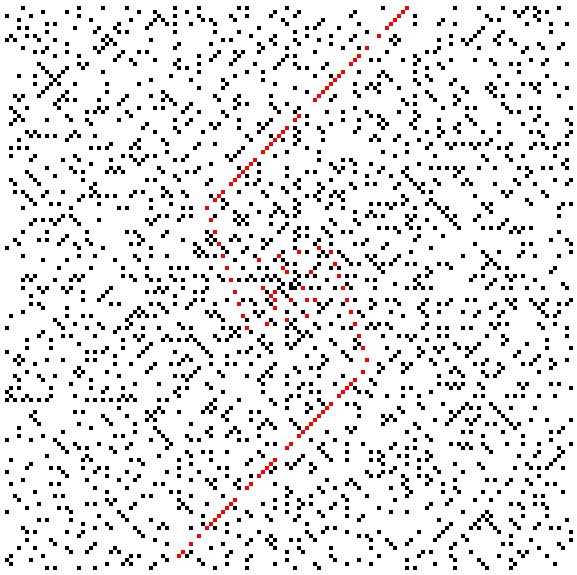

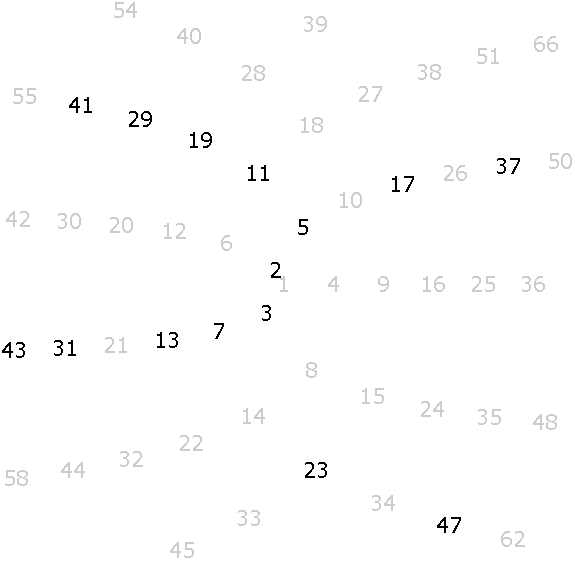

There is a nice way to visualize prime numbers known as a prime spiral or Ulam spiral. Start with 1 in the middle, and spiral out from there, like in the figure below on the left. Then highlight the primes, like on the right.

If we expand the view to the first 20,000 primes, we get the figure below.

Notice the primes tend to cluster along certain diagonal lines. The dots highlighted in red correspond to Euler's polynomial, $n^2+n+41$.

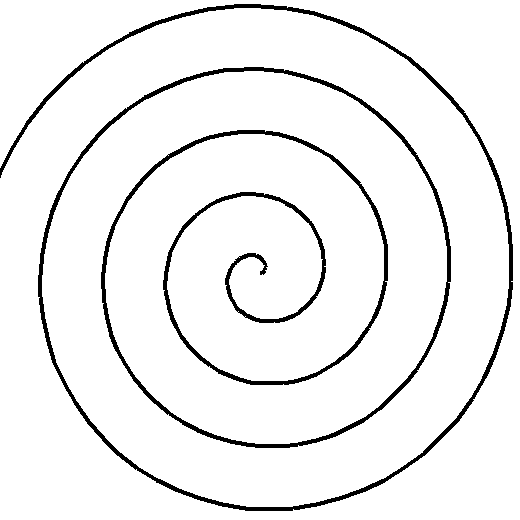

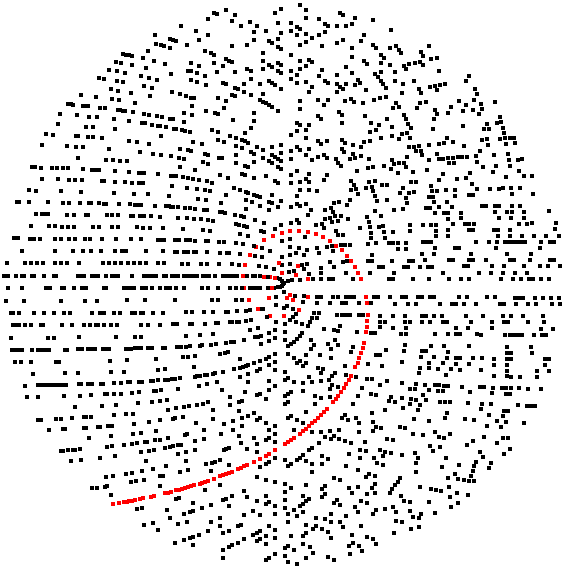

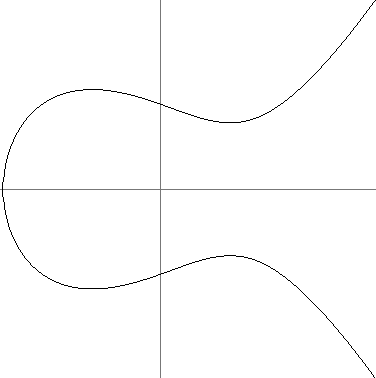

An interesting twist on this is something called the Sacks spiral. Instead of the rectangular spiral we used above, we instead spiral along an Archimedean spiral, where both the angular and radial velocity are constant, with those constants chosen so that the perfect squares lie along the horizontal axis. Here is a typical Archimedean spiral and the Sacks spiral for the first few primes:

And here it is for a wider range. Euler's polynomial, $n^2+n+41$, is highlighted in red.

It is not too difficult to show that there is no nonconstant polynomial that can give only primes. Just plug in the constant term. For instance, if $p(x) = a_n x^n + a_{n-1} x^{n-1} + \dots + a_1 x + a_0$, then every term of $p(a_0)$ is divisible by $a_0$. Thus $p(a_0)$ will be composite, except possibly when $a_0 = 0$ or $a_0 = \pm 1$. If $a_0 = \pm 1$, then p(0) is not prime, and if $a_0 = 0$, factor out an x and try plugging in the new constant term.

An interesting open problem is whether or not there are infinitely many primes of the form $n^2+1$.

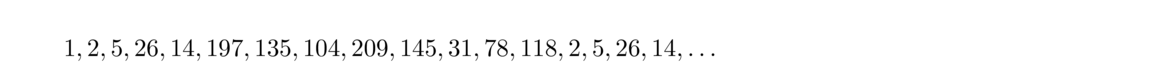

There are some other interesting formulas for generating primes. For instance, it turns out that there exists a real number r such that $\lfloor r^{3^n} \rfloor$ is prime for any positive integer n. The exact value of r is unknown, but it is thought to be approximately 1.30637788. Another example is the recurrence $x_n = x_{n-1} + \gcd(n, x_{n-1})$, $x_1 = 7$. The difference between consecutive terms is always either 1 or a prime.
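The recurrence is simple to run. This sketch (with an invented function name, `recurrence_differences`) collects the differences between consecutive terms and checks that each is 1 or a prime.

```python
from math import gcd


def is_prime(n):
    """Trial division; adequate for the small numbers used here."""
    if n < 2:
        return False
    d = 2
    while d * d <= n:
        if n % d == 0:
            return False
        d += 1
    return True


def recurrence_differences(terms):
    """Differences x_n - x_(n-1) for n = 2, ..., terms, starting from x_1 = 7."""
    x = 7
    diffs = []
    for n in range(2, terms + 1):
        step = gcd(n, x)   # x_n - x_(n-1) = gcd(n, x_(n-1))
        diffs.append(step)
        x += step
    return diffs


diffs = recurrence_differences(2000)
print(diffs[:12])
print(sorted(set(diffs)))   # the distinct differences seen so far
print(all(d == 1 or is_prime(d) for d in diffs))
```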

One interesting result is Dirichlet's theorem, stated below:

For example, with a = 4 and b = 3, the theorem tells us that there are infinitely many primes of the form 4k+3. If a = 100 and b = 1, the theorem tells us there are infinitely many primes of the form 100k+1 (i.e., primes that end in 01). The first few are 101, 401, 601, 701, 1201, …. Like the prime number theorem, Dirichlet's theorem is difficult to prove, relying on techniques from analytic number theory.

A related theorem is the Green-Tao theorem, proved in 2004. It concerns arithmetic progressions of primes, sequences of the form p, p+a, p+2a, … p+(n–1)a, all of which are prime. In other words, we are looking at sequences of equally spaced primes, like (3, 5, 7) or (5, 11, 17, 23, 29). The Green-Tao theorem states that it is possible to find prime arithmetic progressions of any length. The proof, like many in math, is an existence proof. It shows that these progressions exist but doesn't tell how to find them. According to the Wikipedia page on the Green-Tao theorem, as of 2010 the longest known arithmetic progression was 25 terms long, starting at the integer 43142746595714191.

In the 1600s, Pierre de Fermat studied primes of the form $2^{2^n}+1$. Numbers of this form are now called Fermat numbers. The first one is $2^{2^0}+1 = 3$, and the next few are 5, 17, 257, and 65537. These are all prime, and Fermat conjectured that every Fermat number is prime. However, the next one, $F_5 = 4294967297$, turns out to have a factor of 641.
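A quick check of these values in Python, as a sketch (the function name `fermat` is chosen for this example):

```python
def fermat(n):
    """The n-th Fermat number, 2^(2^n) + 1."""
    return 2 ** (2 ** n) + 1


def is_prime(n):
    """Trial division; fast enough up to 65537."""
    if n < 2:
        return False
    d = 2
    while d * d <= n:
        if n % d == 0:
            return False
        d += 1
    return True


print([fermat(n) for n in range(5)])                 # 3, 5, 17, 257, 65537
print(all(is_prime(fermat(n)) for n in range(5)))    # the first five are prime
print(fermat(5), fermat(5) % 641, fermat(5) // 641)  # 641 divides the next one
```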

The remarkable fact is that there are no other Fermat numbers that are known to be prime. In fact, it is an open question as to whether any other Fermat numbers are prime.

Considerable effort has gone into trying to factor Fermat numbers. This is difficult because of the sheer size of the numbers. As of early 2014, $F_5$ through $F_{11}$ have been completely factored. Partial factorizations have been found for many other Fermat numbers. The smallest Fermat number which is not known to be composite is $F_{33}$. See www.prothsearch.net/fermat.html for a comprehensive list of results.

A Sophie Germain prime is a prime p such that 2p+1 is also prime. For instance, 11 is a Sophie Germain prime because 2 · 11 + 1 = 23 is also prime. The first few Sophie Germain primes are 2, 3, 5, 11, 23, 29, 41, 53, 83, 89. It is not currently known if there are infinitely many, though it is thought that there are.**In fact, it is suspected that there are about as many Sophie Germain primes as there are twin prime pairs.

They are named for the 19th century mathematician Sophie Germain, who used them in her work on Fermat's Last Theorem.**Fermat's Last Theorem states that there are no positive integer solutions to $x^n+y^n = z^n$ if n > 2. It was one of the most famous problems in math for a few hundred years.

If p is a Sophie Germain prime, then 2p+1 is also prime by definition, and is called a safe prime. Safe primes are important in modern cryptography. See Section 4.1.

It is also interesting to create chains where p, 2p+1, 2(2p+1)+1, etc. are all prime. Such chains are called Cunningham chains. One such chain is 2, 5, 11, 23, 47. It can't be extended any further as 2 · 47 +1 = 95 is not prime. It is thought that there are infinitely many chains of all lengths, but no one knows for sure. According to Wikipedia, the longest chain so far found is 17 numbers long, starting at 2,759,832,934,171,386,593,519.
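Both ideas can be explored with a few lines of Python. This is only a sketch; `is_sophie_germain` and `cunningham_chain` are names invented here.

```python
def is_prime(n):
    """Trial division; adequate for the small numbers used here."""
    if n < 2:
        return False
    d = 2
    while d * d <= n:
        if n % d == 0:
            return False
        d += 1
    return True


def is_sophie_germain(p):
    """True if p and 2p + 1 are both prime."""
    return is_prime(p) and is_prime(2 * p + 1)


def cunningham_chain(p):
    """Follow p, 2p+1, 2(2p+1)+1, ... for as long as the terms stay prime."""
    chain = []
    while is_prime(p):
        chain.append(p)
        p = 2 * p + 1
    return chain


print([p for p in range(100) if is_sophie_germain(p)])
print(cunningham_chain(2))   # stops because 2 * 47 + 1 = 95 is composite
```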

Goldbach's conjecture is one of the most famous open problems in math. It simply states that any even number greater than two is the sum of two primes. For instance, we can write 4 = 2+2, 6 = 3+3, 8 = 5+3, and 10 = 5+5 or 7+3.

Goldbach's conjecture has been verified numerically by computer searches up through about $10^{18}$. The number of ways to write an even number as a sum of two primes seems to increase quite rapidly. For instance, numbers between 2 and 100 have an average of about four ways to be written as sums of primes. This increases to 18 for numbers between 100 and 1000, 93 for numbers between 1000 and 10,000, and 554 for numbers between 10,000 and 100,000. Moreover, in the range from 10,000 to 100,000 no number can be written in fewer than 92 ways.
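Counts like these can be reproduced on a small scale. The sketch below counts unordered pairs, so 5+5 and 7+3 are the two ways for 10. The helper names and the limit of 2000 are choices made for this example, and the exact averages depend on how the ranges are delimited.

```python
def sieve(limit):
    """Sieve of Eratosthenes: flags[k] is True exactly when k is prime (limit >= 1)."""
    flags = [True] * (limit + 1)
    flags[0] = flags[1] = False
    for d in range(2, int(limit ** 0.5) + 1):
        if flags[d]:
            for multiple in range(d * d, limit + 1, d):
                flags[multiple] = False
    return flags


def goldbach_counts(limit):
    """Map each even n in [4, limit] to the number of unordered prime pairs summing to n."""
    prime = sieve(limit)
    counts = {}
    for n in range(4, limit + 1, 2):
        counts[n] = sum(1 for p in range(2, n // 2 + 1)
                        if prime[p] and prime[n - p])
    return counts


counts = goldbach_counts(2000)
print([counts[n] for n in (4, 6, 8, 10)])
print(min(counts.values()))   # at least 1 if the conjecture holds on this range
small = [counts[n] for n in range(4, 101, 2)]
print(sum(small) / len(small))   # average number of ways for even n up to 100
```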

Here is a graph showing how the number of possible ways to write a number as a sum of two primes increases with n. The horizontal axis runs from n = 4 to n = 100,000 and the vertical axis runs to about 2000.

Despite the overwhelming numerical evidence, the Goldbach conjecture is still far from being proved. However, there are a number of partial results. For instance, in the early 1970s Chen Jingrun proved that every sufficiently large even number can be written as a sum p+q, where p is prime and q is either prime or a product of two primes.

There is also the weak Goldbach conjecture that states that every odd number greater than 7 is the sum of three primes. In the 1930s, I.M. Vinogradov proved that it was true for all sufficiently large integers. It seems that the weak Goldbach conjecture may have been proved in 2013 by Harald Helfgott, though as of this writing, the proof has not been fully checked. Like Vinogradov's result, Helfgott proved the result true for all sufficiently large integers, but in this case “sufficiently large” was small enough that everything less than it could be checked by computer.

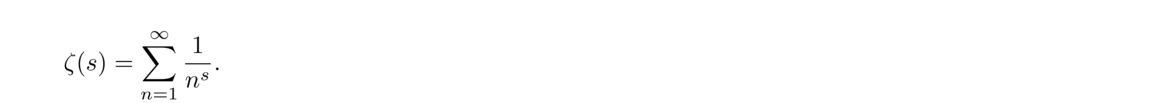

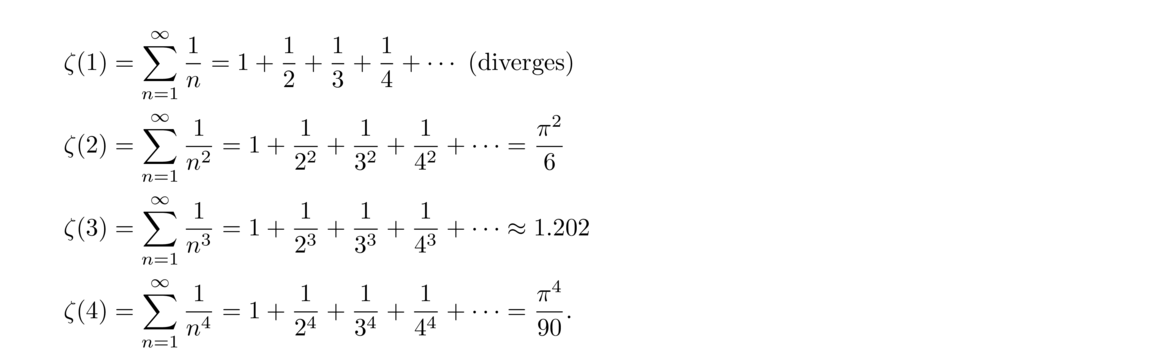

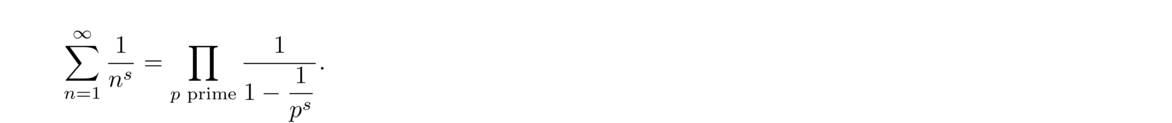

One of the most important functions in analytic number theory is the zeta function,

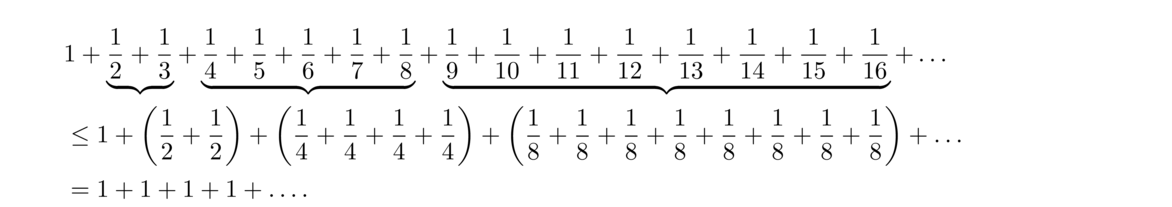

It has been known since at least the 14th century that the harmonic series is divergent. A particularly nice proof of this fact is shown below:

In general, we have that

$\sum_{n=1}^{N} \frac{1}{n} - \ln N$ converges to γ ≈ 0.5772156649, the Euler-Mascheroni constant. This is one of the most famous constants in math, showing up in a number of places in higher mathematics. It is actually not known whether γ is rational or irrational.

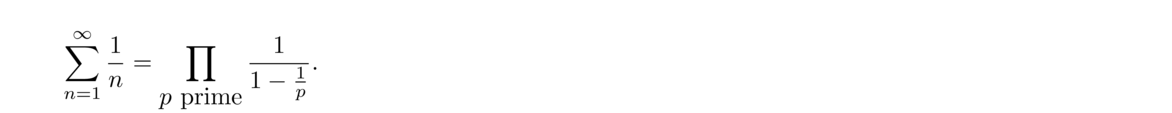

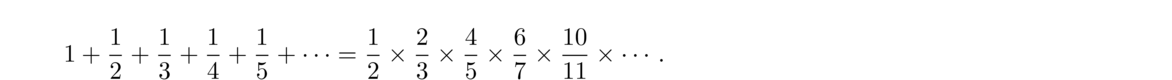

Euler also discovered a beautiful relationship between the harmonic series and prime numbers:

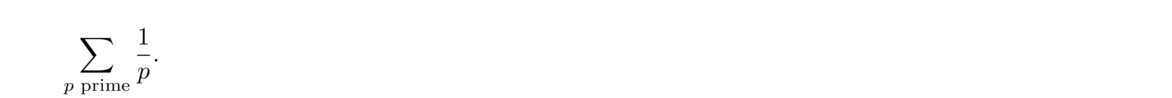

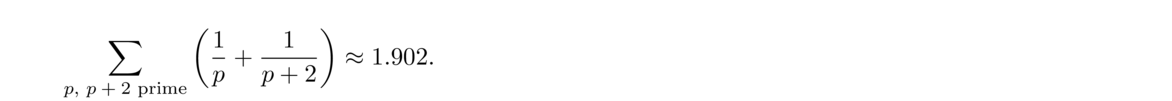

It is interesting to note that a similar sum involving twin primes is actually convergent, namely

Perhaps the most famous unsolved problem in mathematics is the Riemann hypothesis, first stated by Bernhard Riemann in 1859. It is a statement involving the zeta function. A process called analytic continuation is used to find a function defined for most real and complex values that agrees with ζ(n) wherever ζ(n) is defined. This new function is called the Riemann zeta function.

The Riemann zeta function has zeros at –2, –4, –6, …. These are called its trivial zeros. It has many other complex zeros that have real part 1/2. The line with real part 1/2 is called the critical line. The Riemann hypothesis states that all nontrivial zeros of the Riemann zeta function lie on the critical line.

It might not be clear at this point what the Riemann hypothesis has to do with primes. Here is the connection, first shown by Euler:

If true, the Riemann hypothesis would imply that the prime numbers are distributed fairly regularly, whereas if it were false, then it would mean that prime numbers are distributed considerably more wildly. There are a number of potential theorems in number theory that start “If the Riemann hypothesis is true, then….” So a solution to the Riemann hypothesis would tell us a lot about primes and other things in number theory.

There have been a variety of different approaches to proving the Riemann hypothesis, though none have thus far been successful. Many mathematicians believe it is likely true, but there are some that are not so sure. Numerical computations have shown that the first $10^{13}$ nontrivial zeros all lie on the critical line.

The Riemann hypothesis is one of the Clay Mathematics Institute's seven Millennium Prize problems, with $1,000,000 offered for its solution.

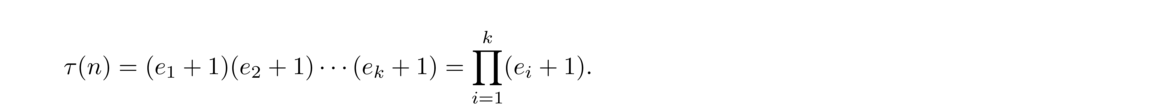

There are a few functions that show up a lot in number theory.

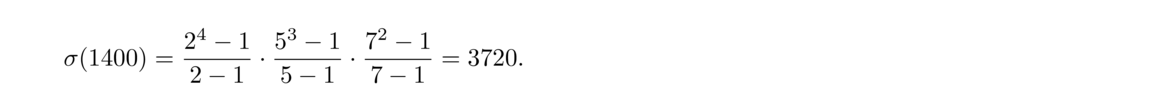

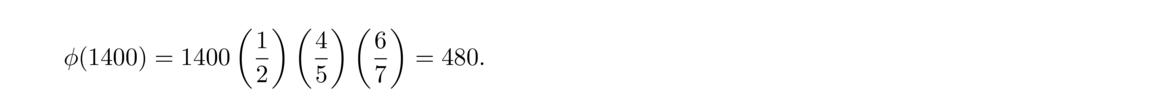

For example, n = 12 has six divisors: 1, 2, 3, 4, 6, and 12. Thus τ(12) = 6 and σ(12) = 1+2+3+4+6+12 = 28. The only positive integers less than 12 that are relatively prime to 12 are 1, 5, 7, and 11, so φ(12) = 4.

As another example, suppose p is prime. Then the divisors of p are just 1 and p, so τ(p) = 2, σ(p) = p+1, and every positive integer less than p is relatively prime to it, so φ(p) = p–1.
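The three functions can be computed directly from the descriptions used in these examples (number of divisors, sum of divisors, and count of integers relatively prime to n). This brute-force sketch is meant for small n only; the function names are spelled-out versions of τ, σ, and φ chosen for this example.

```python
from math import gcd


def divisors(n):
    """All positive divisors of n, found by brute force."""
    return [d for d in range(1, n + 1) if n % d == 0]


def tau(n):
    """Number of positive divisors of n."""
    return len(divisors(n))


def sigma(n):
    """Sum of the positive divisors of n."""
    return sum(divisors(n))


def phi(n):
    """Number of integers in 1..n that are relatively prime to n."""
    return sum(1 for a in range(1, n + 1) if gcd(a, n) == 1)


print(divisors(12), tau(12), sigma(12), phi(12))
print(tau(13), sigma(13), phi(13))   # for a prime p: 2, p + 1, p - 1
```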

The most important of the three functions is φ(n), called the totient function or simply the Euler phi function.

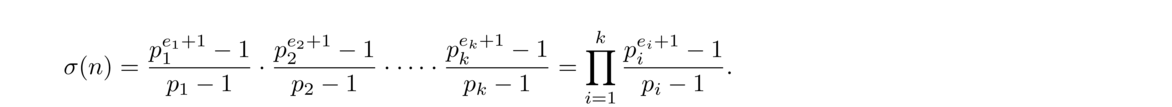

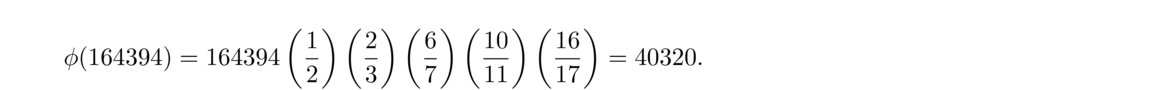

This reasoning works in general. Given the prime factorization $n = p_1^{e_1} p_2^{e_2} \cdots p_k^{e_k}$, we have

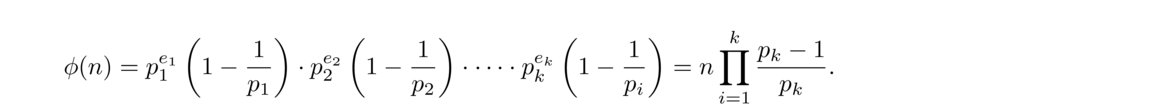

Finding a formula for φ(n) is considerably more involved. First, if p is prime, then φ(p) = p–1 since every positive integer less than p is relatively prime to p.

Next, consider $\varphi(p^i)$, where p is prime. The positive integers up to $p^i$ that are not relatively prime to $p^i$ are precisely the multiples of p. There are $p^{i-1}$ such multiples, namely $p, 2p, 3p, \dots, p^{i-1} \cdot p$. So $\varphi(p^i) = p^i - p^{i-1}$, which we can rewrite as $p^i\left(1-\frac{1}{p}\right)$.

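To extend this to the factorization $n = p_1^{e_1} p_2^{e_2} \cdots p_k^{e_k}$, we will show (in a minute) that φ(mn) = φ(m)φ(n) whenever m and n are relatively prime. Since the terms $p_i^{e_i}$ are pairwise relatively prime, this will give us $\varphi(n) = n\left(1-\frac{1}{p_1}\right)\left(1-\frac{1}{p_2}\right)\cdots\left(1-\frac{1}{p_k}\right)$.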

We now show that φ(mn) = φ(m)φ(n) whenever gcd(m, n) = 1.

First, consider an example: φ(30) = 8. We have 30 = 5 × 6, φ(5) = 4, and φ(6) = 2. See the figure below. Notice there are 2 columns with 4 integers relatively prime to 30 in each.

As another example, consider φ(33) = 20. We have 33 = 3 × 11, φ(3) = 2, and φ(11) = 10. Notice in the figure below there are 10 columns with 2 integers relatively prime to 33 in each.

This sort of thing happens in general. Suppose we have relatively prime integers m and n, and consider φ(nm).

We claim that there are φ(m) columns that each contain φ(n) integers relatively prime to nm. This tells us that φ(nm) = φ(n)φ(m). The following three steps are enough to prove the claim:

This is true because all the entries in the column are of the form x+mk. Since gcd(x, m) ≠ 1, some integer d > 1 divides both x and m and hence x+mk as well.

To show this, suppose two entries in the column were congruent modulo n, say x+mk ≡ x+mj (mod n) for some k and j. Then mk ≡ mj (mod n) and since gcd(m, n) = 1, we can cancel to get k ≡ j (mod n), which is to say the entries must have come from the same row. In other words, entries in the column from different rows can't be the same.

To do this, suppose s ≡ t (mod n) and s is relatively prime to n. We want to show that t is relatively prime to n. We can write s–t = nk and sx+ny = 1 for some integers k, x, and y. Solve the former for s and plug it into the latter to get (nk+t)x+ny = 1. Rearrange to get tx+n(kx+y) = 1 from which we get that t and n are relatively prime.

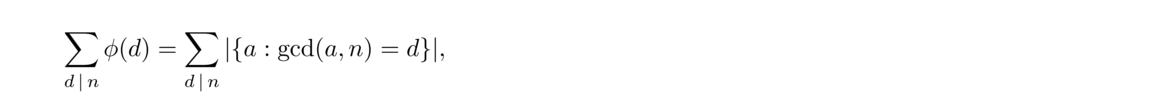

Here is an interesting result about the Euler phi function:

Recall from Section 1.7 that gcd(a, n) = d if and only if gcd(a/d, n/d) = 1. That is, if we divide a and n by their gcd, then the resulting integers have nothing in common (and the converse holds as well). So for instance, if we want to find all the integers a such that gcd(a, 10) = 2, we find all the integers whose gcd with 10/2 is 1; there are φ(5) such integers. And this works in both directions. In general then

A little earlier we showed that φ(mn) = φ(m)φ(n) provided gcd(m, n) = 1. This way of breaking up a function is important in number theory. Here is a definition of the concept:

We have the following:

We already showed φ is multiplicative, and it is easy to show τ and σ are multiplicative using the formulas we have for computing them.

The Möbius function, defined below, is important in higher number theory, though we won't use it much in this text.

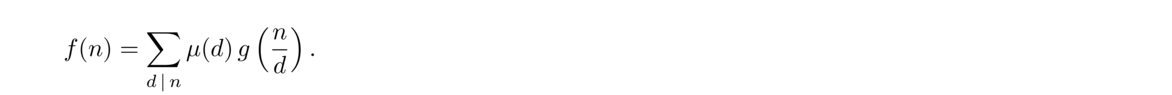

It is easy to show μ is multiplicative from its definition. The following fact, called Möbius inversion, is an important tool in analytic number theory:

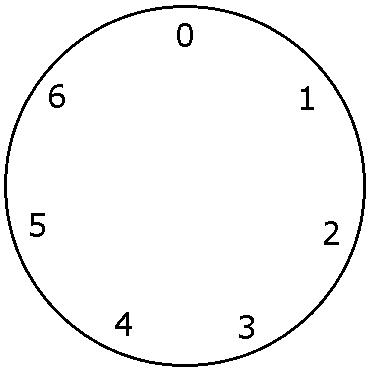

Modular arithmetic is a kind of “wrap around” arithmetic, like arithmetic with time. In 24-hour time, after 23:59, we wrap back around to the start 00:00. After the 7th day of the week (Saturday), we wrap back around to the start (Sunday). After the 365th or 366th day of the year, we wrap back around to the first day of the year. Many things in math and real-life are cyclical and a special kind of math, known as modular arithmetic, is used to model these situations.

Let's look at some examples of arithmetic modulo (mod) 7, where we use the integers 0 through 6. We can think of the integers as arranged on a circle, like below:

We have the following:

Instead of saying something like “8 is the same as 1,” we use the notation 8 ≡ 1 (mod 7). This is read as “8 is congruent to 1 mod 7.” Such an expression is called a congruence. Here is the formal definition:

It is not too hard to show that the two definitions are equivalent. We can use whichever one suits us best for a particular situation. The latter definition is useful in that it gives us an equation to work with, namely a–b = nk for some integer k. For example, 29 ≡ 15 (mod 7) since both 29 and 15 leave the same remainder when divided by 7. Equivalently, 29 ≡ 15 (mod 7) because 29–15 is divisible by 7.

Modular arithmetic is usually defined by noting that ≡ is an equivalence relation. See Section 3.6 for more on this approach. For now we will just approach things informally.

Here are a few examples to get us some practice with congruences:

Solution: Notice that 4! = 4 · 3 · 2 · 1 contains 4 · 3, so it is divisible by 12. Similarly, 5!, 6!, etc. all contain 4 · 3, so they are all divisible by 12 and hence congruent to 0 modulo 12. Thus 1!+2!+3!+…+100! ≡ 1!+2!+3! ≡ 9 (mod 12).

For example, suppose we want the last digit of $2^{1000}$. To find it, we compute $2^{1000}$ modulo 10. Notice that $2^5 \equiv 2 \pmod{10}$. Then $2^{25} = (2^5)^5 \equiv 2^5 \equiv 2 \pmod{10}$. Similarly, $2^{125} \equiv 2 \pmod{10}$, and $2^{1000} = 2^{125 \cdot 8} = (2^{125})^8 \equiv 2^8 \pmod{10}$. Since $2^8 = 256 \equiv 6 \pmod{10}$, our answer is 6.
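Python's exact integer arithmetic makes it easy to confirm both of these computations. This is just a check of the answers, not a replacement for the reasoning; the variable names are chosen for this example.

```python
from math import factorial

# Last digit of 2^1000: the three-argument pow reduces modulo 10 as it goes.
last_digit = pow(2, 1000, 10)
print(last_digit)

# The intermediate steps of the argument.
print(pow(2, 5, 10), pow(2, 25, 10), pow(2, 125, 10))

# 1! + 2! + ... + 100! modulo 12: only the first three terms contribute.
factorial_sum_mod_12 = sum(factorial(k) for k in range(1, 101)) % 12
print(factorial_sum_mod_12, (1 + 2 + 6) % 12)
```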

Modular arithmetic is like a whole new way of doing math. It's good to have a list of some of the common rules for working with it.

Modular arithmetic is related to the mod operation from Section 1.3. For instance, in arithmetic mod 7 we have 1, 8, 15, 22, … as well as -6, -13, -20, … all corresponding to the same value. Often, but not always, the most convenient value to use to represent all of them is the smallest positive integer value, in this case 1. To find that value, we use the mod operation from Section 1.3. For instance, to find the smallest positive integer that 67 is congruent to modulo 3, we can compute 67 mod 3 to get 1.

In general, we have the following:

A similar and useful rule is the following:

For instance, if a number n is of the form 3k+1, then n ≡ 1 (mod 3). And conversely, any number congruent to 1 modulo 3 is of the form 3k+1.

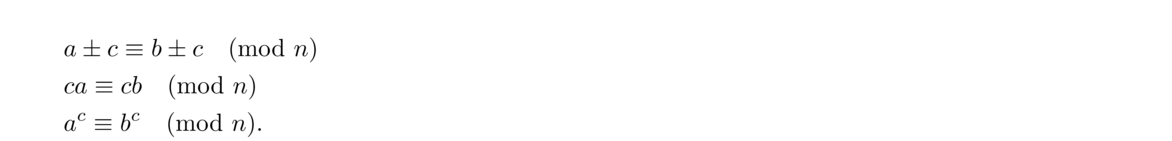

Here are a few rules for working with congruences:

In other words, adding a multiple of the modulus n is the same as adding 0. For instance, 2 + 45 ≡ 2 (mod 9) and 17 + 1000 ≡ 17 (mod 100).

This rule is a special case of the previous rule and is often useful in computations. For instance, –1 ≡ 99 (mod 100). So we can replace 99 in computations mod 100 with -1, which is a lot easier to work with. If we needed to compute $99^{50}$ modulo 100, we could replace 99 with -1 and note that $(-1)^{50}$ is 1, so $99^{50} \equiv 1 \pmod{100}$.

We have to be careful canceling terms. For example, 2 × 5 ≡ 2 × 11 (mod 12) but 5 ≢ 11 (mod 12). We see that we can't cancel the 2. In general, we have the following theorem:

In the example above, since gcd(2, 12) ≠ 1, we can't cancel out the 2. However, using the theorem we can say that 5 ≡ 11 (mod 6). On the other hand, given 2 × 3 ≡ 2 × 10 (mod 7), since gcd(2, 7) = 1, we can cancel out the 2 to get 3 ≡ 10 (mod 7).

Modular arithmetic is useful in that it can break things down into several cases to check. Here are a few examples:

To solve this, we find the possible values of $n^4$ modulo 10. There are 10 cases to check, $0^4, 1^4, \dots, 9^4$, since every integer is congruent to some integer from 0 to 9. We end up with $0^4 \equiv 0 \pmod{10}$, $5^4 \equiv 5 \pmod{10}$, $1^4 \equiv 3^4 \equiv 7^4 \equiv 9^4 \equiv 1 \pmod{10}$, and $2^4 \equiv 4^4 \equiv 6^4 \equiv 8^4 \equiv 6 \pmod{10}$.

To show this using modular arithmetic, we just consider cases modulo 4. Squaring 0, 1, 2, and 3 modulo 4 gives 0, 1, 0, and 1, so we see that $n^2 \equiv 0 \pmod 4$ or $n^2 \equiv 1 \pmod 4$, which is the same as saying that $n^2$ is of the form 4k or 4k+1.

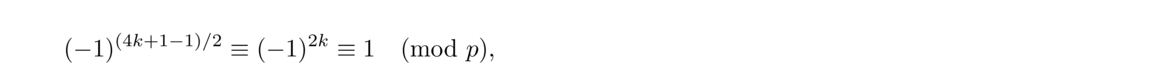

First, note that if p > 3 is prime, then p must be of the form 24k+r, where r = 1, 5, 7, 11, 13, 17, 19, or 23. Any other form is composite (for example 24k+3 is divisible by 3 and 24k+10 is divisible by 2). Thus p ≡ r (mod 24) for one of the above values of r, and it is not hard to check that $p^2-1 \equiv r^2-1 \equiv 0 \pmod{24}$ for each of those values.

In general, if an integer n is of the form ak+b, then we can write the congruence n ≡ b (mod a). The converse holds as well. For instance, all numbers of the form 5k+1 are congruent to 1 modulo 5 and vice-versa.

We have the following useful fact:

This follows from a fact proved in Section 2.1.

We often use this to break up a large mod into smaller mods. For example, suppose we want to show that $n^5$ and n always end in the same digit. That is, we want to show that $n^5 \equiv n \pmod{10}$. Using the above fact, we can do this by showing $n^5 \equiv n \pmod 2$ and $n^5 \equiv n \pmod 5$. The first congruence is easily seen to be true. For the second, we check the five cases $0^5$, $1^5$, $2^5$, $3^5$, and $4^5$. A short calculation verifies that the congruence holds for each of them.
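The case check is small enough to do by hand, but it also makes a nice illustration of how a claim about all integers reduces to finitely many residues. A minimal sketch, with a made-up helper name:

```python
def holds_for_all_residues(modulus):
    """Check r^5 ≡ r (mod modulus) for every residue r = 0, ..., modulus - 1."""
    return all(pow(r, 5, modulus) == r % modulus for r in range(modulus))


# The two small moduli used in the argument.
print(holds_for_all_residues(2), holds_for_all_residues(5))

# A direct spot check of the original claim about last digits.
print(all((n ** 5) % 10 == n % 10 for n in range(1000)))
```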

The theorem above can be generalized to the following:

If the moduli are not necessarily pairwise relatively prime, we still have

One of the most important parts of working with congruences is using the definition. In particular, we have that x ≡ y (mod n) provided n ∣ (x–y) or equivalently that x–y = nk for some integer k. Here are several examples:

We can turn this into a statement about congruences, namely $n^3 \equiv n \pmod 3$. Modulo 3 there are only three cases to check: n = 0, 1, and 2. And we have $0^3 \equiv 0 \pmod 3$, $1^3 \equiv 1 \pmod 3$, and $2^3 \equiv 2 \pmod 3$. Compare this argument to the longer one using the division algorithm in Example 1 of Section 1.2.

We can start by writing the first congruence as an equation: a–b = nk for some integer k. Add and subtract c to the left side to get a–b+(c–c) = nk. Then rearrange terms to get (a+c)–(b+c) = nk. Then rewrite this equation as the congruence a+c ≡ b+c (mod n), which is what we needed to show.

This is a little trickier. The definition tells us we need to show $n \mid (a^c-b^c)$. The trick is to factor $a^c-b^c$ into $(a-b)(a^{c-1}+a^{c-2}b+a^{c-3}b^2+\dots+ab^{c-2}+b^{c-1})$. Since a ≡ b (mod n), we have n ∣ (a–b). Since a–b is a factor of $a^c-b^c$, we get $n \mid (a^c-b^c)$.

Start with nk = ca–cb for some integer k. Also, since d is gcd(n, c), we have dx = n and dy = c for some integers x and y.

Plugging in, we get dxk = dya – dyb. We can cancel d to get xk = y(a–b). We know gcd(x, y) = 1 as otherwise any common factor of x and y could be included in d to get a larger common divisor of n and c. So we can use Euclid's lemma to conclude that x ∣ (a–b). Note that x = n/d, so we have a ≡ b (mod n/d).

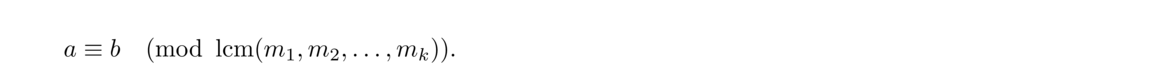

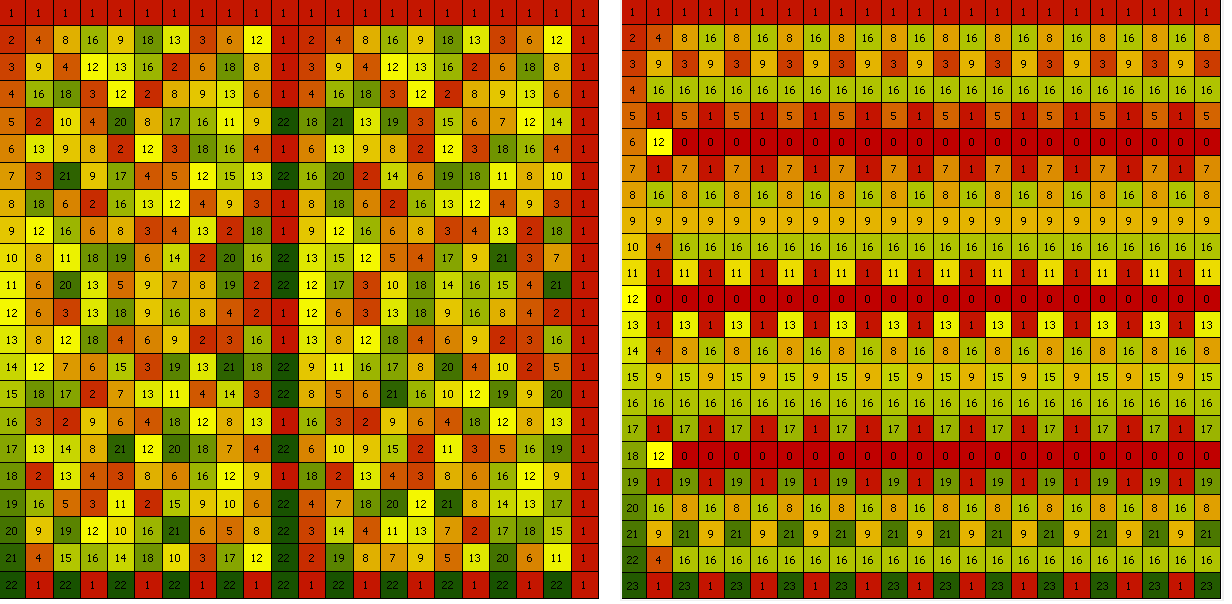

Powers turn out to be interesting in modular arithmetic. For instance, here is a table of powers modulo 7:

There are a number of interesting things here, some of which we will look at in detail later. We see that $a^6 \equiv 1 \pmod 7$ for all values of a. We can also see that some of the powers run through all the integers 1 through 6, while others don't. In fact, all the powers cycle with period 1, 2, 3, or 6, all of which are divisors of 6.

Note: To create the table, it is not necessary to compute large powers. For instance, instead of computing $5^3 = 125$ and reducing modulo 7, we can instead write $5^3 = 5^2 \cdot 5$. Since $5^2$ is 4 modulo 7, we get that $5^3 \equiv 4 \cdot 5$, which is 6 modulo 7.
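A table of powers can be generated with exactly this trick: multiply the previous entry by a and reduce, rather than computing large powers. The sketch below is one way to do it; the layout (one row per base a, columns for exponents 1 through n–1) and the name `power_table` are assumptions for this example.

```python
def power_table(n):
    """Map each a in 1..n-1 to the list [a^1, a^2, ..., a^(n-1)] reduced modulo n."""
    table = {}
    for a in range(1, n):
        row = []
        value = 1
        for _ in range(1, n):
            value = (value * a) % n   # next power from the previous one
            row.append(value)
        table[a] = row
    return table


table = power_table(7)
for a, row in table.items():
    print(a, row)
```

Each row ends in 1, which is the observation that the sixth power of every a from 1 to 6 is congruent to 1 modulo 7.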

Below are the tables for modulo 23 and 24. Smaller integers are colored red and larger values are green.**The program that produced these images can be found at http://www.brianheinold.net/mods.html.

Computing numbers to rather large powers turns out to be pretty important in number theory and cryptography. Here are a couple of examples:

We have 6 ≡ –1 (mod 7), so $6^{100} \equiv (-1)^{100} \equiv 1 \pmod 7$.

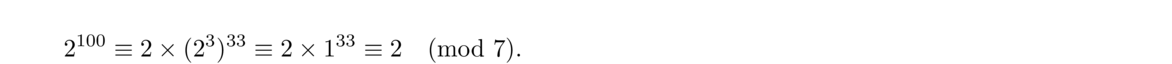

Note that $2^3 \equiv 1 \pmod 7$. Further, we can write 100 = 3 × 33 + 1. Thus we have $2^{100} = 2 \times (2^3)^{33}$. Then

In general, if we can spot a power that is simple, like $6^1 \equiv -1 \pmod 7$ or $2^3 \equiv 1 \pmod 7$, then we can leverage that to work out large powers. Otherwise, we can use the technique demonstrated below.

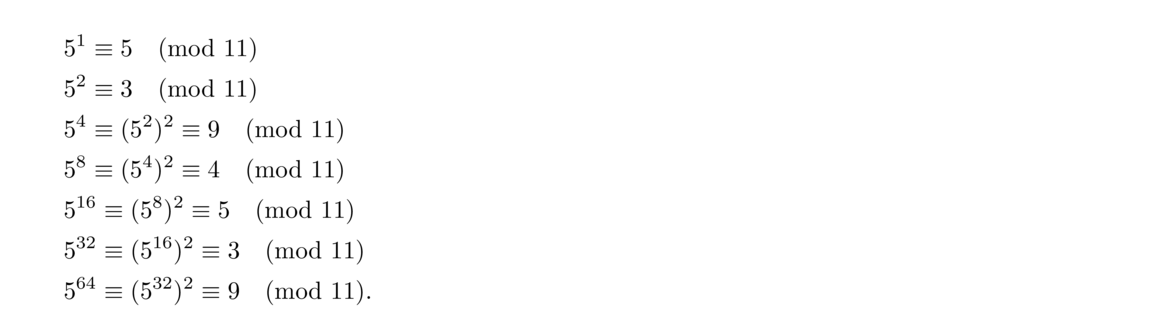

Suppose we want to compute $5^{100}$ (mod 11). Compute the following:

This process is called exponentiation by squaring. In general, to compute $a^b$ (mod n), we compute $a^1, a^2, a^4, a^8$, etc., up until the exponent is the largest power of 2 not exceeding b. We then write b in terms of those powers and use the rules of exponents to compute $a^b$. Writing b in terms of those powers is the same process as converting b to binary. For instance, 100 in binary is 1100100, which corresponds to 64 · 1 + 32 · 1 + 16 · 0 + 8 · 0 + 4 · 1 + 2 · 0 + 1 · 0, or 64+32+4.

Here is how we might code this algorithm in Python:
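A minimal sketch of exponentiation by squaring along the lines described above. The function name `mod_pow` and the choice to read the binary digits of the exponent from the right are assumptions of this sketch rather than a fixed part of the method.

```python
def mod_pow(a, b, n):
    """Compute a^b mod n for b >= 0 and n >= 1 by repeated squaring."""
    result = 1 % n       # handles the edge case n = 1
    square = a % n       # holds a^1, a^2, a^4, a^8, ... reduced modulo n
    while b > 0:
        if b & 1:        # the current binary digit of b is 1
            result = (result * square) % n
        square = (square * square) % n
        b >>= 1          # move on to the next binary digit
    return result


print(mod_pow(5, 100, 11))
print(mod_pow(5, 100, 11) == pow(5, 100, 11))
```

Because every intermediate value is reduced modulo n, the numbers involved never get larger than about the square of n, no matter how large the exponent is.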

Note, however, that this algorithm is already built into Python with the built-in function pow. In particular, pow(a, n, m) will compute $a^n$ mod m. It can handle quite large powers.

Note that this cannot happen if the modulus is a prime p. This is because if ab ≡ 0 (mod p), then we have p ∣ ab. By Euclid's lemma, since p is prime, either p ∣ a or p ∣ b, which would imply at least one of a and b is congruent to 0 modulo p.**By the more general version of Euclid's lemma (Theorem 7), if n is relatively prime to both a and b, then we can't have ab ≡ 0 (mod n).

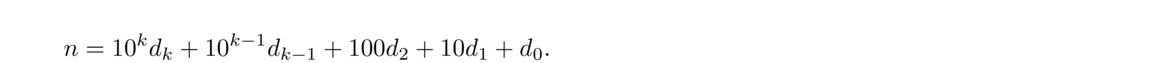

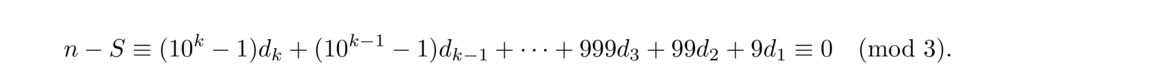

The key here is that when we compute n–S, each of the coefficients is a multiple of 3. It is possible to use this idea to develop tests for divisibility by other integers. For instance, for divisibility by 11, we use S = d0–d1+d2–d3+… ± dk, where the last sign is + or -, depending on whether k is even or odd. When we compute n–S, the coefficients become 11, 99, 1001, 9999, etc., which are all divisible by 11.

As another example, suppose we want a test for whether a four-digit number is divisible by 7. The ideas above can be streamlined into the following procedure:

The numbers in the second row are the closest multiples of 7 less than the powers of 10 directly above.

Our divisibility test for the four-digit number abcd is to check if 6a+2b+3c+d is divisible by 7. Or, since 6 ≡ –1 (mod 7), we can check if –a+2b+3c+d is divisible by 7.
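The tests for 11 and 7 described above can be sketched and compared against direct division. The function names are invented for this example, and the digits $d_0, d_1, \dots$ are taken to run from the ones digit upward, matching the alternating sum in the text.

```python
def alternating_digit_sum(n):
    """d0 - d1 + d2 - d3 + ..., where d0 is the ones digit of n."""
    digits = [int(ch) for ch in reversed(str(n))]
    return sum(d if i % 2 == 0 else -d for i, d in enumerate(digits))


def divisible_by_11(n):
    """Test divisibility by 11 using the alternating digit sum."""
    return alternating_digit_sum(n) % 11 == 0


def four_digit_divisible_by_7(n):
    """Test a number abcd (0 <= n <= 9999) by checking 6a + 2b + 3c + d."""
    a, b, c, d = (int(ch) for ch in f"{n:04d}")
    return (6 * a + 2 * b + 3 * c + d) % 7 == 0


print(divisible_by_11(918082), 918082 % 11 == 0)
print(four_digit_divisible_by_7(1001), 1001 % 7 == 0)

# Compare both tests against ordinary division.
print(all(divisible_by_11(n) == (n % 11 == 0) for n in range(100000)))
print(all(four_digit_divisible_by_7(n) == (n % 7 == 0) for n in range(10000)))
```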

Reduce (b+c–2a+1) modulo 7. A 0 corresponds to Sunday, 1 to Monday, etc.

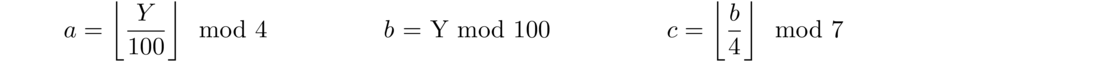

For example, if y = 1977, then a = 19 mod 4 = 3, b = 77, and c = floor(77/4) mod 7 = 5. Then we get b+c–2a+1 ≡ 0 (mod 7), so Christmas was on a Sunday in 1977.
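Here is a sketch of the Christmas computation, with a, b, and c defined the way the 1977 example uses them: a is the first two digits of the year mod 4, b is the last two digits, and c is floor(b/4) mod 7. Those definitions are inferred from the example, and the comparison against Python's datetime module assumes the Gregorian calendar throughout.

```python
import datetime

DAY_NAMES = ["Sunday", "Monday", "Tuesday", "Wednesday",
             "Thursday", "Friday", "Saturday"]


def christmas_weekday(year):
    """Day of the week of December 25, with 0 = Sunday, 1 = Monday, etc."""
    century, b = divmod(year, 100)   # first two digits, last two digits
    a = century % 4
    c = (b // 4) % 7
    return (b + c - 2 * a + 1) % 7


def christmas_weekday_reference(year):
    """The same quantity from datetime, which numbers Monday as 0."""
    return (datetime.date(year, 12, 25).weekday() + 1) % 7


print(DAY_NAMES[christmas_weekday(1977)])
print(all(christmas_weekday(y) == christmas_weekday_reference(y)
          for y in range(1600, 2401)))
```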

A more general process can be used to find the day of the week of any date in history. See Secrets of Mental Math by Art Benjamin and Michael Shermer. Modular arithmetic can also be used to determine the date of Easter in a given year.

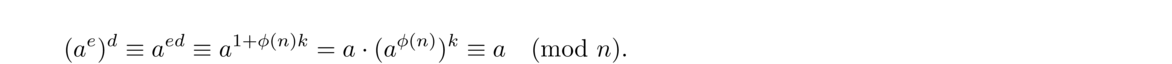

Fermat's little theorem is a useful rule that is simple to state:

An equivalent way to state the theorem is: If p is prime, then $a^p \equiv a \pmod p$ for any integer a.

To get from this statement to the original, we can divide both sides through by a, which works as long as gcd(p, a) = 1. To get from the original to this statement, just multiply through by a.

We will now prove Fermat's little theorem. To do so, we will need the following lemma:

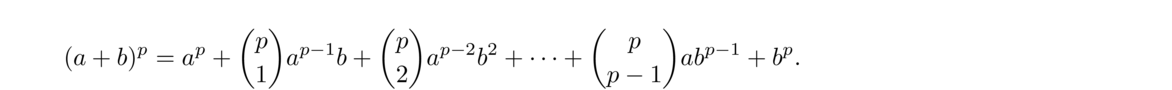

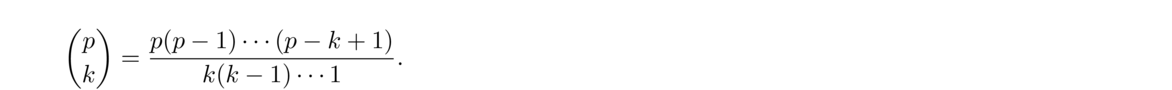

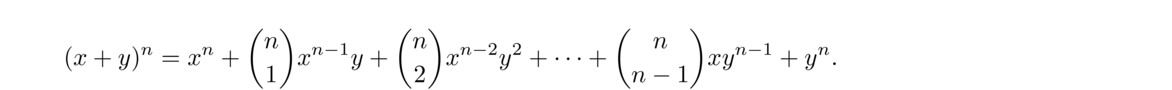

The lemma above is sometimes called the freshman's dream since many freshman calculus and algebra students want to say $(a+b)^2 = a^2+b^2$, forgetting the 2ab term. You can't forget the 2ab term in ordinary arithmetic, but you can when working mod 2.

We can now prove the alternate statement of Fermat's little theorem ($a^p \equiv a \pmod p$) using the lemma.

Here are some examples of Fermat's little theorem in action:

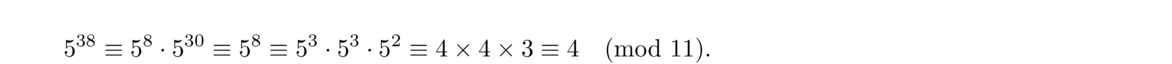

By Fermat's little theorem, $5^{10} \equiv 1 \pmod{11}$. Thus $5^{30} \equiv 1 \pmod{11}$ and so

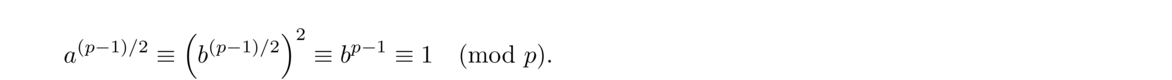

By Fermat's little theorem, we have $a \cdot a^{p-2} \equiv a^{p-1} \equiv 1 \pmod p$. From this we see that $a^{p-2}$ fits the definition of $a^{-1}$. As an example, the inverse of 3 modulo 7 is $3^5 \equiv 5 \pmod 7$.

By Fermat's little theorem, $a^6 \equiv 1 \pmod 7$. From this we get that $7 \mid (a^6-1)$. We can factor $a^6-1$ into $(a^3-1)(a^3+1)$ and use Euclid's lemma to conclude that $7 \mid (a^3-1)$ or $7 \mid (a^3+1)$.

By Fermat's little theorem, we have $p^{q-1} \equiv 1 \pmod q$. Also, $q^{p-1} \equiv 0 \pmod q$. Adding these gives $p^{q-1}+q^{p-1} \equiv 1 \pmod q$. A similar argument shows $p^{q-1}+q^{p-1} \equiv 1 \pmod p$. Since the same congruence holds modulo p and modulo q (and gcd(p, q) = 1), it holds modulo pq.

These numbers are of the form $1+10+10^2+10^3+\dots+10^k$, which can be rewritten as $\frac{10^{k+1}-1}{9}$ using the geometric series formula. By Fermat's little theorem $10^{p-1} \equiv 1 \pmod p$ for any prime p such that gcd(p, 10) = 1 (i.e. for any prime besides 2 and 5). Thus also $10^{2(p-1)}$, $10^{3(p-1)}$, etc. are congruent to 1 modulo p. Thus $\frac{10^{k+1}-1}{9}$ will be congruent to 0 modulo p for infinitely many values of k.

For example, the integers 111111 (6 ones), 111111111111 (12 ones), 111111111111111111 (18 ones) etc. are all divisible by 7 since $10^6 \equiv 1 \pmod 7$. As another example, numbers that consist of 16, 32, 48, etc. ones are all divisible by 17 since $10^{16} \equiv 1 \pmod{17}$.

Euler's theorem is a generalization of Fermat's little theorem to nonprimes.

Recall that φ(n) is the Euler phi function from Section 2.15. Since φ(p) = p–1 for any prime p, we see that Euler's theorem reduces to Fermat's little theorem when n = p is prime.

As an example of Euler's theorem, $3^4 \equiv 1 \pmod{10}$ since gcd(3, 10) = 1 and φ(10) = 4.

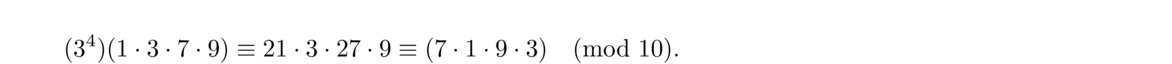

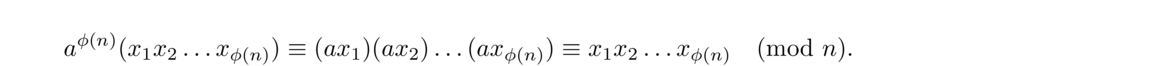

To understand why this works, recall that 1, 3, 7, and 9 are the φ(10) = 4 integers relatively prime to 10. Multiply each of these by a = 3 to get 3, 9, 21, and 27, which reduce modulo 10 to 3, 9, 1, and 7, respectively. We see these are a rearrangement of the originals. So

The $ax_i$ are distinct because if $ax_i \equiv ax_j \pmod n$, then since gcd(a, n) = 1, we can cancel a to get $x_i \equiv x_j \pmod n$.

We have $ax_i$ is relatively prime to n because if some prime p divides $ax_i$, then by Euclid's lemma, p ∣ a or $p \mid x_i$, and as $\gcd(a, n) = \gcd(x_i, n) = 1$, p cannot divide n. Thus $ax_i$ and n cannot have any prime factors (and hence any factors besides 1) in common.

Thus we have

The notation $\mathbb{Z}_n$ refers to the set {0, 1, 2, …, n–1} with all arithmetic done modulo n.**In many texts the notation ℤ/nℤ is used. More formally, the way $\mathbb{Z}_n$ is defined usually goes as follows:

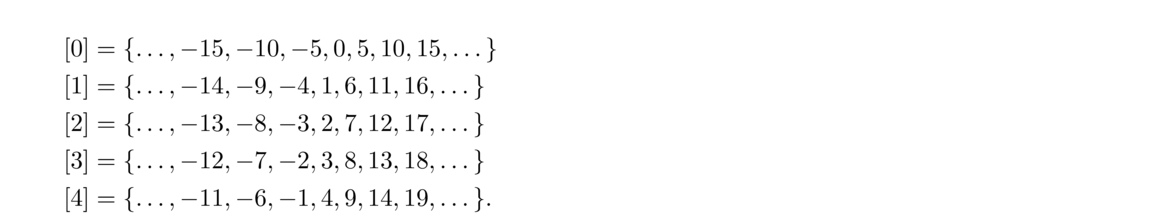

The relation ≡ is an equivalence relation (it is reflexive, symmetric, and transitive). As such, it partitions ℤ into disjoint sets called equivalence classes, where every integer in a given set is congruent to everything else in that set and nothing else.

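For instance, here are the sets we get modulo 5:

{…, –10, –5, 0, 5, 10, …}

{…, –9, –4, 1, 6, 11, …}

{…, –8, –3, 2, 7, 12, …}

{…, –7, –2, 3, 8, 13, …}

{…, –6, –1, 4, 9, 14, …}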

The formal definition is used to make sure everything is on a firm mathematical footing. There is more to show to make sure that everything works out mathematically, but we will skip that here and just think of ℤn as the set {0, 1, …, n–1} with arithmetic done modulo n.

Some integers have an inverse in $\mathbb{Z}_n$. That is, for some integers a, there exists an integer $a^{-1}$ such that $aa^{-1} \equiv 1 \pmod{n}$. For instance, in $\mathbb{Z}_7$, 3 · 5 ≡ 1 (mod 7), so we can say that the inverse of 3 is 5 (and also that the inverse of 5 is 3). Here is a useful fact about inverses.

To see that the inverse is unique, suppose ax ≡ 1 (mod p) and ay ≡ 1 (mod p). Then ax ≡ ay (mod p) and since gcd(a, p) = 1, we can cancel a to get x ≡ y (mod p).

The particular case of interest is ℤp where p is prime:

For instance, in $\mathbb{Z}_7$, we have $1^{-1} = 1$, $2^{-1} = 4$, $3^{-1} = 5$, $4^{-1} = 2$, $5^{-1} = 3$, and $6^{-1} = 6$.
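A table of inverses like this one can be produced by brute force. The sketch below is only an illustration (the name `inverses` is an assumption), and it tries every candidate, which is fine for small moduli.

```python
def inverses(n):
    """Map each a in 1..n-1 that has an inverse modulo n to that inverse."""
    table = {}
    for a in range(1, n):
        for b in range(1, n):
            if (a * b) % n == 1:
                table[a] = b
                break
    return table

print(inverses(7))
print(inverses(8))  # only integers relatively prime to 8 appear as keys
```

In Python 3.8 and later, `pow(a, -1, n)` computes the same inverse directly and raises `ValueError` when none exists.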

Notice that 1 and 6 (which is -1 mod 7) are their own inverses. We have the following:

Now assume n = p is prime. If a is its own inverse, then a · a ≡ 1 (mod p). From this we get that $p \mid (a^2-1)$. We can factor $a^2-1$ into (a–1)(a+1). By Euclid's lemma, p ∣ a–1 or p ∣ a+1, which tells us that a ≡ 1 (mod p) or a ≡ –1 (mod p).

Modulo a composite, there can be integers besides ± 1 that are their own inverses. For instance, 5 · 5 ≡ 1 (mod 8), so 5 is its own inverse mod 8.

Wilson's theorem is a nice theorem that gives a simple characterization of prime numbers in terms of modular arithmetic.

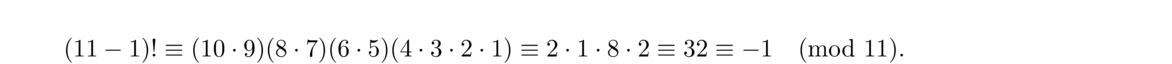

This gives us a way to check if a number is prime: just compute (p–1)! modulo p. Its fatal flaw is that (p–1)! is huge and difficult to compute even for relatively small values of p. So Wilson's theorem is not a practical primality test, unless someone were to find an easy way to compute factorials modulo a prime. Still, here is an example with p = 11:

$$10! = 3628800 = 11 \cdot 329890 + 10 \equiv -1 \pmod{11}.$$

The proof of Wilson's theorem is interesting. Let's look at some examples to help understand it.

Take p = 14, a composite. We have (14–1)! ≡ 0 (mod 14) since 13! contains 2 · 7 = 14. This will work in general for a composite number—factor it and find its factors in (p–1)!. We have to be a little careful if p is the square of a prime, though. But as long as p > 4, we can still find its factors in (p–1)!. For instance, if p = 25, then (25–1)! ≡ 0 (mod 25) since 24! is divisible by both 5 and 10 (which contains a factor of 5), so we do get 25 ∣ 24!.

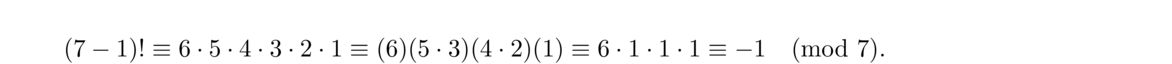

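Now take p = 7, a prime. In $\mathbb{Z}_7$, 2 and 4 are inverses of each other, as are 3 and 5. Then we have

$$6! = 6 \cdot (5 \cdot 3) \cdot (4 \cdot 2) \cdot 1 \equiv 6 \cdot 1 \cdot 1 \cdot 1 \equiv -1 \pmod{7}.$$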

Here is a formal write-up of the proof:

If p is not prime and p > 4, then (p–1)! ≡ 0 (mod p) since p ∣ (p–1)!. We have this because we can write p = ab for some integers a and b with 1 < a, b < p (since p is not prime), and those integers both show up in (p–1)!, except possibly if p is the square of a prime, say $p = q^2$. But in that case, as long as p > 4, we know that both q and 2q will show up in (p–1)!. (For p = 4, we have 3! = 6 ≡ 2 (mod 4), which is still not congruent to –1.)

On the other hand, suppose p is prime. By Theorems 25 and 27, if p is prime, then in ℤp each integer 1, 2, … , p–1 has a unique inverse, with 1 and p–1 being the only integers that are their own inverses. This means that all the other integers come in inverse pairs. Thus, looking at (p–1)! = (p–1)(p–2)… 3 · 2 · 1, we know that all the terms in the middle, p–2, p–3, …, 3, 2 pair off into inverse pairs and cancel each other out, leaving us with (p–1)! ≡ p–1 ≡ –1 (mod p).
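As an illustration, here is a short Python sketch of Wilson's theorem used as a (slow) primality test. The names `factorial_mod` and `is_prime_wilson` are assumptions for this example. Reducing modulo n after each multiplication keeps the numbers small, but the loop still takes about p steps, which is why this is not practical for large p.

```python
def factorial_mod(m, n):
    """Compute m! modulo n, reducing after every multiplication."""
    result = 1
    for i in range(2, m + 1):
        result = (result * i) % n
    return result

def is_prime_wilson(p):
    """Wilson's theorem: p > 1 is prime exactly when (p-1)! = -1 (mod p)."""
    return p > 1 and factorial_mod(p - 1, p) == p - 1

print([p for p in range(2, 40) if is_prime_wilson(p)])
print(factorial_mod(13, 14), factorial_mod(24, 25), factorial_mod(3, 4))
```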

Even though Wilson's theorem is not practical for checking primality, it is a useful tool in proofs. There is also an interesting twin-prime analogue of Wilson's theorem proved by P. Clement in 1949: (p, p+2) are a twin-prime pair if and only if 4(p–1)! ≡ –(p+4) (mod p(p+2)). For example, with p = 3 we get 4 · 2! = 8 and –(3+4) = –7 ≡ 8 (mod 15).
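Clement's criterion can be tested numerically. The sketch below uses it in the equivalent form 4((p–1)! + 1) + p ≡ 0 (mod p(p+2)) for p ≥ 2; the function name is an assumption for this illustration, and like Wilson's theorem this is far too slow to be a practical test.

```python
def is_twin_pair_clement(p):
    """Check whether 4*((p-1)! + 1) + p is divisible by p*(p+2)."""
    m = p * (p + 2)
    f = 1
    for i in range(2, p):      # builds (p-1)! modulo m
        f = (f * i) % m
    return (4 * (f + 1) + p) % m == 0

print([p for p in range(2, 80) if is_twin_pair_clement(p)])
```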

For example, suppose we want to solve 2x ≡ 5 (mod 11). To solve the ordinary equation 2x = 5, we would divide by 2 to get x = 2.5, but in modular arithmetic, we don't quite have division, so we have to find other approaches. A simple approach is trial and error, as there are only 11 values of x to try. The solution ends up being x = 8. We will see some faster approaches shortly.

The algebraic equation ax = b always has a single solution (unless a = 0), but with ax ≡ b (mod n), it often happens that there is no solution or multiple solutions. For example, 2x ≡ 5 (mod 10) has no solution, while 2x ≡ 4 (mod 10) has two solutions modulo 10, namely x = 2 and x = 7.
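The trial-and-error approach is simple to write down in code. This is a minimal sketch (the name `solve_by_trial` is an assumption) that tries every residue modulo n:

```python
def solve_by_trial(a, b, n):
    """Return every x in 0..n-1 with a*x congruent to b modulo n."""
    return [x for x in range(n) if (a * x - b) % n == 0]

print(solve_by_trial(2, 5, 11))
print(solve_by_trial(2, 5, 10))
print(solve_by_trial(2, 4, 10))
```

This takes n steps, so it is only reasonable for small moduli.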

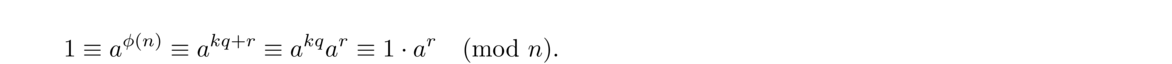

Let d = gcd(a, n).

The idea for why this works is that we can write ax ≡ b (mod n) as ax–nk = b for some integer k. Thus, solving the congruence is the same as finding integer solutions to the equation ax–nk = b. We know from Theorem 3 that such an equation only has a solution provided d = gcd(a, n) divides b. Further, the extended Euclidean algorithm can be used to find x and k in the equation.

To see where the formula for all the solutions comes from, note that we can divide ax ≡ b (mod n) through by d to get $\frac{a}{d} x \equiv \frac{b}{d} \pmod{\frac{n}{d}}$. By a similar argument to the one used to prove Theorem 25 (about the existence of inverses), this equation must have a unique solution: $x \equiv x_0 \pmod{\frac{n}{d}}$. So $x_0$ will also be a solution modulo n, as will $x_0+\frac{n}{d}$, $x_0+2\frac{n}{d}$, $x_0+3\frac{n}{d}$, …, $x_0+(d-1)\frac{n}{d}$, which are all less than n and congruent to $x_0$ modulo $\frac{n}{d}$. This gets us d different solutions. It is not hard to show that these are the only solutions.
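The procedure just described can be sketched in Python as follows. This is an illustration under some assumptions: a and n are positive, the names `ext_gcd` and `solve_congruence` are made up for this example, and the extended Euclidean routine is written out here rather than taken from the program of Section 1.6.

```python
from math import gcd

def ext_gcd(a, b):
    """Return (g, x, y) with a*x + b*y = g, where g is a gcd of a and b."""
    x0, y0, x1, y1 = 1, 0, 0, 1
    while b != 0:
        q = a // b
        a, b = b, a - q * b
        x0, x1 = x1, x0 - q * x1
        y0, y1 = y1, y0 - q * y1
    return a, x0, y0

def solve_congruence(a, b, n):
    """Return all solutions x in 0..n-1 of a*x = b (mod n), for a, n > 0."""
    d = gcd(a, n)
    if b % d != 0:
        return []                       # no solution unless d divides b
    _, x, _ = ext_gcd(a // d, n // d)   # (a/d)*x + (n/d)*y = 1
    x0 = (x * (b // d)) % (n // d)      # the unique solution modulo n/d
    return [x0 + i * (n // d) for i in range(d)]

print(solve_congruence(12, 18, 30))
print(solve_congruence(3, 11, 36))
print(solve_congruence(2, 5, 11))
```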

Below are a few different ways to find an initial solution x0.

One problem with this is that finding $a^{-1}$ itself takes some work. However, if you have a lot of equations of the form ax ≡ n (mod b), where a is fixed, but n varies, then it makes sense to use this method, since all the work goes into finding $a^{-1}$ and once we have it, it is short work to solve all those equations.

One way to find $a^{-1}$ is to note that if p is prime and gcd(a, p) = 1, then by Fermat's little theorem, $a^{-1} \equiv a^{p-2} \pmod{p}$. In general, by Euler's theorem, $a^{-1} \equiv a^{\varphi(n)-1} \pmod{n}$ as long as gcd(a, n) = 1.
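As a quick illustration of computing an inverse through Euler's theorem, here is a Python sketch. The names `phi` and `inverse_euler` are assumptions for this example, n is assumed to be at least 2, and `phi` counts directly, so this is meant for small n only.

```python
from math import gcd

def phi(n):
    """Euler phi function by direct counting (fine for small n)."""
    return sum(1 for x in range(1, n + 1) if gcd(x, n) == 1)

def inverse_euler(a, n):
    """Return a**(phi(n) - 1) mod n, the inverse of a when gcd(a, n) = 1."""
    if gcd(a, n) != 1:
        raise ValueError("a has no inverse modulo n")
    return pow(a, phi(n) - 1, n)

print(inverse_euler(3, 7))    # uses 3**5 mod 7, the Fermat case
print(inverse_euler(3, 10))   # uses 3**3 mod 10
```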

Solution: Since gcd(3, 36) = 3 and 3 ∤ 11, there is no solution.

Solution: Since gcd(14, 37) = 1, there will be exactly 1 solution.

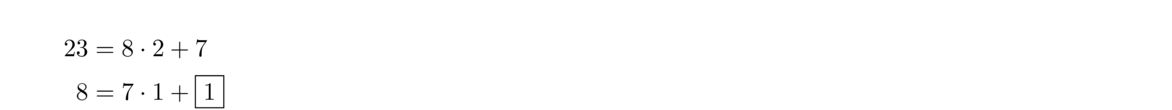

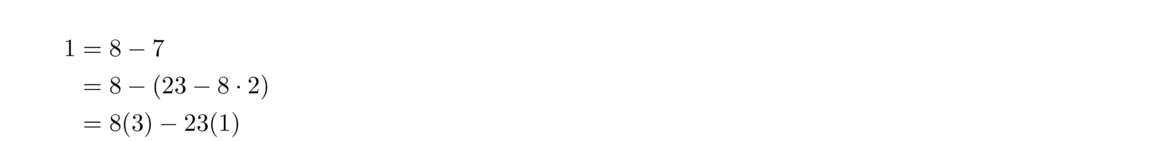

Using the Euclidean algorithm on 14 and 37, we get

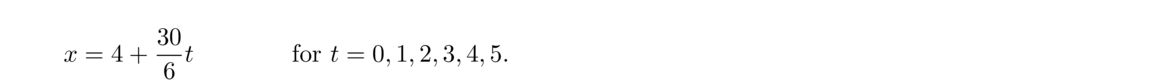

Solution: Since gcd(12, 30) = 6 and 6 ∣ 18, there are 6 different solutions mod 30. We can divide the whole equation through by gcd(12, 30) = 6 to get 2x ≡ 3 (mod 5). By trial and error, we get that $x_0 = 4$ is a solution. Then the solutions of the original are x = 4, 9, 14, 19, 24, and 29 (mod 30).

Diophantine equations are algebraic problems where we are looking for integer solutions. They are among the trickiest problems in mathematics. For instance, Fermat's Last Theorem, which took over 350 years and some seriously high-powered math to solve, is about showing that $x^n+y^n = z^n$ has no positive integer solutions if n > 2. However, linear Diophantine equations of the form ax+by = c can be easily solved since they are closely related to the congruence ax ≡ c (mod b).

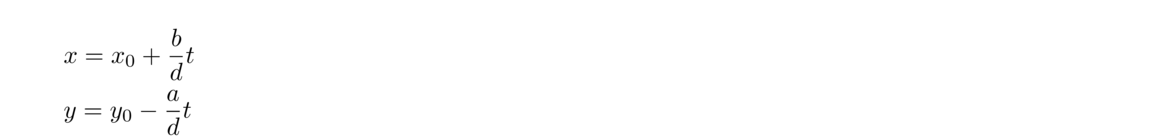

Using the formula for the solutions to that congruence gives us the following formula for solutions to ax+by = c:

Here are a few example problems:

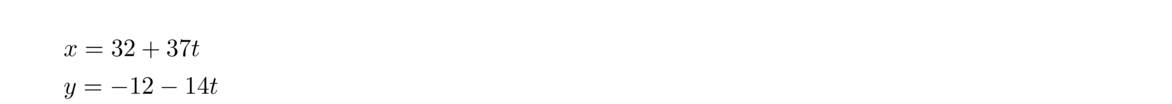

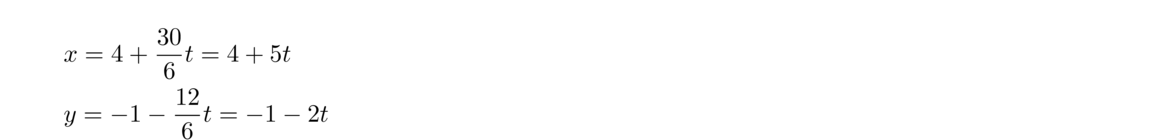

Solution: Notice that this is equivalent to the congruence 37x ≡ 11 (mod 14), which we did earlier. In that example, we found 14(32) + 37(–12) = 4. From this, we get x0 = 32 and y0 = –12. From here, all the solutions are of the form

Solution: This equation is also equivalent to a congruence we solved earlier. In that example, we got $x_0 = 4$, and from $12x_0+30y_0 = 18$, we get $y_0 = -1$. Then all solutions are of the form

This reduces to the equation 69x+75y = 1209.

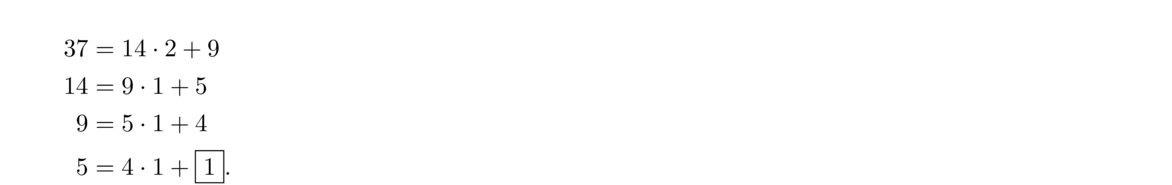

The extended Euclidean algorithm gives 69(12)+75(–11) = 3 (we'll skip the details here; a quick way to get this would be to use the program of Section 1.6).

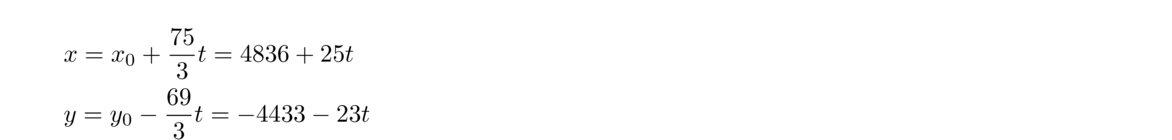

Multiply through by 403 to get 69(4836)+75(–4433) = 1209. All the solutions are of the form

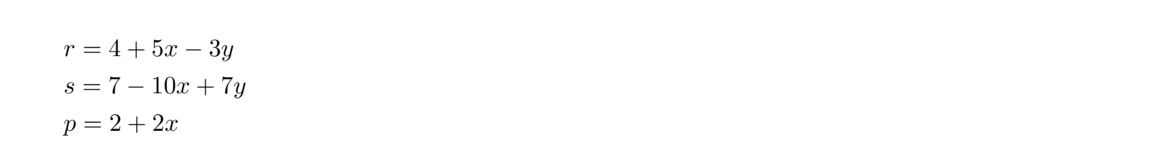

Letting r, s, and p denote the values of rubies, sapphires, and pearls, we have 5r+8s+7p+92 = 19r + 14s + 2p + 4, which becomes 14r+6s–5p = 88. This has three variables, as opposed to our previous examples which all have two.

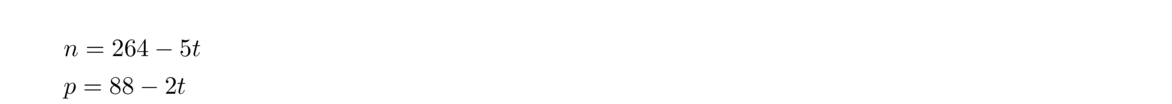

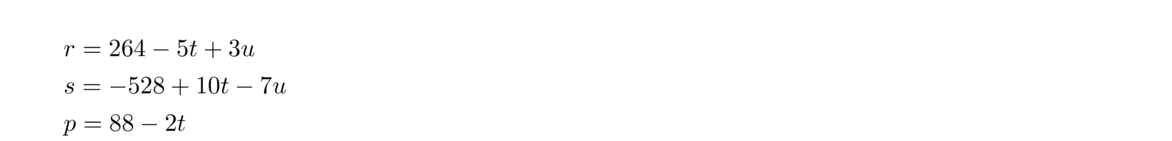

To handle this we start with the first two terms, 14r+6s. We have gcd(14, 6) = 2 and we can write 14(1)+6(–2) = 2. Thus, the quantity 14r+6s is always a multiple of 2, say 2n for some integer n. Then consider 2n–5p = 88. We have gcd(2, 5) = 1 and we can write 2(3)–5(1) = 1. Multiply through by 88 to get 2(264)–5(88) = 88. Thus all solutions of 2n–5p = 88 can be written in the form

The example above is an equation of the form ax+by+cz = d. The procedure used in that example can be streamlined and used in general:

In general a1x1 + a2x2 + … + anxn = b has a solution provided gcd(a1, a2, …, an) ∣ b. The equation can be solved by an iterative process like above.
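One way to carry out this iterative process in code is sketched below. It finds a single particular solution, not the general one. The names `ext_gcd` and `solve_linear` are assumptions for this illustration, the extended Euclidean routine is written out here rather than taken from Section 1.6, and the coefficients are assumed not to be all zero.

```python
def ext_gcd(a, b):
    """Return (g, x, y) with a*x + b*y = g, where g is a gcd (possibly negative)."""
    x0, y0, x1, y1 = 1, 0, 0, 1
    while b != 0:
        q = a // b
        a, b = b, a - q * b
        x0, x1 = x1, x0 - q * x1
        y0, y1 = y1, y0 - q * y1
    return a, x0, y0

def solve_linear(coeffs, b):
    """Return one integer solution of sum(c_i * x_i) = b, or None if none exists."""
    g, sol = coeffs[0], [1]            # invariant: sum(c_i * sol_i) == g
    for c in coeffs[1:]:
        g, u, v = ext_gcd(g, c)        # new g = u * (old g) + v * c
        sol = [u * s for s in sol] + [v]
    if b % g != 0:
        return None                    # the gcd of the coefficients must divide b
    scale = b // g
    return [scale * s for s in sol]

r, s, p = solve_linear([14, 6, -5], 88)
print(r, s, p, 14 * r + 6 * s - 5 * p)
print(solve_linear([69, 75], 1209))
```

The solution returned depends on the order of the coefficients and is generally not the smallest one.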

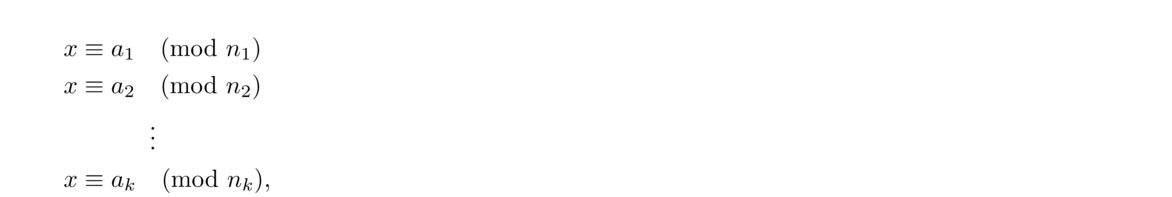

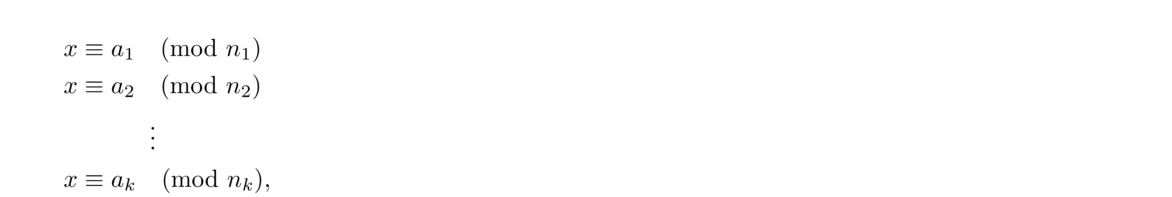

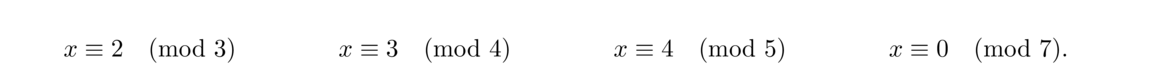

Just like we can solve systems of algebraic equations, we can solve systems of congruences. The technique used is called the Chinese remainder theorem. The name comes from its appearance in a third-century Chinese manuscript.

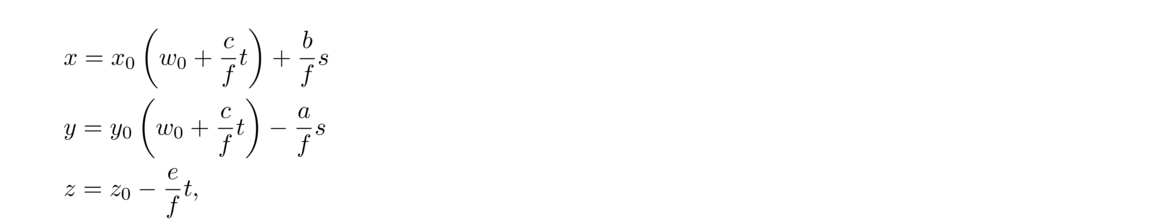

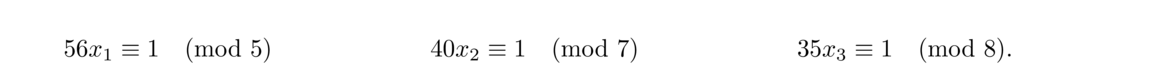

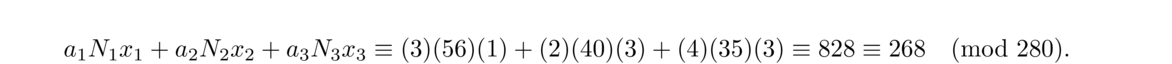

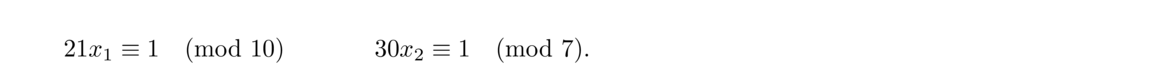

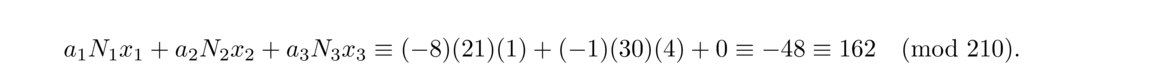

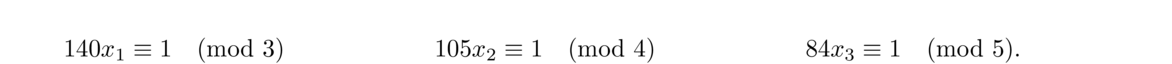

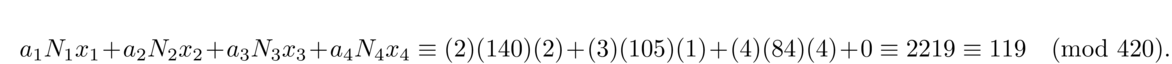

To solve such a system, let $N = n_1 n_2 \cdots n_k$. Then for each i = 1, 2, …, k, let $N_i = N/n_i$ (the product of all the moduli except $n_i$), and solve the congruence $N_i x_i \equiv 1 \pmod{n_i}$. The solution to the system is given by $a_1N_1x_1 + a_2N_2x_2 + \dots + a_kN_kx_k$, which is unique modulo N.
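The recipe above translates almost line for line into Python. This is a minimal sketch assuming the moduli are pairwise relatively prime; the function name `crt` is an assumption, and each $x_i$ is found by trial and error, which is fine for small moduli.

```python
def crt(residues, moduli):
    """Solve x = a_i (mod n_i) for pairwise relatively prime moduli n_i."""
    N = 1
    for n_i in moduli:
        N *= n_i
    total = 0
    for a_i, n_i in zip(residues, moduli):
        N_i = N // n_i
        # Solve N_i * x_i = 1 (mod n_i) by trying every residue.
        x_i = next(x for x in range(n_i) if (N_i * x - 1) % n_i == 0)
        total += a_i * N_i * x_i
    return total % N

# x = 2 (mod 3), x = 3 (mod 5), x = 2 (mod 7)
print(crt([2, 3, 2], [3, 5, 7]))
```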

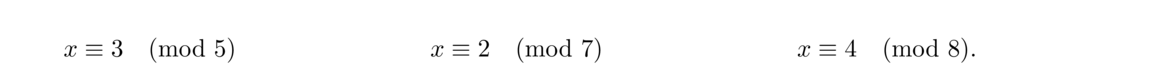

We can describe this problem with a system of three congruences:

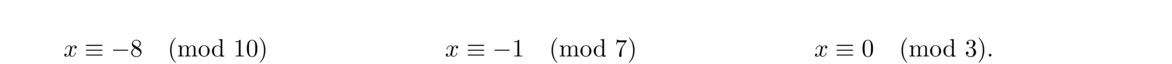

The Chinese remainder theorem can't be used if the moduli are not relatively prime, but there are things that can be done:

The problem is that the moduli 4 and 6 share a factor of 2. We can set $c_1 = m_1$, $c_2 = m_2$, and remove a factor of 2 from $m_3 = 6$ to get $c_3 = 3$. This doesn't change the lcm, as lcm(4, 5, 6) = 60 and lcm(4, 5, 3) = 60. We then solve the reduced system

One way to think about this is if a number is of the form 4k+1 and 6k+3, then it is of the form 12k+9.

It is not too hard to generalize the procedure above to solve $x \equiv a_1 \pmod{n_1}$ and $x \equiv a_2 \pmod{n_2}$. What we do is set $d = \gcd(n_1, n_2)$, solve $\frac{n_1}{d} k \equiv \frac{a_2-a_1}{d} \pmod{\frac{n_2}{d}}$ for k, and then the solution to both congruences is $x \equiv a_1 + n_1 k \pmod{\operatorname{lcm}(n_1, n_2)}$. Note that this works provided $d \mid (a_2-a_1)$.
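Here is a hedged Python sketch of this two-congruence procedure, written with the moduli called n1 and n2. The function name `merge` is an assumption, and k is found by trial and error rather than by a faster method.

```python
from math import gcd

def merge(a1, n1, a2, n2):
    """Combine x = a1 (mod n1) and x = a2 (mod n2).

    Returns (x, lcm(n1, n2)), or None if the congruences are incompatible.
    """
    d = gcd(n1, n2)
    if (a2 - a1) % d != 0:
        return None
    m = n2 // d
    # Solve (n1/d) * k = (a2 - a1)/d (mod n2/d) by trying every residue.
    k = next(k for k in range(m) if ((n1 // d) * k - (a2 - a1) // d) % m == 0)
    lcm = n1 * m
    return (a1 + n1 * k) % lcm, lcm

print(merge(1, 4, 3, 6))   # numbers of the form 4k+1 and 6k+3
print(merge(1, 4, 2, 6))   # incompatible: 2 does not divide 2 - 1
```

A longer system can be handled by merging the congruences two at a time.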

In general, some combination of these techniques can be used for tricky problems. In fact, we have the following theorem:

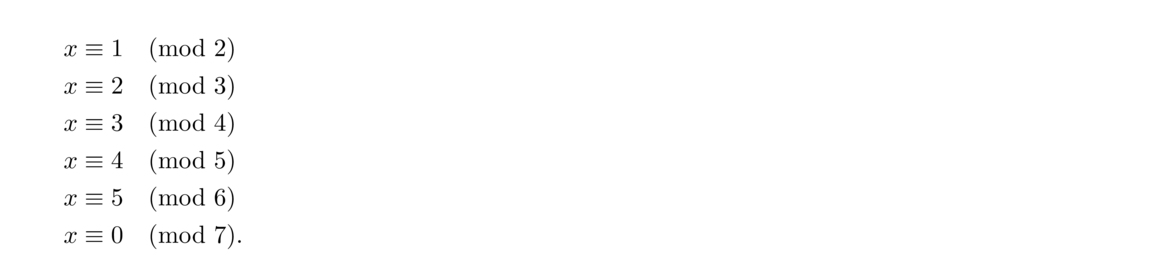

A classic Chinese remainder theorem problem is the following: There are some eggs in a basket. When they are removed in pairs, there is one left over. When three at a time are removed, there are two left over. When four, five, six, or seven at a time are removed, there remain three, four, five, or zero respectively. How many eggs are in the basket?

This corresponds to the following system:

Then the remaining moduli 3, 4, 5, 6, 7 can be reduced to 3, 4, 5, 3, 7 using the first trick given above. This turns x ≡ 5 (mod 6) into x ≡ 5 (mod 3), which is the same as x ≡ 2 (mod 3), which we already have, so we can drop it. We are thus left with the following:

All of the congruences we considered above are of the form x ≡ a (mod m). It is possible to have more general cases, where we have cx ≡ a (mod m). To handle these, we first have to solve them for x.

The Chinese remainder theorem has a number of applications. As seen above, it is useful any time we need to know when several cyclical events will line up. The Chinese remainder theorem is also an important part of modern cryptography, and it shows up here and there in higher math.

One important use of the Chinese remainder theorem is breaking up composite moduli into smaller pieces that are easier to work with. For instance, if we need to solve a congruence f(x) ≡ a (mod mn) with gcd(m, n) = 1, we can solve the congruences f(x) ≡ a (mod m) and f(x) ≡ a (mod n) and combine them by the Chinese remainder theorem to get a solution modulo mn. Note the similarity between this and the fact mentioned in Section 3.1.

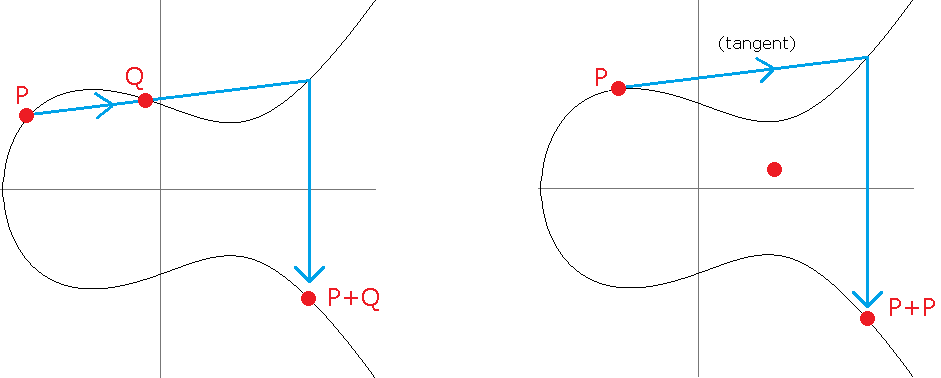

It is interesting to look at powers modulo an integer. For example, if we look at the powers of 2 modulo 9, we get the repeating sequence 2, 4, 8, 7, 5, 1, 2, 4, 8, 7, 5, 1, …. If we look at the powers of 7 modulo 9, we get the repeating sequence 7, 4, 1, 7, 4, 1, …. The powers of 8 give the repeating sequence 8, 1, 8, 1, …. The goal of this section is to understand a little about these repeating sequences.

In particular, we are interested in the first power that turns out to be 1. This power is called the order. We have the following definition:

For instance, the order of 7 modulo 9 is 3, since $7^3 \equiv 1 \pmod{9}$ and no smaller positive power of 7 ($7^1$ or $7^2$) is congruent to 1.

If a is not relatively prime to n, the order is undefined, as no power of a besides $a^0$ can ever be congruent to 1. So we will only concern ourselves with values of a that are relatively prime to n.

Euler's theorem tells us that $a^{\varphi(n)} \equiv 1 \pmod{n}$, so the order must always be no greater than φ(n). But there is an even closer connection between the order and φ(n), namely that the order must always be a divisor of φ(n).
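Orders are easy to compute by repeated multiplication. The following sketch assumes n ≥ 2; the function name `order` is an illustrative choice, and it returns None when a is not relatively prime to n.

```python
from math import gcd

def order(a, n):
    """Return the smallest k >= 1 with a**k = 1 (mod n), or None if undefined."""
    if gcd(a, n) != 1:
        return None
    k, power = 1, a % n
    while power != 1:
        power = (power * a) % n
        k += 1
    return k

print(order(2, 9), order(7, 9), order(8, 9))

# Every order modulo 13 should divide phi(13) = 12.
print({a: order(a, 13) for a in range(1, 13)})
```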

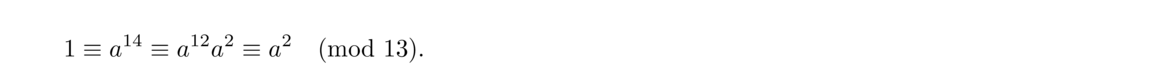

For example, take n = 13. We have φ(13) = 12. Suppose some integer a had an order that is not a divisor of 12, say order 7. Then we would have $a^7 \equiv 1 \pmod{13}$ and $a^{14} \equiv 1 \pmod{13}$. But since $a^{12} \equiv 1 \pmod{13}$ (by Euler's theorem), we would have

This theorem is a special case of Lagrange's Theorem, an important result in group theory.

Below is a table of orders of integers modulo 13. We have φ(13) = 12 and the possible orders are the divisors of 12, namely 1, 2, 3, 4, 6, and 12.

Of particular interest are 2, 6, 7, and 11, which have order 12, the highest possible order. These values are called primitive roots. In general, we have the following:

Not every integer has primitive roots. For instance, 8 doesn't have any. We have φ(8) = 4, but 1, 3, 5, and 7 all have order 1 or 2. We have the following theorem:

We won't prove this theorem here as it is a bit involved. However, you can find a complete development of the theorem in many number theory texts.

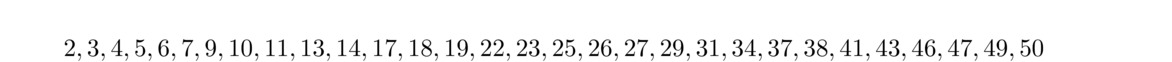

The integers between 2 and 50 that have primitive roots are shown below:

The powers of a primitive root a of n are all unique and run through all of the integers relatively prime to n. For instance, consider a = 2 and n = 13. The powers of 2 are 2, 4, 8, 3, 6, 12, 11, 9, 5, 10, 7, 1. We see that they run through all the integers relatively prime to 13. (Using the terminology of abstract algebra, we can say a is a generator of the multiplicative group of integers relatively prime to n since every such integer is a power of a. The group is thus cyclic provided n has a primitive root.)
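A brute-force search makes these facts concrete. This is only a sketch for small n ≥ 2, with function names (`units`, `powers`, `primitive_roots`) chosen for this illustration: an integer a is reported as a primitive root when its powers hit every integer relatively prime to n.

```python
from math import gcd

def units(n):
    """The integers in 1..n-1 that are relatively prime to n."""
    return [x for x in range(1, n) if gcd(x, n) == 1]

def powers(a, n):
    """List a, a**2, a**3, ... modulo n, stopping at the first 1."""
    result, power = [a % n], a % n
    while power != 1:
        power = (power * a) % n
        result.append(power)
    return result

def primitive_roots(n):
    """All a in 1..n-1 whose powers run through every unit modulo n."""
    u = units(n)
    return [a for a in u if sorted(powers(a, n)) == u]

print(powers(2, 13))
print(primitive_roots(13))
print(primitive_roots(8))
```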

Phrased another way, if a is a primitive root of n, then every integer relatively prime to n is of the form $a^k$ for some k. This gives us a way to determine the orders of other elements modulo n.

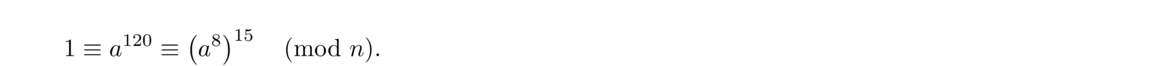

As an example, consider an integer n with φ(n) = 30 and suppose we want the order of some integer b that turns out to equal $a^8$. Since a is a primitive root, its order must be 30. That is, $a^{30} \equiv 1 \pmod{n}$, and for that matter $a^{60}$, $a^{90}$, $a^{120}$, etc. are all congruent to 1 modulo n as well. We are looking for the order of b, so we want to find a power of $a^8$ that is congruent to 1. So we go through $a^{16}$, $a^{24}$, $a^{32}$, etc. until we get to one whose exponent matches up with one of the exponents 30, 60, 90, etc.

In other words, we are looking for when multiples of 8 match up with multiples of 30. Thus we just need to find lcm(8, 30), which is 120. Then $b^{15} = (a^8)^{15} = a^{120} \equiv 1 \pmod{n}$, so the order of b is 120/8 = 15.

The theorem above actually allows us to determine how many primitive roots there are for a given integer n:

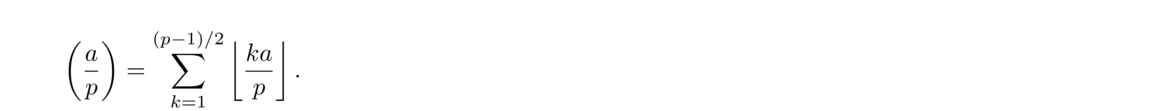

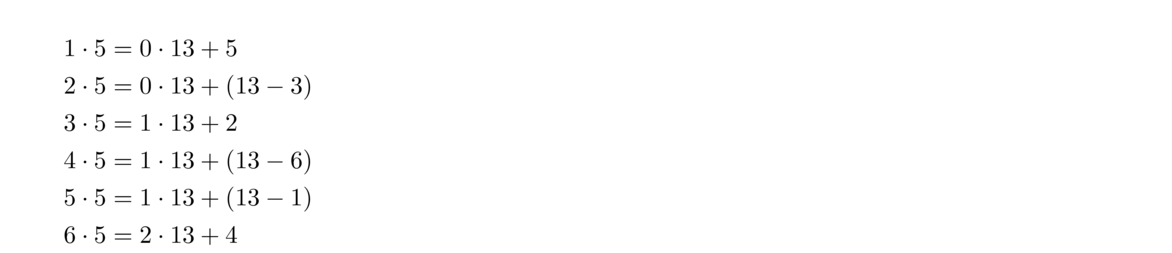

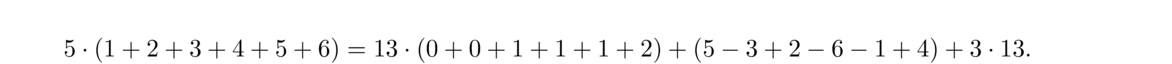

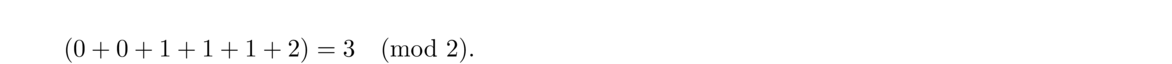

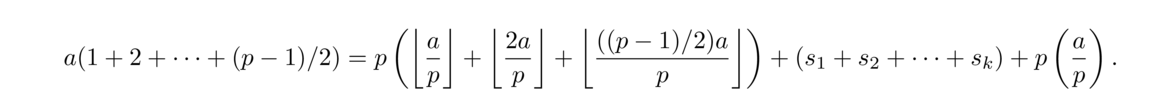

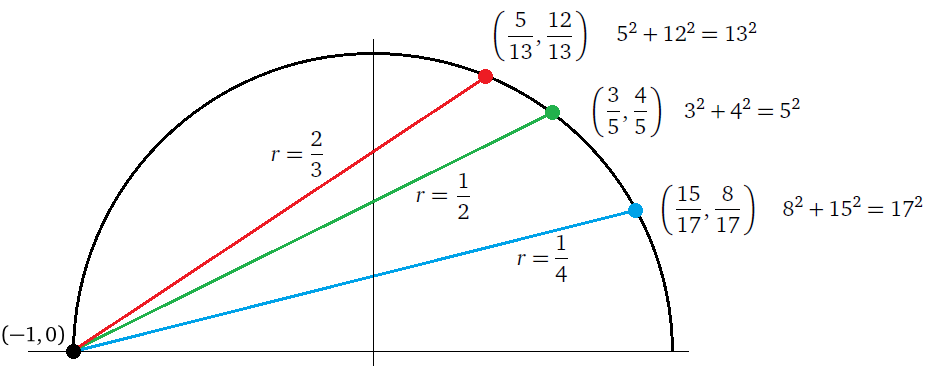

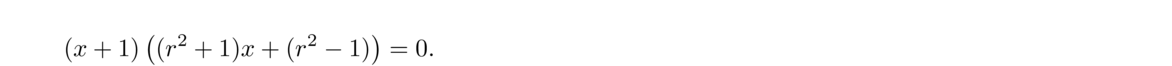

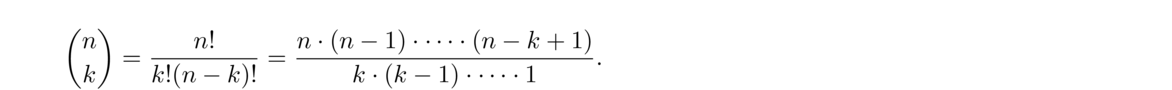

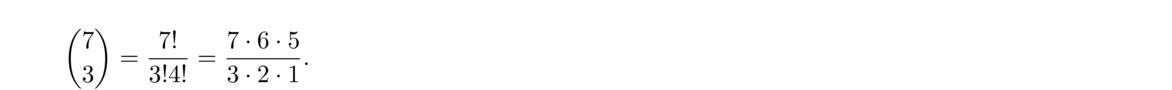

We can actually say something a little more general: