An Intuitive Introduction to Data Structures

© 2015 Brian Heinold

Licensed under a Creative Commons Attribution-Noncommercial-Share Alike 3.0 Unported License

Here is a pdf version of the book.

This book is about data structures. Data structures are ways of storing and organizing information. For example, lists are a simple data structure for storing a collection of data. Some data structures make it easy to store and maintain data in a sorted order, while others make it possible to keep track of connections between various items in a collection. Choosing the right data structure for a problem can make the difference between solving and not solving the problem. The book covers the internals of how they work and covers how to use the ones built into Java.

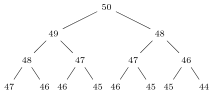

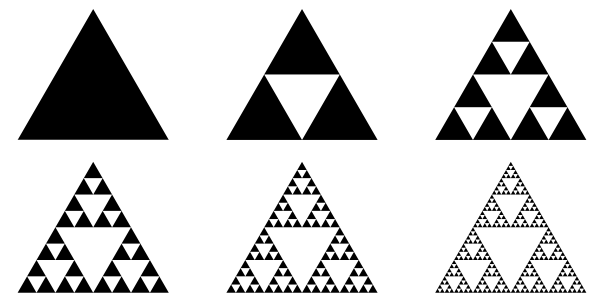

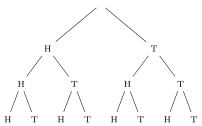

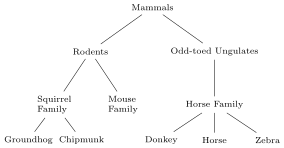

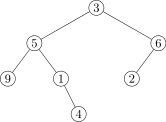

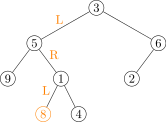

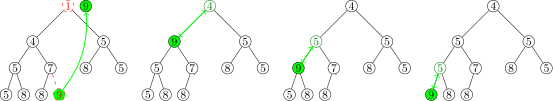

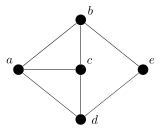

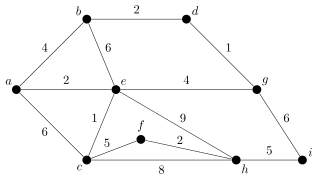

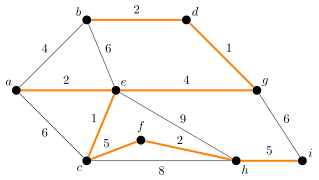

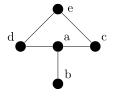

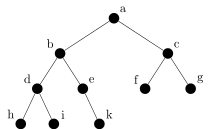

Chapter 1 covers the analysis of running times, which is important because it allows us to estimate how quickly algorithms will run. Chapter 2 covers two approaches to lists, namely dynamic arrays and linked lists. Chapter 3 covers stacks and queues, which are simple and important data structures. Chapter 4 covers recursion, which is not a data structure, but rather an important approach to solving problems. Chapter 5 is about binary trees, which are used to store hierarchical data. Chapter 6 is about two particular types of binary trees, heaps and binary search trees, which are used for storing data in sorted order. Chapter 7 covers hashing and two important data structures implemented by hashing—sets and maps. Chapter 8 covers graphs, which are a generalization of trees and are particularly important for modeling real-world objects. Chapter 9 covers sorting algorithms. Chapters 1 through 5 form the basis for the other chapters and should be done first and probably in the order given. The other chapters can be done in any order.

This book is based on the Data Structures and Algorithms classes I've taught. I used a bunch of data structures and introductory programming books as references the first time I taught the course, and while I liked parts of all the books, none of them approached things quite in the way I wanted, so I decided to write my own book.

I've tried to keep explanations short and to the point. A guiding theme of this book is that the best way to understand a data structure or algorithm is to implement it. Generally, for each data structure and algorithm I provide a succinct explanation of how it works, followed by an implementation and discussion of the issues that arise in implementing it. After that, there is some discussion of efficiency and applications. The implementations are designed with simplicity and understanding in mind. They are not designed to be used in the real world, as any real-world implementation would have tons of practical issues to consider, which would clutter things up too much. In addition, my approach to topics is a lot more intuitive than it is formal. If you are looking for a formal approach, there are many books out there that take that approach.

Please send comments, corrections, and suggestions to heinold@msmary.edu.

Running times of algorithms

Consider the problem of finding all the words that can be made from the letters of a given word. For instance, from the main word computer, one can make put, mop, rope, and compute, and many others. An algorithm to do this would be useful for programming a Scrabble computer player or just for cheating at word games.

One approach would be to systematically generate all combinations of letters from the main word and check whether each is a real word. For instance, from computer, we would start by generating co, cm, cp, etc., going all the way to computer, looking up each in a word list to see which are real words.

A different approach would be to loop through the words in the word list and check if each can be made from the letters of the main word. So we would start with aardvark, abaci, aback, etc., checking each to see if it comes from the main word.

Which one is better? We could go with whatever approach is easier to program. Sometimes, this is a good idea, but not here. There is a huge difference in performance between the first and second algorithms. The first approach chokes on large main words. On my machine, it is very slow on 10-letter main words and impossible to use on 20-letter words. On the other hand, the second approach runs in a few tenths of a second for nearly any word size.

The reason that the first approach is slow is that the number of ways to arrange the letters explodes as the number of letters increases. For example, there are 24 ways to rearrange the letters of bear, 40,320 ways to rearrange the letters of computer, and 119,750,400 ways to rearrange the letters of encyclopedia. For a 20-letter word, if all the letters are different, there are 20! (roughly 2.4 quintillion) different rearrangements. And this isn't all, as these are only rearrangements of all the letters, and we also have to consider all the two-letter, three-letter, etc. combinations of the letters. And for each and every combination of letters, we have to check if it is in the dictionary.

On the other hand, with the second approach we scan through the dictionary just once. For each word, we check to see if it can be made from the letters of the main word. And the key here is that this can be done very quickly. One way is to count the number of occurrences of each letter in the word and compare it to the number of occurrences of that letter in the main word. Even for large words, this can be done in a split second. A typical dictionary would have maybe 100,000 words, so looping through the dictionary performing a very quick operation for each word adds up to a running time on the order of a fraction of a second up to maybe a second or so, depending on your system. The algorithm will have nearly the same running time for a main word like bear as for a main word like encyclopedia.
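The letter-counting check described above might be sketched as follows. This is only an illustration: the class name `LetterCount`, the method `canMake`, and the tiny word list are made up for the example, and the code assumes every word consists only of the lowercase letters a through z.

```java
class LetterCount {
    // Returns true if word can be spelled from the letters of main, using
    // each letter of main no more often than it appears there.
    // Assumption: both strings contain only lowercase letters a-z.
    static boolean canMake(String word, String main) {
        int[] available = new int[26];
        for (int i = 0; i < main.length(); i++)
            available[main.charAt(i) - 'a']++;
        for (int i = 0; i < word.length(); i++)
            if (--available[word.charAt(i) - 'a'] < 0)
                return false;   // word needs more copies of this letter than main has
        return true;
    }

    public static void main(String[] args) {
        // A stand-in for a real word list.
        String[] wordList = {"put", "mop", "rope", "compute", "tree", "aardvark"};
        for (String w : wordList)
            if (canMake(w, "computer"))
                System.out.println(w);
    }
}
```

Each call does work proportional to the lengths of the two words and nothing more, which is why a single pass over the whole word list stays fast.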

This is why it is important to be able to analyze algorithms. We need to be able to tell which algorithms will run in a reasonable amount of time and which ones will take too long.

Analyzing algorithms

One important way to analyze an algorithm is to pick out the most important parameter(s) affecting the running time of the algorithm and find a function that describes how the running time varies with the parameter(s). This section has two examples.

Comparison of two algorithms to find the mode of an array

Consider the problem of finding the most common element (the mode) in an array of integers. The parameter of interest here is the size of the array. We are interested in how our algorithm will perform as the array size is increased. Here is one algorithm:

    public static int mode(int[] a)
    {
        int maxCount=0, mode=0;
        for (int i=0; i<a.length; i++)
        {
            // Count how many elements are equal to a[i].
            int count = 0;
            for (int j=0; j<a.length; j++)
                if (a[j]==a[i])
                    count++;
            // Remember the most common value seen so far.
            if (count>maxCount)
            {
                maxCount = count;
                mode = a[i];
            }
        }
        return mode;
    }

This code loops through the entire array, element by element, and for each element, it counts how many array elements (including the element itself) are equal to it. The algorithm keeps track of the current maximum as it loops through the array, updating it as necessary.

To analyze the algorithm, we notice that it loops through all n elements of the array, and for each of those n times through the loop, the algorithm performs another loop through the entire array. For each of those we do a comparison, for a total of $n^2$ comparisons. Note that each comparison is a fast operation that has no dependence on the size of the array. Therefore, the running time of this algorithm is on the order of $n^2$. This is a rough estimate. The actual running time is some function of the form $cn^2+d$, where c and d are constants. While knowing those constants can be useful, we are not concerned with that here. We are only concerned with the $n^2$ part of the formula. We say that this is an $O(n^2)$ algorithm. The big O stands for “order of,” and this is read as “big O of $n^2$” or just “O $n^2$.”

The $n^2$ tells us how the running time changes as the number of elements increases. For a 100-element array, $n^2$ is 10,000. For a 1000-element array, $n^2$ is 1,000,000. For a 10,000-element array, $n^2$ is 100,000,000. The key to this big O notation is that it doesn't tell us the exact running time, but instead it captures how the running time grows as the parameter varies.

Consider now a different algorithm for finding the mode: For simplicity's sake, suppose that the array contains only integers between 0 and 999. Create an array called counts that keeps track of how many zeros, ones, twos, etc. are in the array. Loop through the array once, updating the counts array each time through the loop. Then loop through the counts array to find the maximum count. Here is the code:

    public static int mode(int[] a)
    {
        // counts[v] holds how many times the value v occurs (values 0 to 999 only).
        int[] counts = new int[1000];
        for (int i=0; i<a.length; i++)
            counts[a[i]]++;
        // Find the value with the largest count.
        int maxCount=0, mode=0;
        for (int i=0; i<counts.length; i++)
            if (counts[i]>maxCount)
            {
                maxCount = counts[i];
                mode = i;
            }
        return mode;
    }

The first loop runs n times, doing a quick assignment at each step. The second loop runs 1000 times, regardless of the size of the array. So the running time of the algorithm is of the form an+b for some constants a and b. Here the growth of the function is linear instead of quadratic, $n$ instead of $n^2$. We say this is O(n). Whereas the quadratic algorithm would require on the order of an unmanageable one trillion operations for a million-element array, this linear algorithm will require on the order of one million operations.

We note something here that happens quite often. The second algorithm trades memory for speed. It runs a lot faster than the first algorithm, but it requires more memory to do so (an array of 1000 counts versus a single counting variable). It often happens that we can speed up an algorithm at the cost of using more memory.

Comparison of two searching algorithms

Let's examine another problem, that of determining whether an array contains a given element. Here is a simple search:

    public static boolean linearSearch(int[] a, int item)
    {
        for (int i=0; i<a.length; i++)
            if (a[i]==item)
                return true;
        return false;
    }

In the best-case scenario, the element we are searching for (called item) will be the first element of the array. But algorithms are usually not judged on their best-case performance. They are judged on average-case or worst-case performance. For this algorithm, the worst case occurs if the item is not in the array or is the last element in the array. In this case, the loop will run n times, where n, the parameter of interest, is the size of the array. So the worst case running time is O(n). Moreover, on average, we will have to search through about half of the elements (n/2 of them) before finding item. So the average case running time is O(n) also. Remember that we don't care about constants; we just care about the variables. So instead of O(n/2), we just say O(n) here.

Here is another algorithm for this problem, called a binary search. It runs much faster than the previous algorithm, though it does require that the array be sorted.

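    public static boolean binarySearch(int[] a, int item)
    {
        // start and end mark the part of the array still being searched.
        int start=0, end=a.length-1;
        while(end >= start)
        {
            int mid = start + ((end - start) / 2);
            if (a[mid] == item)
                return true;
            if (a[mid] > item)
                end = mid-1;     // item can only be in the first half
            else
                start = mid+1;   // item can only be in the second half
        }
        return false;
    }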

The way the algorithm works is we start by examining the element in the middle of the array and comparing it to the item. If it actually equals item, then we're done. Otherwise, if the middle element is greater than item, then we know that since the elements are in order, then item must not be in the second half of the array. So we just have to search through the first half of the array in that case. On the other hand, if the middle element is less than item, then we just have to search the second half of the array. We repeat this process on the appropriate half of the array, and repeat again as necessary until we home in on the location of the item or decide it isn't in the array.

This is like searching through an alphabetical list of names for a specific name, say Ralph. We might start by looking in the middle of the list for Ralph. If the middle element is Lewis, then we know that Ralph must be in the second half of the list, so we just look in the second half. We then look at the middle element of that list and throw out the appropriate half of that list. We keep that up as long as necessary.

Each time through the algorithm, we cut the search space in half. For instance, if we have 1000 elements to search through, after throwing away the appropriate half, we cut the search space down to 500. We then cut it in half again to 250, and then to 125, 63, 32, 16, 8, 4, 2, and 1. So in the worst case, it will take only 10 steps.

Suppose we have one million elements to search through. Repeatedly cutting things in half, we get 500,000, 250,000, 125,000, 63,000, 32,000, 16,000, 8000, 4000, 2000, 1000, …, 4, 2, 1, for a total of 20 steps. So increasing the array from 1000 to 1,000,000 elements only adds 10 steps. If we have one billion elements to search through, only 30 steps are required. With one trillion elements, only 40 steps are needed.

This is the hallmark of a logarithmic running time: multiplying the size of the search space by a certain factor adds a constant number of steps to the running time. The binary search has an O(log n) running time.
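The step counts above can be checked with a few lines of code. The sketch below is not the binary search itself; it only counts how many times a search space of a given size can be cut in half, rounding up as in the sequences above, before a single element remains. The names `HalvingSteps` and `halvings` are made up for the illustration.

```java
class HalvingSteps {
    // Counts how many times n must be cut in half (rounding up) to reach 1.
    static int halvings(long n) {
        int steps = 0;
        while (n > 1) {
            n = (n + 1) / 2;   // round up, so 125 becomes 63 rather than 62
            steps++;
        }
        return steps;
    }

    public static void main(String[] args) {
        long[] sizes = {1000L, 1_000_000L, 1_000_000_000L, 1_000_000_000_000L};
        for (long size : sizes)
            System.out.println(size + " elements: " + halvings(size) + " steps");
    }
}
```

For the four sizes in `main`, this gives 10, 20, 30, and 40 steps: every thousandfold increase in size adds the same 10 steps.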

Brief review of logarithms

Logarithms are for when we want to know what power to raise a number to in order to get another number. For example, if we want to know to what power we have to raise 2 to get 55, we can write that in equation form as $2^x = 55$. The solution is $x = \log_2(55)$, where $\log_2$ is called the logarithm base 2. In general, $x = \log_b(a)$ is the solution to $b^x = a$.

For example, $\log_2(8)$ is 3 because $2^3 = 8$. Similarly, $\log_2(16)$ is 4 because $2^4 = 16$. And we have that $\log_2(14)$ is about 3.807 (using a calculator). We can use bases other than 2. For example, $\log_5(25) = 2$ because $5^2 = 25$, and $\log_3(1/27) = -3$ because $3^{-3} = 1/27$. In computer science, the most important base is 2. In calculus, the most important base is $e = 2.718\ldots$, which gives the natural logarithm. Instead of $\log_e(x)$ people usually write $\ln(x)$.

One particularly useful rule is that for any base b, we have $\log_b(x) = \ln(x)/\ln(b)$. So for example, $\log_2(14) = \ln(14)/\ln(2)$. This is useful because most calculators and many programming languages have $\ln(x)$ built in but not $\log_2(x)$.

With the binary search, we cut our search space in half at each step. This can keep going until the search space is reduced to only one item. So if we start with 1000 items, we cut things in half 10 times to get down to 1. We can get this by computing $\log_2(1000) \approx 9.97$ and rounding up to get 10. This is because asking how many times we have to cut things in half starting at 1000 to get to 1 is the same as asking how many times we have to double things starting at 1 to get 1000, which is the same as asking what power of 2 1000 is. So this is where the logarithm comes from in the binary search.
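In Java, for instance, `Math.log` computes the natural logarithm, so the change-of-base rule gives a base-2 logarithm in one line. The class and method names below are made up for this sketch.

```java
class Log2 {
    // Base-2 logarithm via the change-of-base rule: log2(x) = ln(x) / ln(2).
    static double log2(double x) {
        return Math.log(x) / Math.log(2);
    }

    public static void main(String[] args) {
        System.out.println(log2(8));      // very close to 3
        System.out.println(log2(14));     // about 3.807
        System.out.println(log2(1000));   // just under 10
    }
}
```

Because this is floating-point arithmetic, results for exact powers of 2 may differ from the whole number by a tiny rounding error.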

Big O notation

Say we fix a parameter n (like array size or string length) that is important to the running time of an algorithm. When we say that the running time of an algorithm is $O(f(n))$ (where $f(n)$ is a function like $n^2$ or $\log n$), we mean that the running time grows roughly like the function $f(n)$ as n gets larger and larger.

At this point, it's most important to have a good intuitive understanding of what big O notation means, but it is also helpful to have a formal definition. The reason for this is to clear up any ambiguities in case our intuition fails us. Formally, we say that a function $g(n)$ is $O(f(n))$ if there exist a real number $M$ and an integer $N$ such that $g(n) \le M f(n)$ for all $n \ge N$.

Put into plainer (but slightly more ambiguous) English, when we say that an algorithm's running time is O(f(n)), we are saying that it takes no more time than a constant multiple of f(n) for large enough values of n. Roughly speaking, this means that a function which is O(f(n)) is of the following form:

cf(n) + [terms that are small compared to f(n) when n is sufficiently large].

For example, $4n^2+3n+2$ is $O(n^2)$. For large values of n, the dominant term is $4n^2$, a constant times $n^2$. For large n, the other terms, $3n$ and $2$, are small compared to $n^2$. Some other functions that are $O(n^2)$ include $0.5n^2$, $n^2+2n+1/n$, and $3n^2+1000$.

As another example, $10 \cdot 2^n + n^5 + n^2$ is $O(2^n)$. This is because the function is of the form a constant times $2^n$ plus some terms ($n^5$ and $n^2$) that are small compared to $2^n$ when n is sufficiently large.
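To tie the first of these functions back to the formal definition, here is one possible choice of the constants M and N. The choice is not unique; this one is picked only because the arithmetic is easy. For every $n \ge 1$ we have $3n \le 3n^2$ and $2 \le 2n^2$, so:

```latex
4n^2 + 3n + 2 \;\le\; 4n^2 + 3n^2 + 2n^2 \;=\; 9n^2 \qquad \text{for all } n \ge 1
```

So the definition is satisfied with $M = 9$ and $N = 1$.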

A good rule of thumb for writing a function in big O notation is to drop all the terms except the most dominant one and to ignore any constants.

Examples

In this section we will see how to determine big O running times of segments of code. The basic rules are below. Assume the parameter of interest is n.

- If something has no dependence on n, then it runs in O(1) time.

- A loop that runs n times contributes a factor of n to the running time.

- If loops are nested, then multiply their running times.

- If one loop follows another, then add their running times.

- Logarithms tend to come in when the loop variable is being multiplied or divided by some factor.

We are only looking at the most important parts. For instance if we have a loop followed by a couple of simple assignment statements, it's the loop that contributes the most to the running time, and the assignment statements are negligible in comparison. However, be careful because sometimes a simple statement may contain a call to a function and that function may take a significant amount of time.

Example 1

Here is code that sums up the entries in an array:

    public static int sum(int[] a)
    {
        int total = 0;
        for (int i=0; i<a.length; i++)
            total += a[i];
        return total;
    }

This is an O(n) algorithm, where n is the length of the array. The total=0 line and the return line contribute a constant amount of time, while the loop runs n times with a constant-time operation happening inside it. The exact running time would be something like cn+d, with the d part of the running time coming from the constant amount of time it takes to create and initialize the variable total and return the result. The c part comes from the constant amount of time it takes to update the total, and as that operation is repeated n times, we get the cn term. Keeping only the dominant term, cn, and ignoring the constant, we see that, overall, this is an O(n) algorithm.

Example 2

Here is some code involving nested for loops:

    public static void func(int[] a)
    {
        // Print the sum of every ordered pair of elements.
        for (int i=0; i<a.length; i++)
            for (int j=0; j<a.length; j++)
                System.out.println(a[i]+a[j]);
    }

The outer loop runs n times and the inner loop runs n times for each run of the outer loop, for a total of $n \cdot n = n^2$ times through the loop. The print statement takes a constant amount of time. So this is an $O(n^2)$ algorithm.

Example 3

The following code consists of two unnested for loops.

    int count=0, count2=0;
    for (int i=0; i<a.length; i++)
        count++;
    for (int i=0; i<a.length; i++)
        count2+=2;

This is an O(n) algorithm. We are summing the running times of two loops that each run in O(n) time. It's a little like saying n + n, which is 2n, and we ignore constants.

Example 4

Consider the following code:

    int c = 0;
    int stop = 100;
    // Always reads exactly 100 entries (assumes a has at least 100 elements).
    for (int i=0; i<stop; i++)
        c+=a[i];

The running time does not depend on the length of the array a. The code will take essentially the same amount of time, regardless of the size of a. This is therefore an O(1) algorithm. The 1 stands for the constant function f(n)=1.

Example 5

Here is a short code segment that doesn't do anything particularly interesting.

    public static int func(int n)
    {
        int sum=0;
        while (n>1)
        {
            sum += n;
            n /= 2;
        }
        return sum;
    }

This is an O(log n) algorithm. We notice that at each step n is cut in half and the algorithm terminates when n=1. Nothing else of consequence happens inside the loop, so the number of times the loop runs is determined by how many times we can cut n in half before we get to 1, which is log n times.

Example 6

Here is a related example:

    public static int func(int n)
    {
        int sum=0;   // declared outside the loop so that it can be returned
        for (int i=0; i<n; i++)
        {
            sum=0;
            int x=n;
            while (x>1)
            {
                sum += x;
                x /= 2;
            }
        }
        return sum;
    }

This example contains, more or less, the same code as the previous example wrapped inside of a for loop that runs n times. So each of those n times through the loop, the inside O(log n) code is run, for a total running time given by O(n log n).

Example 7

Below is a simple sorting algorithm that is a variation of Selection Sort.

    public static void sort(int[] a)
    {
        for (int i=0; i<a.length-1; i++)
        {
            for (int j=i; j<a.length; j++)
            {
                // Swap so that a[i] holds the smallest value seen so far.
                if (a[i]>a[j])
                {
                    int hold = a[i];
                    a[i] = a[j];
                    a[j] = hold;
                }
            }
        }
    }

This is an $O(n^2)$ algorithm. The outer loop runs n-1 times, where n is the size of the array. The inner loop varies in how many times it runs, running n times when i is 0, n-1 times when i is 1, n-2 times when i is 2, etc., down to 2 times when i is n-2. On average it runs about n/2 times. So both loops are O(n) loops, and since we have nested loops, we multiply to get $O(n^2)$.
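For readers who want the exact count rather than the rough argument, the total can be worked out directly. In the code above, the inner loop body runs $n-i$ times for each value of i from 0 to $n-2$, so the number of comparisons is:

```latex
\sum_{i=0}^{n-2} (n-i) \;=\; n + (n-1) + \cdots + 2 \;=\; \frac{n(n+1)}{2} - 1 \;=\; \tfrac{1}{2}n^2 + \tfrac{1}{2}n - 1
```

Dropping the lower-order terms and the constant 1/2 leaves $n^2$, which agrees with the $O(n^2)$ estimate.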

A catalog of common running times

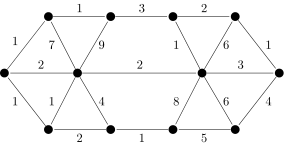

In this section we look at some of the common functions that are used with big O notation. To get a good feel for the functions, it helps to compare them side by side. The table below compares the values of several common functions for varying values of n.

| n | 1 | 10 | 100 | 1000 | 10,000 |
|---|---|---|---|---|---|
| $1$ | 1 | 1 | 1 | 1 | 1 |
| $\log n$ | 0 | 3.3 | 6.6 | 10.0 | 13.3 |
| $n$ | 1 | 10 | 100 | 1000 | 10,000 |
| $n \log n$ | 0 | 33 | 664 | 9966 | 132,877 |
| $n^2$ | 1 | 100 | 10,000 | 1,000,000 | 100,000,000 |
| $n^3$ | 1 | 1000 | 1,000,000 | 1,000,000,000 | $10^{12}$ |
| $2^n$ | 2 | 1024 | $1.3 \times 10^{30}$ | $1.1 \times 10^{301}$ | $2.0 \times 10^{3010}$ |
| $n!$ | 1 | 3,628,800 | $9.3 \times 10^{157}$ | $4.0 \times 10^{2567}$ | $2.8 \times 10^{35659}$ |

Constant time — O(1)

The first entry in the table above corresponds to the constant function f(n)=1. This is a function whose value is always 1, no matter what n is.

| n | 1 | 10 | 100 | 1000 | 10,000 |
|---|---|---|---|---|---|
| $1$ | 1 | 1 | 1 | 1 | 1 |

Functions that are O(1) are of the form c+[terms small compared to c] for some constant c. In practice, O(1) algorithms are usually ones whose running time does not depend on the size of the parameter. For example, consider a function that returns the minimum of an array of integers. If the array is sorted, then the function simply has to return the first element of the array. This operation takes practically the same amount of time whether the array has 10 elements or 10 million elements.

Logarithmic time — O(log n)

In the table below, and throughout the book, log refers to $\log_2$. In other books you may see lg for $\log_2$.

| n | 1 | 10 | 100 | 1000 | 10,000 |
|---|---|---|---|---|---|
| $\log n$ | 0 | 3.3 | 6.6 | 10 | 13.3 |

The key here is that a multiplicative increase in n corresponds to only a constant increase in the running time. For example, suppose in the table above the values of n are the size of an array and the table entries are running times of an algorithm in seconds. We see then that if we make the array 10 times as large, the running time will increase by 3.3 seconds. Multiplying the array size by a factor of 1000 increases the running time by 10 seconds, as in the table below:

| n | $10^3$ | $10^6$ | $10^9$ | $10^{12}$ | $10^{15}$ |
|---|---|---|---|---|---|
| $\log n$ | 10 | 20 | 30 | 40 | 50 |

We see that even with a ridiculous array size of 1 quadrillion elements (over 1000 terabytes worth of memory), the running time of a logarithmic algorithm will only increase by a factor of 5 over a 1000-element array, from 10 seconds to 50 seconds.

We have seen already that the binary search runs in logarithmic time. The key to that is that we are constantly cutting the search space in half. In general, when we are cutting the amount of work in half (or by some other percentage) at each step, that is where logarithms come in.

Linear time — O(n)

Linear time is one of the easier ones to grasp. It is what we are most familiar with in everyday life.

| n | 1 | 10 | 100 | 1000 | 10,000 |
|---|---|---|---|---|---|
| $n$ | 1 | 10 | 100 | 1000 | 10,000 |

Linear growth means that a constant change in the input results in a constant change in the output. For instance, if you get paid a fixed amount per hour, the amount of money you earn grows linearly with the number of hours you work—work twice as long, earn twice as much; work three times as long, earn three times as much; etc.

Some example linear algorithms include summing up the elements in an array and counting the number of occurrences of an element in an array.

Loglinear time — O(n log n)

Loglinear (or linearithmic) growth is a combination of linear and logarithmic growth. It is like linear growth of the form cn+d except that the constant c is not really constant, but slowly growing. For comparison, the table below compares linear and loglinear growth.

| n | 1 | 10 | 100 | 1000 | 10,000 |
|---|---|---|---|---|---|
| $3.3n$ | 3.3 | 33 | 330 | 3300 | 33,000 |
| $n \log n$ | 0 | 33 | 664 | 9966 | 132,877 |

Loglinear growth often occurs as a combination of a linear and logarithmic algorithm. For instance, say we want to find all the words in a word list that are real words when written backwards. The linear part of the algorithm is that we have to loop once through the entire list. The logarithmic part of the algorithm is that for each word, we perform a binary search (assuming the list is alphabetized) to see if the backwards word is in the list. So overall, we perform n searches that take O(log n) time, giving us an O(n log n) algorithm.
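The backwards-words idea might be sketched as below. The class name, the method name, and the six-word list are invented for the illustration, and the code leans on the library method `Arrays.binarySearch` rather than a hand-written search. It assumes the array is already sorted in Java's natural string order, and it treats comparing two words as a constant-time step.

```java
import java.util.Arrays;

class BackwardsWords {
    // Counts the words whose reversal also appears in the sorted array.
    static int countReversible(String[] sortedWords) {
        int count = 0;
        for (String w : sortedWords) {   // linear part: one pass over all n words
            String reversed = new StringBuilder(w).reverse().toString();
            // logarithmic part: binary search for the reversed word
            if (Arrays.binarySearch(sortedWords, reversed) >= 0)
                count++;
        }
        return count;
    }

    public static void main(String[] args) {
        String[] words = {"dog", "evil", "god", "live", "noon", "tree"};
        System.out.println(countReversible(words));
    }
}
```

With this list, every word except tree has its reversal in the list, so the count is 5. Note that a palindrome like noon counts because it is its own reversal.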

Many of the sorting algorithms we will see in the chapter on sorting are loglinear.

Quadratic time — $O(n^2)$

Quadratic growth is a little less familiar in everyday life than linear time, but it shows up a lot in practice.

| n | 1 | 10 | 100 | 1000 | 10,000 |
|---|---|---|---|---|---|
| $n^2$ | 1 | 100 | 10,000 | 1,000,000 | 100,000,000 |

With quadratic growth, doubling the input size quadruples the running time. Tripling the input size corresponds to a ninefold increase in running time. As shown in the table, increasing the input size by a factor of ten corresponds to increasing the running time by a factor of 100.

One place quadratic growth shows up is in operations on two-dimensional arrays, as an $n \times n$ array has $n^2$ elements. Another example is removing duplicates from an unsorted list. To do this, we loop over all the elements and, for each element, loop over the list to see if it is in there. Since the list is unsorted, the binary search can't be used, so we must use a linear search, giving us a running time on the order of $n \cdot n = n^2$.

There are a lot of cases, like with the mode algorithms from earlier in the chapter, where the first algorithm you think of may be $O(n^2)$, but with some refinement or a more clever approach, things can be improved to $O(n \log n)$ or $O(n)$ (or better).

Polynomial time in general — $O(n^p)$

Linear and quadratic growth are two examples of polynomial growth, growth of the form $O(n^p)$, where p can be any positive power. Linear growth corresponds to p=1 and quadratic to p=2. There are many common algorithms where p is between 1 and 2. Values larger than 2 also occur fairly often. For example, the ordinary algorithm for multiplying two matrices is $O(n^3)$, though there are other algorithms that run as low as about $O(n^{2.37})$.

Exponential time — $O(2^n)$

With exponential time, a constant increase in n translates to a multiplicative increase in running time. For example, consider $2^n$:

| n | 1 | 2 | 3 | 4 | 5 |
|---|---|---|---|---|---|
| $2^n$ | 2 | 4 | 8 | 16 | 32 |

We see that each time n increases by 1, the running time doubles. Exponential growth is far faster than polynomial growth. While a polynomial may start out faster than an exponential, an exponential will always eventually overtake a polynomial. The table below shows just how much faster exponential growth can be than polynomial growth.

| n | 1 | 10 | 100 | 1000 | 10,000 |
|---|---|---|---|---|---|
| $n^3$ | 1 | 1000 | 1,000,000 | 1,000,000,000 | $10^{12}$ |
| $2^n$ | 2 | 1024 | $1.3 \times 10^{30}$ | $1.1 \times 10^{301}$ | $2.0 \times 10^{3010}$ |

Already for n=100, the running time (if measured in seconds) is so large as to be totally impossible to ever compute.

There is an old riddle that demonstrates exponential growth. It boils down to this: would you rather be paid $1000 every day for a month or be given a penny on the first day of the month that is doubled to 2 cents the second day, doubled again to 4 cents the third day, and so on, doubling every day for the whole month? The first way translates to $30,000 at the end of the month. The second way translates to $2^{29}/100$ dollars, i.e., $5,368,709.12. If we were to keep doubling for another 30 days, it would amount to over 5 quadrillion dollars.

Exponential growth shows up in many places. For example, a lot of times computing something by a brute force check of all the possibilities will lead to an exponential algorithm. As another example, Moore's law, which has proven remarkably accurate, says that the number of transistors that can fit on a chip doubles every 18 months. That is, a constant increase in time (18 months) translates to a multiplicative increase of two in the number of transistors. This continuous doubling has meant that the number of transistors that can fit on a chip has increased from a few thousand in 1970 to over a billion by 2010.

Exponential growth is the opposite of logarithmic growth (exponentials and logs are inverse functions of each other). Whereas for logarithmic growth (say $\log_2 n$) an increase of ten times in n translates to only a constant increase of 3.3 in the running time, for exponential growth (say $2^n$) a constant increase of 3.3 in n translates to a ten times increase in running time.

Factorial time — O(n!)

The factorial n! is defined as n · (n-1) · (n-2) … 2· 1. For example, 5!=5 · 4 · 3 · 2 · 1 = 120. With exponential growth the running time usually grows so fast as to make things intractable for all but the smallest values of n. Factorial growth is even worse. See the table below for a comparison.

| n | 1 | 10 | 100 | 1000 | 10,000 |
|---|---|---|---|---|---|
| $n^3$ | 1 | 1000 | 1,000,000 | 1,000,000,000 | $10^{12}$ |
| $2^n$ | 2 | 1024 | $1.3 \times 10^{30}$ | $1.1 \times 10^{301}$ | $2.0 \times 10^{3010}$ |
| $n!$ | 1 | 3,628,800 | $9.3 \times 10^{157}$ | $4.0 \times 10^{2567}$ | $2.8 \times 10^{35659}$ |

Just like with exponential growth, factorial growth often shows up when checking all the possibilities in a brute force search, especially when order matters. There are n! rearrangements (permutations) of a set of size n. One famous problem that requires n! steps is a brute force search in the traveling salesman problem. In that problem, a salesman can travel from any city to any other city, and each route has its own cost. The goal is to find the cheapest route that visits all the cities exactly once. If there are n cities, then there are n! possible routes (ways to rearrange the cities). A very simple algorithm would be to check all possible routes, and that is an O(n!) algorithm.
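A brute force search of this kind could look something like the sketch below. The class name, method names, and the four-city cost table are all invented for the illustration. The code tries every ordering of the cities using recursion, so it is only usable for very small n.

```java
class BruteForceTsp {
    // Returns the cost of the cheapest route that visits every city exactly
    // once, found by trying all n! orderings. cost[i][j] is the cost of
    // traveling from city i to city j.
    static int cheapest(int[][] cost) {
        return search(cost, new boolean[cost.length], -1, 0, 0);
    }

    // last is the most recently visited city (-1 if none yet), placed is how
    // many cities are on the route so far, and soFar is the route's cost so far.
    private static int search(int[][] cost, boolean[] used, int last, int placed, int soFar) {
        if (placed == cost.length)
            return soFar;                 // a complete ordering of the cities
        int best = Integer.MAX_VALUE;
        for (int city = 0; city < cost.length; city++) {
            if (used[city])
                continue;
            used[city] = true;            // try this city next
            int step = (last == -1) ? 0 : cost[last][city];
            best = Math.min(best, search(cost, used, city, placed + 1, soFar + step));
            used[city] = false;           // undo the choice and try another city
        }
        return best;
    }

    public static void main(String[] args) {
        int[][] cost = {
            {0, 2, 9, 10},
            {2, 0, 6, 4},
            {9, 6, 0, 3},
            {10, 4, 3, 0}
        };
        System.out.println(cheapest(cost));
    }
}
```

For the sample table, the cheapest route is city 0, then 1, then 3, then 2, with a total cost of 2 + 4 + 3 = 9. Adding even a handful more cities makes the number of orderings, and hence the running time, blow up.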

Other types of growth

These are just a sampling of the most common types of growth. There are infinitely many other types of growth. For example, beyond $n!$ one can have $n^n$ or $n^{n!}$ or $n^{n^n}$.

A few notes about big O notation

Importance of constants

Generally, an O(n) algorithm is preferable to an O(n2) algorithm. But sometimes the O(n2) algorithm can actually run faster in practice. Consider algorithm A that has an exact running time of 10000n seconds and algorithm B that has an exact running time of .001n2 seconds. Then A is O(n) and B is O(n2). Suppose for whatever problem these algorithms are used to solve, n tends to be around 100. Then algorithm A will take around 1,000,000 seconds to run, while algorithm B will take 10 seconds. So the quadratic algorithm is clearly better here. However, once n gets sufficiently large (in this case around 10 million), then algorithm A begins to be better.
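The crossover point can be found by setting the two running times equal and solving for n:

```latex
10000\,n = 0.001\,n^2 \quad\Longrightarrow\quad n = \frac{10000}{0.001} = 10^7
```

Below 10 million elements algorithm B is faster, and above it algorithm A is faster.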

An O(n) algorithm will always eventually have a faster running time than an O(n^2) algorithm, but it might take a while before n is large enough for that to happen. And it might happen that that value of n is larger than anything that might come up in real life. Big O notation is sometimes called the asymptotic running time, in that it's sort of what you get as you let n tend toward infinity, like you would to find an asymptote.

Big O notation for memory estimation

We have described how to use big O notation in estimating running times. Big O notation is also used in estimating memory usage. For instance, if we say a sorting algorithm uses O(n) extra space, where n is the size of the array being sorted, then we are saying that the algorithm needs an amount of space roughly proportional to the size of the array. For example, the algorithm may need to make one or more copies of the array. On the other hand, if we say the algorithm uses O(1) extra space, then we are saying it needs a constant amount of extra memory. In other words, the amount of extra memory (maybe a few integer variables) does not depend on the size of the array.
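As an illustration of the difference between O(n) and O(1) extra space, here are two ways to reverse an array. This is a sketch with made-up names (ReverseDemo, reversedCopy, reverseInPlace); reversing is used here only because it is simpler to show than sorting.

```java
// Hypothetical sketch contrasting O(n) and O(1) extra space.
class ReverseDemo {
    // O(n) extra space: builds a second array the same size as the input.
    static int[] reversedCopy(int[] a) {
        int[] result = new int[a.length];
        for (int i = 0; i < a.length; i++)
            result[i] = a[a.length - 1 - i];
        return result;
    }

    // O(1) extra space: swaps elements in place using only a few int variables.
    static void reverseInPlace(int[] a) {
        for (int i = 0, j = a.length - 1; i < j; i++, j--) {
            int temp = a[i];
            a[i] = a[j];
            a[j] = temp;
        }
    }
}
```

The first method needs twice as much extra memory if the array is twice as long, while the second needs the same three variables no matter how long the array is.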

Analyzing the big O growth of an algorithm is not the only or best way of analyzing the running time of an algorithm. It just gives a picture of how the running time will grow as the parameter n grows.

Multiple parameters

Sometimes there may be more than one parameter of interest. For instance, we might say that the running time of a string searching algorithm is O(n+k), where n is the size of the string and k is the size of the substring being searched for.

Best, worst, and average case

Usually in this book we will be interested in the worst-case analysis. That is, when looking at an algorithm's running time, we are interested in the longest it could possibly take. For instance, when searching linearly through an array for something, the worst case would be if the thing being searched for is at the end of the array or is not there at all. People also look at the best case and the average case. The best case, though, is often not that interesting as it might not come up that often in practice. The average case is probably the most useful, but it can be tricky to determine. Often (but not always) the average and worst case will end up having the same big O. For instance, with the linear search, in the worst case you have to look through all n elements and in the average case you have to look through about half of them, n/2 elements in total. Since big O ignores constant factors like 1/2, both have an O(n) running time.
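To see the best and worst cases of a linear search in action, we can count how many elements get examined. This is a small sketch; the class name LinearSearchDemo and the method comparisons are made up for the example.

```java
// Hypothetical sketch that counts the work done by a linear search.
class LinearSearchDemo {
    // Returns how many elements are examined while searching for target.
    static int comparisons(int[] a, int target) {
        int count = 0;
        for (int value : a) {
            count++;
            if (value == target)
                break;          // found it, so stop looking
        }
        return count;
    }
}
```

For the array [5, 31, 19, 27, 16], searching for 5 examines one element (the best case), while searching for 16 or for a value that is not present examines all five (the worst case).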

Some technicalities

Note that by the formal definition, because of the ≤, a function with running time n or log n or 1 is technically O(n^2). This may seem a little odd. There is another notation, Θ(f(n)), that captures more of a notion of equality. Formally, we say that a function g(n) is Θ(f(n)) if there exist positive real numbers m and M and an integer N such that m·f(n) ≤ g(n) ≤ M·f(n) for all n ≥ N. With this definition, only functions whose dominant term is n^2 are Θ(n^2). So if we say something is Θ(n^2), we are saying its running time behaves essentially like n^2, whereas if we say something is O(n^2), we are saying it behaves no worse than n^2, which means it could be better (like n or log n).
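As a quick worked example of the definition (the particular function is chosen here just for illustration), take g(n) = 3n^2 + 5n and f(n) = n^2. Since 5n ≤ 5n^2 whenever n ≥ 1, we get

```latex
\[
3n^2 \;\le\; 3n^2 + 5n \;\le\; 3n^2 + 5n^2 \;=\; 8n^2 \qquad \text{for all } n \ge 1,
\]
```

so the definition is satisfied with m = 3, M = 8, and N = 1, and g(n) is Θ(n^2). On the other hand, g(n) = n is not Θ(n^2): the lower bound would require m·n^2 ≤ n, that is, n ≤ 1/m, which fails once n is large enough.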

The big theta notation is closer to the intuitive idea we built up earlier, and probably should be used, but often programmers, mathematicians, and computer scientists will “abuse notation” and use big O when technically they really should be using big theta.

Exercises

- Write the following using big O notation:

  - n^2+2n+3

  - n+log n

  - 3+5n^2

  - 100 · 2^n + n!/5

- Give the running times of the following segments of code using big O notation in terms of n = a.length.

  -
        int sum = 0;
        for (int i=0; i<a.length; i++) {
            for (int j=0; j<a.length; j+=2)
                sum += a[i]*j;
        }

  -
        int sum = 0;
        for (int i=0; i<a.length; i++) {
            for (int j=0; j<100; j+=2)
                sum += a[i]*j;
        }

  -
        int sum = 0;
        System.out.println((a[0]+a[a.length-1])/2.0);

  -
        int sum = 0;
        for (int i=0; i<a.length; i++) {
            for (int j=0; j<a.length; j++) {
                for (int k=0; k<a.length; k++)
                    sum += a[i]*j;
            }
        }

  -
        int i = a.length-1;
        while (i>=1) {
            System.out.println(a[i]);
            i /= 3;
        }

  -
        for (int i=0; i<1000; i++)
            System.out.println(Math.pow(a[0], i));

  -
        int i = a.length-1;
        while (i >= 1) {
            a[i] = 0;
            i /= 3;
        }
        for (int j=0; j<a.length; j++)
            System.out.println(a[j]);

  -
        int i = a.length-1;
        while (i>=1) {
            for (int j=0; j<a.length; j++)
                a[j]+=i;
            i /= 3;
        }

- Give the running times of the following methods using big O notation in terms of n.

  -
        public int f(int n) {
            int count = 0;
            for (int i=0; i<n; i++) {
                for (int j=i; j<n; j++) {
                    for (int k=j; k<n; k++) {
                        if (i*i + j*j == k*k)
                            count++;
                    }
                }
            }
            return count;
        }

  -
        public int f(int n) {
            int i=0, count=0;
            while (i<100 && n%5!=0) {
                i++;
                count += n;
            }
            return count;
        }

  -
        public int f(int n) {
            int value = n, count=0;
            while (value > 1) {
                value = value / 2;
                count++;
            }
            return count;
        }

  -
        public int f(int n) {
            int count=0;
            for (int i=0; i<n; i++) {
                int value = n;
                while (value > 1) {
                    value = value / 2;
                    count++;
                }
            }
            return count;
        }

  -
        public int f(int n) {
            int count=0;
            for (int i=0; i<n; i++)
                count++;
            int value = n;
            while (value > 1) {
                value = value / 2;
                count++;
            }
            return count;
        }

- Show that log_b n is O(log_2 n) for any base b > 1. This means we don't have to worry about naming a particular base when we say an algorithm runs in O(log n) time.

Lists

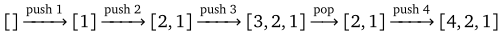

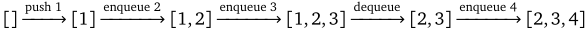

In computer science, a list is a collection of objects, such as [5, 31, 19, 27, 16], where order matters and there may be repeated elements. Each element is referred to by its index in the list. By convention, indices start at 0, so that for the list above, 5 is at index 0, 31 is at index 1, etc.

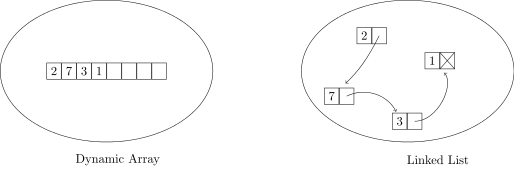

We will consider mutable lists, lists that allow for operations like insertion and deletion of elements. There are two common approaches to implementing lists: dynamic arrays and linked lists. The former uses arrays to represent a list. The latter uses an approach of nodes and links. Each has its benefits and drawbacks, as we shall see.

In the next two sections we will implement lists each way. Our lists will have the following methods:

| Method | Description |
|---|---|
| add(value) | adds a new element value to the end of the list |
| contains(value) | returns whether value is in the list |
| delete(index) | deletes the element at index |
| get(index) | returns the value at index |
| insert(value, index) | adds a new element value at index |
| isEmpty() | returns whether the list is empty |
| set(index, value) | sets the value at index to value |
| size() | returns how many elements are in the list |
| toString() | returns a printable string representing the list |

There are lots of other methods that we can give our lists. See the exercises at the end of the chapter.

Dynamic arrays

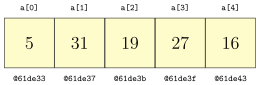

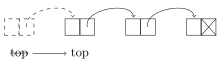

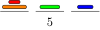

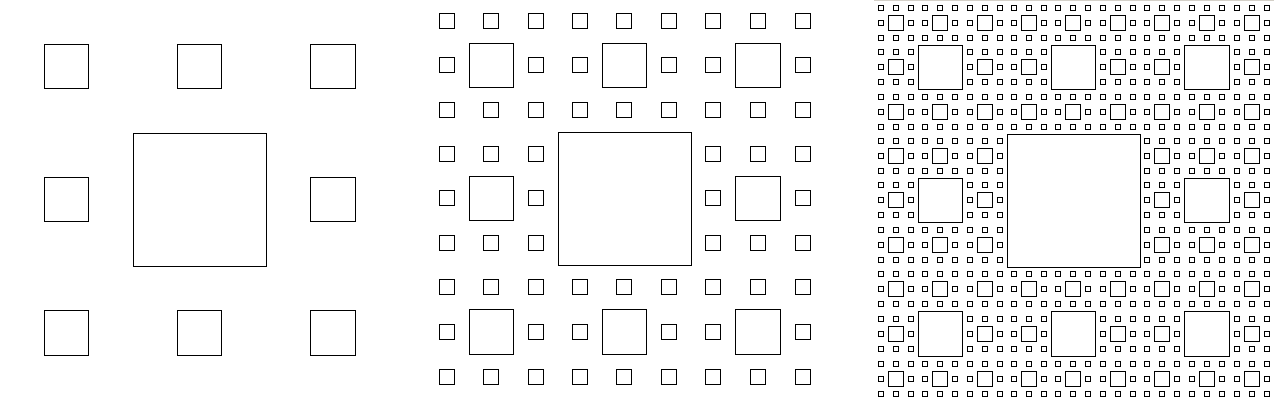

An array is stored in a contiguous block of memory like in the figure below.

In this case, each array element is a 4-byte integer. To get the element at index 3 the compiler knows that it must be 3 × 4 = 12 bytes past the start of the array, which would be location @61de3f. Regardless of the size of the array, a similar quick computation can be used to get the value at any index. This means arrays are fast at random access, accessing a particular element anywhere in the array.

But this contiguous arrangement makes it difficult to insert and delete array elements. Suppose, for example, we want to insert the value 99 at index 1 so that the new array is [5, 99, 31, 19, 27, 16]. To make room, we have to shift most of the elements one slot to the right. This can take a while if the array is large. Moreover, how do we know we have room for another element? There is a chance that the space immediately past the end of the array is being used for something else. If that is the case, then we would have to move the array somewhere else in memory.

One way to deal with running out of room in the array when adding elements is to just make the array really large. The problem with this is how large is large enough? And we can't go around making all our arrays have millions of elements because we would be wasting a lot of memory.

Instead, what is typically done is whenever more space is needed we increase the capacity of the array by a constant factor (say by doubling it), create a new array of that size, and then copy the contents from the old array over into the new one. By doubling the capacity, we ensure that we will only periodically have to incur the expensive operation of creating a new array and moving the contents of the old one over. This type of data structure is called a dynamic array.
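To get a feel for why growing by a constant factor helps, the sketch below counts how many element copies are made while adding n items one at a time. It does not store any data; it only does the bookkeeping. The class name GrowthDemo and the method copies are made up for this illustration, and the initial capacity is assumed to be at least 1.

```java
// Hypothetical sketch: count the copying work done as a dynamic array grows.
class GrowthDemo {
    // Returns the total number of element copies made while adding n items
    // to an array that starts at initialCapacity (assumed to be at least 1).
    // If doubling is true the capacity doubles when full; otherwise it grows by 1.
    static long copies(int n, int initialCapacity, boolean doubling) {
        long total = 0;
        int capacity = initialCapacity;
        for (int size = 0; size < n; size++) {
            if (size == capacity) {
                total += size;   // every existing element moves to the new array
                capacity = doubling ? capacity * 2 : capacity + 1;
            }
        }
        return total;
    }
}
```

Starting from a capacity of 1 and adding 1000 items, doubling makes 1023 copies in total, while growing by one slot at a time makes 499,500. Doubling keeps the total copying work roughly proportional to n.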

Implementing a dynamic array

Our implementation will have three class variables: an array a where we store all the elements, a variable capacity that is the capacity of the array, and a variable numElements that keeps track of how many elements are in the list.

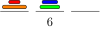

For instance, consider the list [77, 24, 11]. It has three elements, so numElements is 3. However, the capacity of the list will usually be larger so that it has room for additional elements. In the figure below, we show the array with a capacity of 8. We can add five more elements before we have to increase the capacity.

The constructor

The simple constructor below sets the list to have a capacity of 100 elements and sets numElements to 0. This creates an empty list.

public AList()

{

this.capacity = 100;

a = new int[capacity];

numElements = 0;

}

Getting and setting elements

Getting and setting elements of the list is straightforward.

public int get(int index)

{

return a[index];

}

public void set(int index, int value)

{

a[index] = value;

}

size and isEmpty

Returning the size of the list is easy since we have a variable, numElements, keeping track of the size:

public int size()

{

return numElements;

}

Returning whether the list is empty or not is also straightforward—just return true if numElements is 0 and false otherwise. The code below does this in one line.

public boolean isEmpty()

{

return numElements==0;

}

contains

To determine if the list contains an element or not, we can loop through the list, checking each element in turn. If we find the element we're looking for, return true. If we get all the way through the list without finding what we're looking for, then return false.

public boolean contains(int value)

{

for (int i=0; i<numElements; i++)

if (a[i] == value)

return true;

return false;

}

toString

Our toString method returns the elements of the list separated by commas and enclosed in square brackets, like [1, 2, 3]. The way we do this is to build up a string as we loop through the array. As we encounter each element, we add it along with a comma and a space to the string. When we're through, we have an extra comma and space at the end of the string. The final line below uses the substring method to take all of the string except for the extra comma and space and then add a closing bracket.

There is one place we have to be a little careful, and that is if the list is empty. In that case, the substring call will fail because the ending index will be negative. So we just take care of the empty list separately.

@Override

public String toString()

{

if (numElements == 0)

return "[]";

String s = "[";

for (int i=0; i<numElements; i++)

s += a[i] + ", ";

return s.substring(0, s.length()-2) + "]";

}

Adding and deleting things

First, we will occasionally need to increase the capacity of the list as we run out of room. We have a private method (that won't be visible to users of the class since it is only intended to be used by our methods themselves) that doubles the capacity of the list.

private void enlarge()

{

capacity *= 2;

int[] newArray = new int[capacity];

for (int i=0; i<a.length; i++)

newArray[i] = a[i];

a = newArray;

}

To add an element to a list we first make room, if necessary. Then we set the element right past the end of the list to the desired value and increase numElements by 1.

public void add(int value)

{

if (numElements == capacity)

enlarge();

a[numElements] = value;

numElements++;

}

To delete an element from the list at a specified index we shift everything to the right of that index left by one element and reduce numElements by 1.

public void delete(int index)

{

for (int i=index; i<numElements-1; i++)

a[i] = a[i+1];

numElements--;

}

Finally, we consider inserting a value at a specified index. We start, just like with add, by increasing the capacity if necessary. We then shift everything from that index onward right by one element to make room for the new element. Then we add in the new element and increase numElements by 1.

public void insert(int value, int index)

{

if (numElements == capacity)

enlarge();

for (int i=numElements; i>index; i--)

a[i] = a[i-1];

a[index] = value;

numElements++;

}

The entire AList class

Here is the entire class.

public class AList

{

private int[] a;

private int numElements;

private int capacity;

public AList()

{

this.capacity = 100;

a = new int[capacity];

numElements = 0;

}

public int get(int index)

{

return a[index];

}

public void set(int index, int value)

{

a[index] = value;

}

public boolean isEmpty()

{

return numElements==0;

}

public int size()

{

return numElements;

}

public boolean contains(int value)

{

for (int i=0; i<numElements; i++)

if (a[i] == value)

return true;

return false;

}

@Override

public String toString()

{

if (numElements == 0)

return "[]";

String s = "[";

for (int i=0; i<numElements; i++)

s += a[i] + ", ";

return s.substring(0,s.length()-2) + "]";

}

private void enlarge()

{

capacity *= 2;

int[] newArray = new int[capacity];

for (int i=0; i<a.length; i++)

newArray[i] = a[i];

a = newArray;

}

public void add(int value)

{

if (numElements == capacity)

enlarge();

a[numElements] = value;

numElements++;

}

public void delete(int index)

{

for (int i=index; i<numElements-1; i++)

a[i] = a[i+1];

numElements--;

}

public void insert(int value, int index)

{

if (numElements == capacity)

enlarge();

for (int i=numElements; i>index; i--)

a[i] = a[i-1];

a[index] = value;

numElements++;

}

}

Using the class

Here is how we would create an object from this class and call a few of its methods:

AList list = new AList(); list.add(2); list.add(3); System.out.println(list.size());

Important notes

A few notes about this class (and most of the others we will develop later in the book): First, this is just the bare bones of a class whose purpose is to demonstrate how dynamic arrays work. If we were going to use this class for something important we should add some error checking. For example, the get and set methods should throw exceptions if the user were to try to get or set an index less than 0 or greater than numElements-1.
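Here is one way the index checking might look. This is only a sketch: the class name CheckedList and the helper checkIndex are made up, the class is trimmed down to just what is needed to show the idea, and throwing IndexOutOfBoundsException is one reasonable choice among several.

```java
import java.util.Arrays;

// Hypothetical trimmed-down list showing index checks in get and set.
class CheckedList {
    private int[] a = new int[100];
    private int numElements = 0;

    public void add(int value) {
        if (numElements == a.length)
            a = Arrays.copyOf(a, a.length * 2);   // double the capacity when full
        a[numElements++] = value;
    }

    // Throws an exception unless index is between 0 and numElements-1.
    private void checkIndex(int index) {
        if (index < 0 || index >= numElements)
            throw new IndexOutOfBoundsException("Index " + index + ", size " + numElements);
    }

    public int get(int index) {
        checkIndex(index);
        return a[index];
    }

    public void set(int index, int value) {
        checkIndex(index);
        a[index] = value;
    }
}
```

Without the check, calling get(5) on a list with three elements would quietly return whatever happens to be sitting in the unused part of the array.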

Second, after writing code for a class like this, it is important to check edge cases. For example, we should test not only how the insert method works when inserting at the middle of a list, but we should also check how it works when inserting an element at the left or right end or how insertion works on an empty list. We should also check how insert and add work when the list size bumps up against the capacity.

Last, you should probably not use this class for anything important. Java has a dynamic array class called ArrayList that you should use instead. The purpose of the class we built is to help us understand how dynamic arrays work.
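For reference, here is a short sketch of doing similar things with Java's ArrayList. The class name ArrayListDemo and the method build are made up for the example. Note that ArrayList's method names differ a bit from ours: inserting is add(index, value) and deleting is remove(index).

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical sketch of basic ArrayList usage.
class ArrayListDemo {
    static List<Integer> build() {
        List<Integer> list = new ArrayList<>();
        list.add(2);         // add to the end: [2]
        list.add(3);         // [2, 3]
        list.add(1, 99);     // insert 99 at index 1: [2, 99, 3]
        list.set(0, 5);      // replace the value at index 0: [5, 99, 3]
        list.remove(2);      // delete the element at index 2: [5, 99]
        return list;
    }

    public static void main(String[] args) {
        List<Integer> list = build();
        System.out.println(list + " has size " + list.size());
    }
}
```

ArrayList holds objects rather than primitive values, so a list of integers is declared with the wrapper type Integer.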

Linked lists

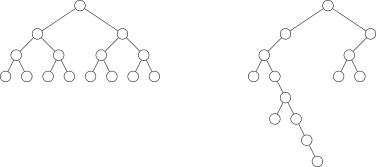

In the dynamic array approach to lists, the key to finding an element at a specific index is that the elements of the array are laid out one after another, so we know where everything is. Each element takes up the same amount of memory and an easy computation tells how far from the start the desired element is.

The problem with this is when we insert or delete things, we have to maintain this property of array elements being all right next to each other. This can involve moving quite a few elements around. Also, once the array backing our list fills up, we have to find somewhere else in memory to hold our list and copy all the elements over.

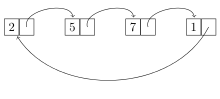

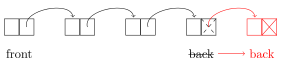

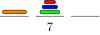

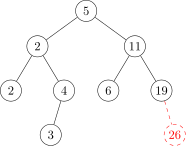

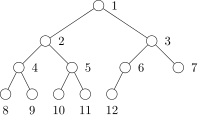

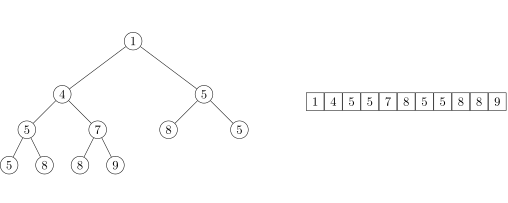

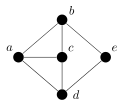

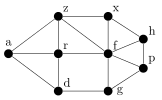

A linked list is a different approach to lists. List elements are allowed to be widely separated in memory. This eliminates the problems mentioned above for dynamic arrays, but then how do we get from one list element to the next? That's what the “link” in linked list is about. Along with each data element in the list, we also have a link, which points to where the next element in the list is located. See the figure below.

The linked list shown above is often drawn like this:

Each item in a linked list is called a node. Each node consists of a data value as well as a link to the next node in the list. The last node's link is null; in other words, it is a link going nowhere. This indicates the end of the list.

Implementing a linked list

Here is the beginning of our linked list class.

Note especially that we create a class called Node to represent a node in the list. The Node class has two variables, one to hold the node's data, and one for the link to the next node. The tricky part here is the link is a Node object itself, so the class is self-referential.

public class LList

{

private class Node

{

public int data;

public Node next;

public Node(int data, Node next)

{

this.data = data;

this.next = next;

}

}

private Node front;

public LList()

{

front = null;

}

}

There are a few things to note here. First, we have declared Node to be a nested class, a part of the LList class. This is because only the LList class needs to deal with nodes; nothing outside of the LList class needs the Node class. Secondly, notice that the class variables are declared public. We could declare them private and use getters and setters, but it's partly traditional with linked lists, and frankly a little easier, to just make Node's fields public. Since Node is such a small class and it is private to the LList class, this will not cause any problems.

The LList class actually has only one class variable, a Node object called front, which represents the first element in the list. This is all we need. If we want anything further down in the list, we just start at front and keep following links until we get where we want.

The isEmpty, size, and contains methods

Determining if the list is empty is as simple as checking whether the front of the list is set to null.

public boolean isEmpty()

{

return front==null;

}

To find the size of the list, we walk through the list, following links and keeping count, until we get to the end of the list, which is marked by a null link.

public int size()

{

int count = 0;

Node n = front;

while (n != null)

{

count++;

n = n.next;

}

return count;

}

Determining if a value is in the list can be done similarly:

public boolean contains(int value)

{

Node n = front;

while (n != null)

{

if (n.data == value)

return true;

n = n.next;

}

return false;

}

It is worth stopping to understand how we are looping through the list, as most of the other linked list methods will use a similar kind of loop. We start at the front node and move through the list with the line n=n.next, which moves to the next element. We know we've reached the end of the list when our node is equal to null.

To picture a linked list, imagine a phone chain. Phone chains aren't in use much anymore, but the way they used to work is if school or work was canceled because of a snowstorm, the person in charge would call someone and tell them about the cancelation. That person would then call the next person in the chain and tell them. Then that person would call the next person, etc. The process of each person dialing the other person's number and calling them to tell the message is akin to the process we follow in our loops of following links.

And if we imagine that each person in the phone chain only has the next person's phone number, we have the same situation as a linked list, where each node only knows the location of the next node. This is different from arrays, where we know the location of everything. An array would be the equivalent of everyone having phone numbers in order, like 345-0000, 345-0001, 345-0002, and looping through the array would be the equivalent of having a robo-dialer call each of the numbers in order, giving the message to each person.

Getting and setting elements

Getting and setting elements at a specific index is trickier with linked lists than with dynamic arrays. To get an element we have to walk the list, link by link, until we get to the node we want. We use a count variable to keep track of how many nodes we've visited. We will know that we have reached our desired node when the count equals the desired index. Here is the get method:

public int get(int index)

{

int count = 0;

Node n = front;

while (count < index)

{

count++;

n = n.next;

}

return n.data;

}

Setting an element is similar.

public void set(int index, int value)

{

int count = 0;

Node n = front;

while (count < index)

{

count++;

n = n.next;

}

n.data = value;

}

Adding something to the front of the list

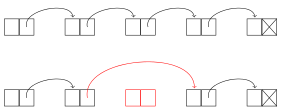

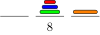

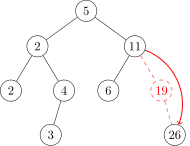

Adding an element to the front of a linked list is very quick. We create a new node that contains the value and point it to the front of the list. This node then becomes our new front, so we set front equal to it. See the figure below.

We can do this all in one line:

public void addToFront(int value)

{

front = new Node(value, front);

}

Adding something to the back of the list

To add to the back of a linked list, we walk the list until we get to the end, create the new node, and set the end node's link to point to the new node. If the list is empty, this won't work, so we instead just set front equal to the new node. See the figure below:

Here is the code:

public void add(int value)

{

if (front == null)

{

front = new Node(value, null);

return;

}

Node n = front;

while (n.next != null)

n = n.next;

n.next = new Node(value, null);

}

We see that adding to the front is O(1), while adding to the end is O(n). We could make adding to the end into O(1) if we have another class variable, back, that keeps track of the last node in the list. This would add a few lines of code to some of the other methods as we would occasionally need to modify the back variable.
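Here is a sketch of what the back variable might look like in code. It is written as a separate small class, called TailList here just for illustration, so that it stands on its own; only add, addToFront, and toString are shown.

```java
// Hypothetical sketch: a linked list that tracks its last node for O(1) add.
class TailList {
    private static class Node {
        int data;
        Node next;

        Node(int data, Node next) {
            this.data = data;
            this.next = next;
        }
    }

    private Node front = null;
    private Node back = null;    // last node in the list, or null if the list is empty

    public void add(int value) {
        Node node = new Node(value, null);
        if (front == null)
            front = node;        // empty list: the new node is both front and back
        else
            back.next = node;    // otherwise link it in after the old last node
        back = node;
    }

    public void addToFront(int value) {
        front = new Node(value, front);
        if (back == null)
            back = front;        // the list was empty, so this node is also the back
    }

    @Override
    public String toString() {
        StringBuilder sb = new StringBuilder("[");
        for (Node n = front; n != null; n = n.next) {
            sb.append(n.data);
            if (n.next != null)
                sb.append(", ");
        }
        return sb.append("]").toString();
    }
}
```

No loop is needed in add anymore. The price is that any method that can change the last node, such as delete, would also have to keep back up to date.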

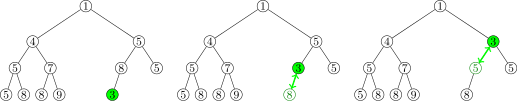

Deleting a node

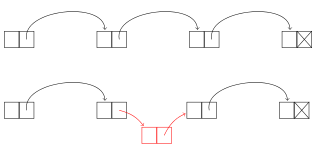

To delete a node, we reroute a link as shown below (the middle node is being deleted):

We first have to get to the node before the one to be deleted. We do this with a loop like we used for the get and set methods, except that we stop right before the deleted node. Once we get there, we can use the following line to reroute around the deleted node:

n.next = n.next.next;

This sets the current node's link to point to its neighbor's link, essentially removing its neighbor from the list. Here is the code of the method. Note the special case for deleting the first element of the list.

public void delete(int index)

{

if (index==0)

{

front = front.next;

return;

}

int count = 0;

Node n = front;

while (count < index-1)

{

count++;

n = n.next;

}

n.next = n.next.next;

}

One other thing that is worth mentioning is that the deleted node is still there in memory but we don't have any way to get to it, so it is essentially gone and forgotten about. Eventually Java's garbage collector will recognize that nothing is pointing to it and recycle its memory for future use.

Inserting a node

To insert a node, we first create the node to be inserted and then reroute a link to put it into the list. The rerouting code is very similar to what we did for deleting a node. Here is how the rerouting works:

To do the insertion, we first loop through the list to get to the node right before the place where the new node goes. This is just like what we did for the delete method. We can create the new node and reroute in one line:

n.next = new Node(value, n.next);

Here is the code for the insert method.

public void insert(int index, int value)

{

if (index == 0)

{

front = new Node(value, front);

return;

}

int count = 0;

Node n = front;

while (count < index-1)

{

count++;

n = n.next;

}

n.next = new Node(value, n.next);

}

The entire LList class

Here is the entire linked list class:

public class LList

{

private class Node

{

public int data;

public Node next;

public Node(int data, Node next)

{

this.data = data;

this.next = next;

}

}

private Node front;

public LList()

{

front = null;

}

public boolean isEmpty()

{

return front==null;

}

public int size()

{

int count = 0;

Node n = front;

while (n != null)

{

count++;

n = n.next;

}

return count;

}

public int get(int index)

{

int count = 0;

Node n = front;

while (count < index)

{

count++;

n = n.next;

}

return n.data;

}

public void set(int index, int value)

{

int count = 0;

Node n = front;

while (count < index)

{

count++;

n = n.next;

}

n.data = value;

}

public boolean contains(int value)

{

Node n = front;

while (n != null)

{

if (n.data == value)

return true;

n = n.next;

}

return false;

}

@Override

public String toString()

{

if (front == null)

return "[]";

Node n = front;

String s = "[";

while (n != null)

{

s += n.data + ", ";

n = n.next;

}

return s.substring(0, s.length()-2) + "]";

}

public void add(int value)

{

if (front == null)

{

front = new Node(value, null);

return;

}

Node n = front;

while (n.next != null)

n = n.next;

n.next = new Node(value, null);

}

public void addToFront(int value)

{

front = new Node(value, front);

}

public void delete(int index)

{

if (index == 0)

{

front = front.next;

return;

}

int count = 0;

Node n = front;

while (count < index-1)

{

count++;

n = n.next;

}

n.next = n.next.next;

}

public void insert(int index, int value)

{

if (index == 0)

{

front = new Node(value, front);

return;

}

int count = 0;

Node n = front;

while (count < index-1)

{

count++;

n = n.next;

}

n.next = new Node(value, n.next);

}

}

Using the class

Here is how we would create an object from this class and call a few of its methods. This is essentially the same code as we tested the dynamic array class with. Remember that linked lists and dynamic arrays are two ways of implementing the same data structure, a list.

LList list = new LList(); list.add(2); list.add(3); System.out.println(list.size());

Working with linked lists

There are a number of exercises at the end of this chapter that ask you to write additional methods for the linked list class. These are particularly useful exercises for becoming comfortable working with linked lists. Here are a few helpful hints for working on them.

Basics

First, remember the basic idea of a linked list, that each item in the list is a node that contains a data value and a link to the next node in the list. Creating a new node is done with a line like below:

Node n = new Node(19, null);

This creates a node with value 19 and a link that points to nothing. Suppose we want to create a new node that points to another node in the list called m. Then we would do the following:

Node n = new Node(19, m);

To change the link of n to point to something else, say the front of the list, we could do the following:

n.next = front;

Remember that the front marker is a Node variable that keeps track of which node is at the front of the list.

Looping

To move through a linked list, a loop like below is used:

Node n = front;

while (n != null)

n = n.next;

This will loop through the entire list. We could use a counter if we just want to loop to a certain index in the list, like below (looping to index 10):

Node n = front;

int count = 0;

while (count < 10)

{

count++;

n = n.next;

}

Using pictures

Many linked list methods just involve creating some new nodes and moving some links around. To keep everything straight, it can be helpful to draw a picture. For instance, below is the picture we used earlier when describing how to add a new node to the front of a list:

And we translated this picture into the following line:

public void addToFront(int value)

{

front = new Node(value, front);

}

This line does a few things. It creates a new node, points that node to the old front of the list, and moves the front marker to be at this new node.

Edge cases

When working with linked lists, as with many other things, it is important to worry about edge cases. These are special cases like an empty list, a list with one element, deleting the first or last element in a list, etc. Often with linked list code, one case will handle almost everything except for one or two special edge cases that require their own code.

For instance, as we saw earlier, to delete a node from a list, we loop through the list until we get to the node right before the node to be deleted and reroute that node's link around the deleted node. But this won't work if we are deleting the first element of the list. So that's an edge case, and we need a special case to handle that. Here again is the code for the delete method:

public void delete(int index)

{

// edge case for deleting the first element

if (index == 0)

{

front = front.next;

return;

}

// This is the code for deleting any other element

int count = 0;

Node n = front;

while (count < index-1)

{

count++;

n = n.next;

}

n.next = n.next.next;

}

Null pointer exceptions

When working with nodes, there is a good chance of making a mistake and getting a NullPointerException from Java. This usually happens if you use an object variable that has never been set to refer to an actual object. For example, if myNode is a class variable declared as below, the following will cause a null pointer exception:

Node myNode;

// some code not involving myNode might go here

if (myNode.data == 3)

// some more code goes here...

The correction is to either set myNode equal to some other Node object or initialize it like below:

Node myNode = new Node(42, null);

In summary, if you get a null pointer exception, it usually indicates an object that has been declared but not initialized.

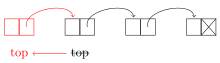

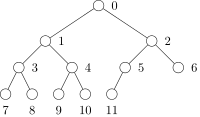

Doubly- and circularly-linked lists

There are a couple of useful variants on linked lists. The first is a circularly-linked list, in which the link of the last node in the list points back to the start of the list, as shown below.

Working with circularly-linked lists is similar to working with ordinary ones except that there's no null link at the end of the list (you might say the list technically has no start and no end, just being a continuous loop). One thing to do if you need to access the “end” of the list is to loop until node.next equals front. However, a key advantage of a circularly-linked list is that you can loop over its elements continuously.
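The two loops described above can be sketched as follows. The class name CircularDemo and its methods are made up for this illustration, and the list is built from an array that is assumed to be non-empty.

```java
// Hypothetical sketch of looping through a circularly-linked list.
class CircularDemo {
    private static class Node {
        int data;
        Node next;

        Node(int data) {
            this.data = data;
        }
    }

    // Builds a circularly-linked list from values (assumed non-empty)
    // and returns its front node.
    private static Node build(int[] values) {
        Node front = new Node(values[0]);
        Node last = front;
        for (int i = 1; i < values.length; i++) {
            last.next = new Node(values[i]);
            last = last.next;
        }
        last.next = front;       // close the loop instead of ending with null
        return front;
    }

    // Finds the "end" of the list by looping until next equals front.
    static int lastValue(int[] values) {
        Node front = build(values);
        Node n = front;
        while (n.next != front)
            n = n.next;
        return n.data;
    }

    // Follows links for the given number of steps, wrapping around as needed.
    static String walk(int[] values, int steps) {
        StringBuilder sb = new StringBuilder();
        Node n = build(values);
        for (int i = 0; i < steps; i++) {
            sb.append(n.data).append(' ');
            n = n.next;
        }
        return sb.toString().trim();
    }
}
```

Walking seven steps through the list [5, 31, 19] visits 5, 31, 19, 5, 31, 19, 5. There is never a null link to run into.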

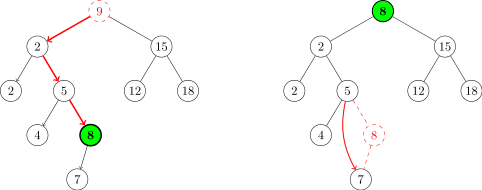

The second variant of a linked list is a doubly-linked list, where each node has two links, one to the next node and one to the previous node, as shown below:

Having two links can be useful for methods that need to know the predecessor of a node or for traversing the list in reverse order. Doubly-linked lists are a little more complex to implement because we now have to keep track of two links for each node. To keep track of all the links, it can be helpful to sketch things out. For instance, here is a sketch useful for adding a node to the front of a doubly-linked list.

We see from the sketch that we need to create a new node whose next link points to the old front and whose prev link is null. We also have to set the prev link of the old front to point to this new node, and then set the front marker to the new node. A special case is needed if the list is empty.
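Translating that description into code might look like the sketch below. The class name DList and the back variable are assumptions made for this example; back is there only so we can walk the list in reverse and confirm that the prev links were set correctly.

```java
// Hypothetical sketch of adding to the front of a doubly-linked list.
class DList {
    private static class Node {
        int data;
        Node prev;
        Node next;

        Node(int data, Node prev, Node next) {
            this.data = data;
            this.prev = prev;
            this.next = next;
        }
    }

    private Node front = null;
    private Node back = null;    // assumed extra variable tracking the last node

    public void addToFront(int value) {
        Node node = new Node(value, null, front);   // prev is null, next is the old front
        if (front == null)
            back = node;         // special case: the list was empty
        else
            front.prev = node;   // the old front now points back to the new node
        front = node;
    }

    // Returns the elements from front to back, following next links.
    @Override
    public String toString() {
        StringBuilder sb = new StringBuilder("[");
        for (Node n = front; n != null; n = n.next) {
            sb.append(n.data);
            if (n.next != null)
                sb.append(", ");
        }
        return sb.append("]").toString();
    }

    // Returns the elements from back to front, following prev links.
    public String reversed() {
        StringBuilder sb = new StringBuilder("[");
        for (Node n = back; n != null; n = n.prev) {
            sb.append(n.data);
            if (n.prev != null)
                sb.append(", ");
        }
        return sb.append("]").toString();
    }
}
```

The order of the statements matters: the old front's prev link has to be set before front is moved to the new node.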

Comparison of dynamic arrays and linked lists

First, let's look at running times of the methods. Let n denote the size of the list.

- Getting/setting a specific index

Dynamic array: O(1). Getting and setting both consist of a single array operation, which translates to a quick memory address calculation at the machine level. The size of the list has no effect on the calculation.

Linked list: O(n). To get or set a specific index requires us to follow links until we get to the desired index. In the worst case, we have to go through all n links (when the index is the end of the list), and on average we have to go through n/2 links.

- isEmpty

Dynamic array: O(1). We just check whether the class variable numElements is 0.

Linked list: O(1). We just check whether the class variable front is equal to null.

- size

Dynamic array: O(1). We just return the value of the class variable numElements.

If we did not have that class variable, then it would be an O(n) operation because we would have to loop through and count how many elements there are. The tradeoff here is that the insert, delete, and add methods have to update numElements each time they are called, adding a little bit of time to each of them. Essentially, by having the class variable, we have spread out the size method's work over all those other methods.

Linked list: O(n). Our code must loop through the entire list to count how many elements there are. On the other hand, we could make this O(1) by adding a class variable numElements to the list and updating it whenever we add or delete elements, just like we did for our dynamic array class.

- contains

Dynamic array: O(n). Our code searches through the list, element-by-element, until it finds what it's looking for or gets to the end of the list. If the desired value is at the end of the list, this will take n steps. On average, it will take n/2 steps.

Linked list: O(n). Our linked-list code behaves similarly to the dynamic array code.

- Adding and deleting elements

Dynamic array: First, increasing the capacity of the list is an O(n) operation, but this has to be done only rarely. The rest of the add method is O(1). So overall, adding is said to be amortized O(1), which is to say that most of the time adding is an O(1) operation, but it can on rare occasions be O(n).

On the other hand, inserting or deleting an element in the middle of the list is O(n) on average because we have to move around big chunks of the array either to make room or to make sure the elements stay contiguous.

Linked list: The actual operation of inserting or deleting a node is an O(1) operation as it just involves moving around a link and creating a new node (when inserting). However, we have to walk through the list to get to the location where the insertion or deletion happens, and this takes O(n) operations on average. So, the way we've implemented things, a single insert or delete will run in O(n) time.

However, once you're at the proper location, inserting or deleting is very quick. Consider a removeAll method that removes all occurrences of a specific value. To implement this we could walk through the list, deleting each occurrence of the value as we encounter it. Since we are already walking through the list, no further searching is needed to find a node and the deletions will all be O(1) operations. So removeAll will run in O(n) time.

Also, inserting or deleting at the front of the list is an O(1) operation as we don't have to go anywhere to find the front. And, as noted earlier, adding to a list is currently O(n) because we have to walk through the entire list to get there, but it can be made into an O(1) operation if we add another class variable to keep track of the end of the list.
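The last two points, a one-pass removeAll and an O(1) add that uses an extra class variable for the end of the list, might look something like this. The class TailList and its back variable are hypothetical names for this sketch and are not part of the chapter's LList class.

```java
// Sketch of an int linked list with a back pointer and a one-pass removeAll.
// TailList and its members are hypothetical names, not the chapter's LList.
class TailList {
    private class Node {
        int data;
        Node next;
        Node(int data, Node next) { this.data = data; this.next = next; }
    }

    private Node front = null;
    private Node back = null;   // last node, so add() need not walk the list

    // O(1): link the new node after back instead of searching for the end.
    public void add(int value) {
        Node node = new Node(value, null);
        if (front == null)
            front = node;
        else
            back.next = node;
        back = node;
    }

    // O(n): a single walk; each deletion just redirects one link.
    public void removeAll(int value) {
        // Drop matching nodes at the front.
        while (front != null && front.data == value)
            front = front.next;
        if (front == null) {
            back = null;
            return;
        }
        Node n = front;   // n is always a node we are keeping
        while (n.next != null) {
            if (n.next.data == value)
                n.next = n.next.next;   // unlink the match, stay at n
            else
                n = n.next;
        }
        back = n;   // n is now the last remaining node
    }

    @Override
    public String toString() {
        StringBuilder sb = new StringBuilder("[");
        for (Node n = front; n != null; n = n.next) {
            sb.append(n.data);
            if (n.next != null)
                sb.append(", ");
        }
        return sb.append("]").toString();
    }

    public static void main(String[] args) {
        TailList list = new TailList();
        for (int x : new int[] {5, 1, 5, 5, 2, 5})
            list.add(x);
        list.removeAll(5);
        list.add(9);   // still works because removeAll kept back up to date
        System.out.println(list);   // [1, 2, 9]
    }
}
```

The price of the O(1) add is that every method that changes the end of the list, such as removeAll here, has to keep back up to date.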

As for which is better, dynamic arrays or linked lists, there is no definite answer, as they each have their uses. In fact, for most applications either will work fine. For applications where speed is critical, dynamic arrays are better when a lot of random access is involved, whereas linked lists can be better when it comes to insertions and deletions. In terms of memory usage, either one is fine for most applications. For really large lists, remember that linked lists require extra memory for all the links: in Java, each link is a reference (typically 4 or 8 bytes), and each node is a separate object with its own overhead. Dynamic arrays have their own potential problems in that not every space allocated for the array is used, and for really large arrays, finding a contiguous block of memory could be tricky.

We also have to consider cache performance. Not all memory is created equal. RAM access can be slow, so modern processors include a small amount of cache memory that is faster to access than regular RAM. Arrays, occupying contiguous chunks of memory, generally give better cache performance than linked lists, whose nodes can be spread out in memory. Chunks of the array can be copied into the cache, making it faster to iterate through a dynamic array than a linked list.
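The amortized O(1) claim for the dynamic array's add method in the comparison above can be checked by counting how many element copies the resizing causes. The sketch below only simulates the bookkeeping; the starting capacity of 10 and the doubling rule are assumptions, and AmortizedDemo and copiesForAppends are hypothetical names.

```java
// Counts element copies made by a doubling dynamic array during n appends.
// The starting capacity of 10 and the doubling rule are assumptions.
class AmortizedDemo {
    static long copiesForAppends(int n) {
        int capacity = 10;
        int size = 0;
        long copies = 0;
        for (int i = 0; i < n; i++) {
            if (size == capacity) {
                copies += size;   // resizing copies every existing element
                capacity *= 2;
            }
            size++;               // the append itself is one O(1) store
        }
        return copies;
    }

    public static void main(String[] args) {
        for (int n : new int[] {10, 1000, 1000000}) {
            long copies = copiesForAppends(n);
            System.out.println(n + " appends: " + copies + " copies in total");
        }
    }
}
```

Under these assumptions, 1000 appends cause 10 + 20 + 40 + 80 + 160 + 320 + 640 = 1270 copies. In general the total stays below 2n, so the average cost per append is bounded by a constant even though an individual append is occasionally O(n).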

Making the linked list class generic

The linked list class we created in this chapter works only for integer lists. We could modify it to work with strings by going through and replacing the int declarations with String declarations, but that would be tedious. And then if we wanted to modify it to work with doubles or longs or some object, we would have to do the same tedious replacements all over again. It would be nice if we could modify the class once so that it works with any type. This is where Java's generics come in.

The process of making a class work with generics is pretty straightforward. We will add a little syntax to the class to indicate we are using generics and then replace the int declarations with T declarations. The name T, short for “type,” acts as a placeholder for a generic type. (You can use other names instead of T, but the T is somewhat standard.)

First, we have to introduce the generic type in the class declaration:

public class LList<T>

The angle brackets are used to indicate a generic type. After that, the only thing we have to do is change int to T everywhere we refer to the data type of the values stored in the list. For example, the get method changes as shown below:

Original version:

    public int get(int index)
    {
        int count = 0;
        Node n = front;
        while (count < index)
        {
            count++;
            n = n.next;
        }
        return n.data;
    }

Generic version:

    public T get(int index)
    {
        int count = 0;
        Node n = front;
        while (count < index)
        {
            count++;
            n = n.next;
        }
        return n.data;
    }

Notice that the return type of the method is now type T instead of int. Notice also that index stays an int. Indices are still integers, so they don't change. It's only the data type stored in the list that changes to type T.

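One other small change is that == no longer does what we want when comparing values of a generic type. For objects, == checks whether two references point to the same object rather than whether the values are equal, so we have to replace it with a call to .equals(), just like when comparing strings in Java.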
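A small illustration of the difference, using two strings with the same contents. The class EqualsDemo and its helper methods are hypothetical, and the use of Objects.equals (which also copes with null values) is an addition here; the chapter's class calls .equals() directly.

```java
import java.util.Objects;

// Hypothetical helpers contrasting reference comparison and value comparison.
class EqualsDemo {
    // Generic comparison by value; Objects.equals also copes with nulls.
    static <T> boolean sameValue(T a, T b) {
        return Objects.equals(a, b);
    }

    // Generic comparison by reference: true only for the very same object.
    static <T> boolean sameObject(T a, T b) {
        return a == b;
    }

    public static void main(String[] args) {
        String s = new String("hello");
        String t = new String("hello");   // equal contents, distinct object
        System.out.println(sameObject(s, t));        // false
        System.out.println(sameValue(s, t));         // true
        System.out.println(sameValue(null, null));   // true
    }
}
```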

Here is the entire class:

    public class LList<T>
    {
        // Each node stores one value of type T and a link to the next node.
        private class Node
        {
            public T data;
            public Node next;

            public Node(T data, Node next)
            {
                this.data = data;
                this.next = next;
            }
        }

        // First node of the list, or null if the list is empty.
        private Node front;

        public LList()
        {
            front = null;
        }

        public boolean isEmpty()
        {
            return front==null;
        }

        // Counts the nodes by walking the whole list.
        public int size()
        {
            int count = 0;
            Node n = front;
            while (n != null)
            {
                count++;
                n = n.next;
            }
            return count;
        }

        public T get(int index)
        {
            int count = 0;
            Node n = front;
            while (count < index)
            {
                count++;
                n = n.next;
            }
            return n.data;
        }

        public void set(int index, T value)
        {
            int count = 0;
            Node n = front;
            while (count < index)
            {
                count++;
                n = n.next;
            }
            n.data = value;
        }

        // Compares with equals() rather than ==, since T is an object type.
        public boolean contains(T value)
        {
            Node n = front;
            while (n != null)
            {
                if (n.data.equals(value))
                    return true;
                n = n.next;
            }
            return false;
        }

        @Override
        public String toString()
        {
            if (front == null)
                return "[]";

            Node n = front;
            String s = "[";
            while (n != null)
            {
                s += n.data + ", ";
                n = n.next;
            }
            // Drop the trailing ", " before closing the bracket.
            return s.substring(0, s.length()-2) + "]";
        }

        // Adds to the end of the list by walking to the last node.
        public void add(T value)
        {
            if (front == null)
            {
                front = new Node(value, null);
                return;
            }

            Node n = front;
            while (n.next != null)
                n = n.next;
            n.next = new Node(value, null);
        }

        public void addToFront(T value)
        {
            front = new Node(value, front);
        }

        public void delete(int index)
        {
            if (index == 0)
            {
                front = front.next;
                return;
            }

            // Stop at the node just before the one being deleted.
            int count = 0;
            Node n = front;
            while (count < index-1)
            {
                count++;
                n = n.next;
            }
            n.next = n.next.next;
        }

        public void insert(int index, T value)
        {
            if (index == 0)
            {
                front = new Node(value, front);
                return;
            }

            // Stop at the node just before the insertion point.
            int count = 0;
            Node n = front;
            while (count < index-1)
            {
                count++;
                n = n.next;
            }
            n.next = new Node(value, n.next);
        }
    }

Here is an example of the class in action:

    LList<String> list = new LList<String>();
    list.add("first");
    list.add("second");
    System.out.println(list);

And the output is

[first, second]

The Java Collections Framework

While it is instructive to write our own list classes, it is best to use the ones provided by Java for most practical applications. Java's list classes have been extensively tested and optimized, whereas ours are mostly designed to help us understand how the two types of lists work. On the other hand, it's nice to know that if we need something slightly different from what Java provides, we could code it.

Java's Collections Framework contains, among many other things, an interface called List. Two of the classes implementing it are a dynamic array class called ArrayList and a linked list class called LinkedList.

Here are some sample declarations:

    List<Integer> list = new ArrayList<Integer>();

    List<Integer> list = new LinkedList<Integer>();

The Integer in the angle brackets indicates the data type that the list will hold. This is an example of Java generics. We can put any class name here, but not a primitive type like int, which is why we write Integer. Here are some examples of other types of lists we could have:

| Type | Description |
|---|---|
| List<String> | list of strings |
| List<Double> | list of doubles |
| List<Card> | list of Card objects (assuming you've created a Card class) |
| List<List<Integer>> | list of integer lists |
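As a brief sketch of how such declarations are used, the hypothetical DeclarationDemo class below builds a list of strings and a list of integer lists. The triangle method is an invented example, not something from the Collections Framework.

```java
import java.util.ArrayList;
import java.util.LinkedList;
import java.util.List;

// Hypothetical demo of declaring and filling lists of different types.
class DeclarationDemo {
    // Builds a list of integer lists: row i holds the values 0 through i.
    static List<List<Integer>> triangle(int rows) {
        List<List<Integer>> result = new ArrayList<List<Integer>>();
        for (int i = 0; i < rows; i++) {
            List<Integer> row = new LinkedList<Integer>();
            for (int j = 0; j <= i; j++)
                row.add(j);
            result.add(row);
        }
        return result;
    }

    public static void main(String[] args) {
        List<String> words = new ArrayList<String>();
        words.add("first");
        words.add("second");
        System.out.println(words);         // [first, second]
        System.out.println(triangle(3));   // [[0], [0, 1], [0, 1, 2]]
    }
}
```

Because both variables are declared with the List interface type, either ArrayList or LinkedList can be used on the right-hand side without changing the rest of the code.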

List methods

Here are the most useful methods of the List interface. They are available to both ArrayLists and LinkedLists.

| Method | Description |
|---|---|
| add(x) | adds x to the end of the list |
| add(i,x) | adds x to the list at index i |
| contains(x) | returns whether x is in the list |
| equals(a) | returns whether the list equals a |
| get(i) | returns the value at index i |
| indexOf(x) | returns the first index (location) of x in the list, or -1 if x is not in the list |
| isEmpty() | returns whether the list is empty |
| lastIndexOf(x) | like indexOf, but returns the last index |
| remove(i) | removes the item at index i |
| remove(x) | removes the first occurrence of the object x |
| removeAll(c) | removes every element of the list that is also in the collection c |
| set(i,x) | sets the value at index i to x |
| size() | returns the number of elements in the list |
| subList(i,j) | returns a slice of a list from index i to j-1, sort of like substring |
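Here is a short, hedged walk through several of these methods on a list of integers; ListMethodsDemo and demo are hypothetical names. One thing to watch for with integer lists is that remove(0) removes by index, while remove(Integer.valueOf(20)) removes by value.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;

// Hypothetical demo exercising several List methods on an integer list.
class ListMethodsDemo {
    // Applies a series of List operations and returns the final list.
    static List<Integer> demo() {
        List<Integer> list = new ArrayList<Integer>(Arrays.asList(10, 20, 30, 20, 40));
        list.add(50);                                // [10, 20, 30, 20, 40, 50]
        list.add(0, 5);                              // [5, 10, 20, 30, 20, 40, 50]
        System.out.println(list.indexOf(20));        // 2
        System.out.println(list.lastIndexOf(20));    // 4
        System.out.println(list.subList(1, 3));      // [10, 20]

        list.remove(0);                              // by index: removes the 5
        list.remove(Integer.valueOf(20));            // by value: first 20 only
        System.out.println(list);                    // [10, 30, 20, 40, 50]

        list.removeAll(Arrays.asList(30, 40));       // argument is a collection
        list.set(0, 11);
        System.out.println(list.contains(20) + " " + list.size());   // true 3
        return list;
    }

    public static void main(String[] args) {
        System.out.println(demo());                  // [11, 20, 50]
    }
}
```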

Methods of java.util.Collections

There are some useful static Collections methods that operate on lists (and, in some cases, other Collections objects that we will see later):

| Method | Description |

|---|---|

addAll(a, x1,..., xn) | adds x1, x2, …, xn to the list a |

binarySearch(a,x) | returns whether x is in the collection a |

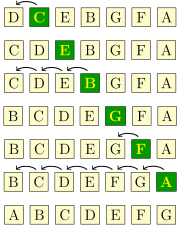

frequency(a,x) | returns a count of how many times x occurs in a |